Advanced Calculus of Several Variables (1973)

Part I. Euclidean Space and Linear Mappings

Chapter 5. THE KERNEL AND IMAGE OF A LINEAR MAPPING

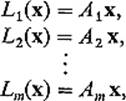

Let L : V → W be a linear mapping of vector spaces. By the kernel of L, denoted by Ker L, is meant the set of all those vectors $v \in V$ such that $L(v) = 0 \in W$,

$$\operatorname{Ker} L = \{v \in V : L(v) = 0\}.$$

By the image of L, denoted by Im L or L(V), is meant the set of all those vectors $w \in W$ such that w = L(v) for some vector $v \in V$,

$$\operatorname{Im} L = \{w \in W : w = L(v) \text{ for some } v \in V\}.$$

It follows easily from these definitions, and from the linearity of L, that the sets Ker L and Im L are subspaces of V and W respectively (Exercises 5.1 and 5.2). We are concerned in this section with the dimensions of these subspaces.

Example 1 If a is a nonzero vector in $\mathbb{R}^n$, and $L : \mathbb{R}^n \to \mathbb{R}$ is defined by L(x) = a · x, then Ker L is the (n − 1)-dimensional subspace of $\mathbb{R}^n$ that is orthogonal to the vector a, and Im L = $\mathbb{R}$.

Example 2 If $P : \mathbb{R}^3 \to \mathbb{R}^2$ is the projection P(x1, x2, x3) = (x1, x2), then Ker P is the x3-axis and Im P = $\mathbb{R}^2$.
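Examples 1 and 2 can be checked on concrete vectors. The following is a minimal sketch, assuming the hypothetical choice a = (1, 2, 2) in three dimensions; the names `dot`, `preimage`, `k1`, and `k2` are illustrative and do not come from the text.

```python
from fractions import Fraction

def dot(a, x):
    """Usual dot product of two vectors of equal length."""
    return sum(ai * xi for ai, xi in zip(a, x))

# Example 1 with a hypothetical choice of the nonzero vector a in R^3.
a = (1, 2, 2)

def L(x):
    return dot(a, x)

# Two independent vectors orthogonal to a; they span the 2-dimensional kernel.
k1 = (2, -1, 0)
k2 = (2, 0, -1)

def preimage(t):
    """A vector x with L(x) = t, namely x = (t / (a . a)) a, so Im L is all of R."""
    scale = Fraction(t) / dot(a, a)
    return tuple(scale * ai for ai in a)

# Example 2: projection of R^3 onto the first two coordinates.
def P(x):
    return (x[0], x[1])

print(L(k1), L(k2))    # both are 0, so k1 and k2 lie in Ker L
print(L(preimage(7)))  # recovers the target value 7
print(P((0, 0, 5)))    # a point of the x3-axis is sent to the origin
```

Exact rational arithmetic is used in `preimage` so that the check L(x) = t is not disturbed by rounding.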

The assumption that the kernel of L : V → W is the zero vector alone, Ker L = 0, has the important consequence that L is one-to-one, meaning that L(v1) = L(v2) implies that v1 = v2 (that is, L is one-to-one if no two vectors of V have the same image under L).

Theorem 5.1 Let L : V → W be linear, with V being n-dimensional. If Ker L = 0, then L is one-to-one, and Im L is an n-dimensional subspace of W.

PROOF To show that L is one-to-one, suppose L(v1) = L(v2). Then L(v1 − v2) = 0, so v1 − v2 = 0 since Ker L = 0.

To show that the subspace Im L is n-dimensional, start with a basis v1, . . . , vn for V. Since it is clear (by linearity of L) that the vectors L(v1), . . . , L(vn) generate Im L, it suffices to prove that they are linearly independent. Suppose

$$t_1 L(v_1) + \cdots + t_n L(v_n) = 0.$$

Then

$$L(t_1 v_1 + \cdots + t_n v_n) = 0,$$

so t1v1 + · · · + tn vn = 0 because Ker L = 0. But then t1 = · · · = tn = 0 because the vectors v1, . . . , vn are linearly independent. ∎

An important special case of Theorem 5.1 is that in which W is also n-dimensional; it then follows that Im L = W (see Exercise 5.3).

Theorem 5.2 Let $L : \mathbb{R}^n \to \mathbb{R}^m$ be defined by L(x) = Ax, where A = (aij) is an m × n matrix. Then

(a) Ker L is the orthogonal complement of that subspace of $\mathbb{R}^n$ that is generated by the row vectors $A_1, \ldots, A_m$ of A, and

(b) Im L is the subspace of $\mathbb{R}^m$ that is generated by the column vectors $A^1, \ldots, A^n$ of A.

PROOF (a) follows immediately from the fact that L is described by the scalar equations

$$L_i(x) = A_i \cdot x = a_{i1}x_1 + \cdots + a_{in}x_n, \qquad i = 1, \ldots, m,$$

so that the ith coordinate Li(x) is zero if and only if x is orthogonal to the row vector $A_i$.

(b) follows immediately from the fact that Im L is generated by the images L(e1), . . . , L(en) of the standard basis vectors in $\mathbb{R}^n$, whereas $L(e_i) = A^i$, i = 1, . . . , n, by the definition of matrix multiplication. ∎
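Both parts of Theorem 5.2 can be observed on a small matrix. The following is a minimal sketch, assuming a hypothetical 2 × 3 matrix `A`; the helper names `apply` and `column` are illustrative only.

```python
# Hypothetical 2 x 3 matrix, so L maps R^3 to R^2.
A = [[1, 0, 2],
     [0, 1, 3]]

def apply(A, x):
    """Return Ax; the i-th coordinate is the dot product of row i with x."""
    return [sum(a * xi for a, xi in zip(row, x)) for row in A]

def column(A, j):
    """Return the j-th column of A as a list."""
    return [row[j] for row in A]

n = len(A[0])
standard_basis = [[1 if i == j else 0 for i in range(n)] for j in range(n)]

# Part (b): the image of the j-th standard basis vector is the j-th column,
# so the columns generate Im L.
images = [apply(A, e) for e in standard_basis]
columns = [column(A, j) for j in range(n)]
print(images == columns)

# Part (a): x lies in Ker L exactly when it is orthogonal to every row.
x = [-2, -3, 1]
print(apply(A, x))
```

Here `apply` computes each coordinate of Ax as a row-by-vector dot product, which is exactly the description of L used in the proof of part (a).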

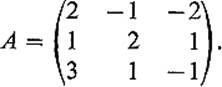

Example 3 Suppose that the matrix of $L : \mathbb{R}^3 \to \mathbb{R}^3$ is

Then $A_3 = A_1 + A_2$, but the row vectors $A_1$ and $A_2$ are not collinear, so it follows from 5.2(a) that Ker L is 1-dimensional, since it is the orthogonal complement of the 2-dimensional subspace of $\mathbb{R}^3$ that is spanned by $A_1$ and $A_2$. Since the column vectors of A are linearly dependent, $3A^1 = 4A^2 − 5A^3$, but not collinear, it follows from 5.2(b) that Im L is 2-dimensional.
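The reasoning of Example 3 can be traced on a concrete matrix. The following is a minimal sketch using a hypothetical matrix `A`, chosen only because it satisfies the two relations quoted in the example (third row equal to the sum of the first two, and three times the first column equal to four times the second minus five times the third); it is an assumption, not necessarily the matrix of the example.

```python
# Hypothetical 3 x 3 matrix satisfying the relations stated in Example 3.
A = [[1, 2, 1],
     [3, 1, -1],
     [4, 3, 0]]

def apply(A, x):
    """Return Ax as a list of row-by-vector dot products."""
    return [sum(a * xi for a, xi in zip(row, x)) for row in A]

def cross(u, v):
    """Cross product in R^3; it is nonzero exactly when u and v are not collinear."""
    return [u[1] * v[2] - u[2] * v[1],
            u[2] * v[0] - u[0] * v[2],
            u[0] * v[1] - u[1] * v[0]]

rows = A
cols = [[row[j] for row in A] for j in range(3)]

# Row relation: third row = first row + second row.
row_relation = rows[2] == [p + q for p, q in zip(rows[0], rows[1])]

# Column relation: 3 * (first column) = 4 * (second column) - 5 * (third column).
col_relation = [3 * c for c in cols[0]] == [4 * b - 5 * c for b, c in zip(cols[1], cols[2])]

# A vector orthogonal to the first two rows (hence to all rows) spans Ker L.
normal = cross(rows[0], rows[1])

print(row_relation, col_relation)
print(normal, apply(A, normal))
```

Because the first two rows span a plane, their cross product spans its orthogonal complement, which by 5.2(a) is the 1-dimensional kernel.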

Note that, in this example, dim Ker L + dim Im L = 3. This is an illustration of the following theorem.

Theorem 5.3 If L : V → W is a linear mapping of vector spaces, with dim V = n, then

$$\dim \operatorname{Ker} L + \dim \operatorname{Im} L = n.$$

PROOF Let w1, . . . , wp be a basis for Im L, and choose vectors $v_1, \ldots, v_p \in V$ such that L(vi) = wi for i = 1, . . . , p. Also let u1, . . . , uq be a basis for Ker L. It will then suffice to prove that the vectors v1, . . . , vp, u1, . . . , uq constitute a basis for V.

To show that these vectors generate V, consider $v \in V$. Then there exist numbers a1, . . . , ap such that

$$L(v) = a_1 w_1 + \cdots + a_p w_p,$$

because w1, . . . , wp is a basis for Im L. Since wi = L(vi) for each i, by linearity we have

$$L(v) = L(a_1 v_1 + \cdots + a_p v_p),$$

or

$$L(v - a_1 v_1 - \cdots - a_p v_p) = 0,$$

so $v - a_1 v_1 - \cdots - a_p v_p \in \operatorname{Ker} L$. Hence there exist numbers b1, . . . , bq such that

$$v - a_1 v_1 - \cdots - a_p v_p = b_1 u_1 + \cdots + b_q u_q,$$

or

$$v = a_1 v_1 + \cdots + a_p v_p + b_1 u_1 + \cdots + b_q u_q,$$

as desired.

To show that the vectors v1, . . . , vp, u1, . . . , uq are linearly independent, suppose that

$$s_1 v_1 + \cdots + s_p v_p + t_1 u_1 + \cdots + t_q u_q = 0.$$

Then

$$s_1 w_1 + \cdots + s_p w_p = 0$$

because L(vi) = wi and L(uj) = 0. Since w1, . . . , wp are linearly independent, it follows that s1 = · · · = sp = 0. But then t1u1 + · · · + tq uq = 0 implies that t1 = · · · = tq = 0 also, because the vectors u1, . . . , uq are linearly independent. By Proposition 2.1 this concludes the proof. ∎
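Theorem 5.3 can be illustrated by computing a basis of the kernel and the dimension of the image for a mapping L(x) = Ax. The following is a minimal sketch, assuming a hypothetical 3 × 4 matrix `A` and using Gauss-Jordan elimination over the rationals; the functions `rref` and `kernel_basis` are illustrative helpers, not part of the text.

```python
from fractions import Fraction

def rref(rows):
    """Return (reduced row echelon form, list of pivot columns) over the rationals."""
    m = [[Fraction(x) for x in row] for row in rows]
    pivots = []
    r = 0  # index of the next pivot row
    for c in range(len(m[0])):
        p = next((i for i in range(r, len(m)) if m[i][c] != 0), None)
        if p is None:
            continue  # no pivot in this column: it is a free column
        m[r], m[p] = m[p], m[r]
        piv = m[r][c]
        m[r] = [x / piv for x in m[r]]
        for i in range(len(m)):
            if i != r and m[i][c] != 0:
                f = m[i][c]
                m[i] = [x - f * y for x, y in zip(m[i], m[r])]
        pivots.append(c)
        r += 1
    return m, pivots

def kernel_basis(rows):
    """One basis vector of Ker L per free column of the reduced matrix."""
    m, pivots = rref(rows)
    n = len(rows[0])
    basis = []
    for f in (c for c in range(n) if c not in pivots):
        x = [Fraction(0)] * n
        x[f] = Fraction(1)
        for r, p in enumerate(pivots):
            x[p] = -m[r][f]
        basis.append(x)
    return basis

def apply(A, x):
    return [sum(a * xi for a, xi in zip(row, x)) for row in A]

# Hypothetical matrix of a mapping from R^4 to R^3 (third row = first + second).
A = [[1, 2, 0, 1],
     [0, 1, 1, 0],
     [1, 3, 1, 1]]

dim_im = len(rref(A)[1])       # number of pivots = dimension of the column space
ker = kernel_basis(A)
dim_ker = len(ker)
print(dim_ker, dim_im, dim_ker + dim_im == len(A[0]))
```

The pivot columns count the dimension of Im L and the free columns count the dimension of Ker L, so the two numbers add up to the number n of columns.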

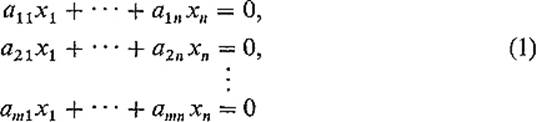

We give an application of Theorem 5.3 to the theory of linear equations. Consider the system

$$\begin{aligned} a_{11}x_1 + \cdots + a_{1n}x_n &= 0, \\ &\;\;\vdots \\ a_{m1}x_1 + \cdots + a_{mn}x_n &= 0 \end{aligned} \tag{1}$$

of homogeneous linear equations in x1, . . . , xn. As we have observed in Example 9 of Section 3, the space S of solutions (x1, . . . , xn) of (1) is the orthogonal complement of the subspace of $\mathbb{R}^n$ that is generated by the row vectors of the m × n matrix A = (aij). That is,

$$S = \operatorname{Ker} L,$$

where $L : \mathbb{R}^n \to \mathbb{R}^m$ is defined by L(x) = Ax (see Theorem 5.2).

Now the row rank of the m × n matrix A is by definition the dimension of the subspace of $\mathbb{R}^n$ generated by the row vectors of A, while the column rank of A is the dimension of the subspace of $\mathbb{R}^m$ generated by the column vectors of A.

Theorem 5.4 The row rank of the m × n matrix A = (aij) and the column rank of A are equal to the same number r. Furthermore dim S = n − r, where S is the space of solutions of the system (1) above.

PROOF We have observed that S is the orthogonal complement to the subspace of $\mathbb{R}^n$ generated by the row vectors of A, so

$$(\text{row rank of } A) + \dim S = n \tag{2}$$

by Theorem 3.4. Since S = Ker L, and by Theorem 5.2, Im L is the subspace of $\mathbb{R}^m$ generated by the column vectors of A, we have

$$(\text{column rank of } A) + \dim S = n \tag{3}$$

by Theorem 5.3. But Eqs. (2) and (3) immediately give the desired results.
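Theorem 5.4 can be checked numerically by computing the rank of a matrix and of its transpose. The following is a minimal sketch, assuming a hypothetical 3 × 4 matrix `A`; the functions `rank` and `transpose` are illustrative helpers built on Gaussian elimination over the rationals.

```python
from fractions import Fraction

def rank(rows):
    """Dimension of the span of the given row vectors, by Gaussian elimination."""
    m = [[Fraction(x) for x in row] for row in rows]
    r = 0  # number of pivots found so far
    for c in range(len(m[0])):
        p = next((i for i in range(r, len(m)) if m[i][c] != 0), None)
        if p is None:
            continue
        m[r], m[p] = m[p], m[r]
        for i in range(r + 1, len(m)):
            f = m[i][c] / m[r][c]
            m[i] = [x - f * y for x, y in zip(m[i], m[r])]
        r += 1
    return r

def transpose(A):
    """Rows of the transpose are the columns of A."""
    return [list(col) for col in zip(*A)]

# Hypothetical coefficient matrix of a homogeneous system in 4 unknowns.
A = [[1, 2, 3, 4],
     [2, 4, 6, 8],
     [1, 0, 1, 0]]

row_rank = rank(A)
column_rank = rank(transpose(A))
dim_S = len(A[0]) - row_rank  # dimension of the solution space, n - r

print(row_rank, column_rank, dim_S)
```

Applying `rank` to the transpose measures the span of the columns, so the comparison of `row_rank` and `column_rank` is a direct instance of the first statement of the theorem.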

Recall that if U and V are subspaces of $\mathbb{R}^n$, then

$$U \cap V = \{x \in \mathbb{R}^n : x \in U \text{ and } x \in V\}$$

and

$$U + V = \{x \in \mathbb{R}^n : x = u + v \text{ with } u \in U \text{ and } v \in V\}$$

are both subspaces of $\mathbb{R}^n$ (Exercises 1.2 and 1.3). Let

$$U \times V = \{(x, y) \in \mathbb{R}^{2n} : x \in U \text{ and } y \in V\}.$$

Then U × V is a subspace of $\mathbb{R}^{2n}$ with dim(U × V) = dim U + dim V (Exercise 5.4).

Theorem 5.5 If U and V are subspaces of $\mathbb{R}^n$, then

$$\dim(U + V) + \dim(U \cap V) = \dim U + \dim V. \tag{4}$$

In particular, if U + V = $\mathbb{R}^n$, then

$$\dim U + \dim V - \dim(U \cap V) = n.$$

PROOF Let $L : U \times V \to \mathbb{R}^n$ be the linear mapping defined by

$$L(u, v) = u - v.$$

Then Im L = U + V and $\operatorname{Ker} L = \{(x, x) \in \mathbb{R}^{2n} : x \in U \cap V\}$, so dim Im L = dim(U + V) and $\dim \operatorname{Ker} L = \dim(U \cap V)$. Since dim U × V = dim U + dim V by the preceding remark, Eq. (4) now follows immediately from Theorem 5.3. ∎

Theorem 5.5 is a generalization of the familiar fact that two planes in $\mathbb{R}^3$ “generally” intersect in a line (“generally” meaning that this is the case if the two planes together contain enough linearly independent vectors to span $\mathbb{R}^3$). Similarly a 3-dimensional subspace and a 4-dimensional subspace of $\mathbb{R}^7$ generally intersect in a point (the origin); two 7-dimensional subspaces of $\mathbb{R}^{10}$ generally intersect in a 4-dimensional subspace.
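The three dimension counts in the preceding paragraph can be reproduced from Theorem 5.5. The following is a minimal sketch, assuming each subspace is given by a list of basis vectors and using coordinate subspaces as hypothetical examples; `rank`, `e`, and `intersection_dim` are illustrative helpers. The dimension of U + V is the rank of the combined list of basis vectors, and the dimension of the intersection is then read off from the theorem.

```python
from fractions import Fraction

def rank(rows):
    """Dimension of the span of the given vectors, by Gaussian elimination."""
    m = [[Fraction(x) for x in row] for row in rows]
    r = 0  # number of pivots found so far
    for c in range(len(m[0])):
        p = next((i for i in range(r, len(m)) if m[i][c] != 0), None)
        if p is None:
            continue
        m[r], m[p] = m[p], m[r]
        for i in range(r + 1, len(m)):
            f = m[i][c] / m[r][c]
            m[i] = [x - f * y for x, y in zip(m[i], m[r])]
        r += 1
    return r

def e(i, n):
    """Standard basis vector e_i of R^n (1-based index)."""
    return [1 if k == i - 1 else 0 for k in range(n)]

def intersection_dim(U, V):
    """dim(U ∩ V) = dim U + dim V - dim(U + V); U and V must be lists of basis vectors."""
    return len(U) + len(V) - rank(U + V)

# Two coordinate planes in R^3 that together span R^3.
planes = intersection_dim([e(1, 3), e(2, 3)], [e(2, 3), e(3, 3)])

# A 3-dimensional and a 4-dimensional subspace of R^7 that together span R^7.
r7 = intersection_dim([e(i, 7) for i in (1, 2, 3)], [e(i, 7) for i in (4, 5, 6, 7)])

# Two 7-dimensional subspaces of R^10 that together span R^10.
r10 = intersection_dim([e(i, 10) for i in range(1, 8)], [e(i, 10) for i in range(4, 11)])

print(planes, r7, r10)
```

For these coordinate subspaces the intersections can also be seen directly (the second coordinate axis, the origin, and the span of the fourth through seventh basis vectors), which agrees with the computed dimensions.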

Exercises

5.1 If L : V → W is linear, show that Ker L is a subspace of V.

5.2 If L : V → W is linear, show that Im L is a subspace of W.

5.3 Suppose that V and W are n-dimensional vector spaces, and that F : V → W is linear, with Ker F = 0. Then F is one-to-one by Theorem 5.1. Deduce that Im F = W, so that the inverse mapping $G = F^{-1} : W \to V$ is defined. Prove that G is also linear.

5.4 If U and V are subspaces of $\mathbb{R}^n$, prove that $U \times V$ is a subspace of $\mathbb{R}^{2n}$, and that dim(U × V) = dim U + dim V. Hint: Consider bases for U and V.

5.5 Let V and W be n-dimensional vector spaces. If L : V → W is a linear mapping with Im L = W, show that Ker L = 0.

5.6 Two vector spaces V and W are called isomorphic if and only if there exist linear mappings S : V → W and T : W → V such that $S \circ T$ and $T \circ S$ are the identity mappings of W and V respectively. Prove that two finite-dimensional vector spaces are isomorphic if and only if they have the same dimension.

5.7 Let V be a finite-dimensional vector space with an inner product $\langle\ ,\ \rangle$. The dual space V* of V is the vector space of all linear functions V → $\mathbb{R}$. Prove that V and V* are isomorphic. Hint: Let v1, . . . , vn be an orthonormal basis for V, and define $\theta_j \in V^*$ by θj(vi) = 0 unless i = j, θj(vj) = 1. Then prove that θ1, . . . , θn constitute a basis for V*.