A Book of Abstract Algebra, Second Edition (1982)

Chapter 28. VECTOR SPACES

Many physical quantities, such as length, area, weight, and temperature, are completely described by a single real number. On the other hand, many other quantities arising in scientific measurement and everyday reckoning are best described by a combination of several numbers. For example, a point in space is specified by giving its three coordinates with respect to an xyz coordinate system.

Here is an example of a different kind: A store handles 100 items; its monthly inventory is a sequence of 100 numbers (a1, a2, …, a100) specifying the quantities of each of the 100 items currently in stock. Such a sequence of numbers is usually called a vector. When the store is restocked, a vector is added to the current inventory vector. At the end of a good month of sales, a vector is subtracted.

As this example shows, it is natural to add vectors by adding corresponding components, and subtract vectors by subtracting corresponding components. If the store manager in the preceding example decided to double inventory, each component of the inventory vector would be multiplied by 2. This shows that a natural way of multiplying a vector by a real number k is to multiply each component by k. This kind of multiplication is commonly called scalar multiplication.

Historically, as the use of vectors became widespread and they came to be an indispensable tool of science, vector algebra grew to be one of the major branches of mathematics. Today it forms the basis for much of advanced calculus, the theory and practice of differential equations, statistics, and vast areas of applied mathematics. Scientific computation is enormously simplified by vector methods; for example, 3, or 300, or 3000 individual readings of scientific instruments can be expressed as a single vector.

In any branch of mathematics it is elegant and desirable (but not always possible) to find a simple list of axioms from which all the required theorems may be proved. In the specific case of vector algebra, we wish to select as axioms only those particular properties of vectors which are absolutely necessary for proving further properties of vectors. And we must select a sufficiently complete list of axioms so that, by using them and them alone, we can prove all the properties of vectors needed in mathematics.

A delightfully simple list of axioms is available for vector algebra. The remarkable fact about this axiom system is that, although we conceive of vectors as finite sequences (a1, a2, …, an) of numbers, nothing in the axioms actually requires them to be such sequences! Instead, vectors are treated simply as elements in a set, satisfying certain equations. Here is our basic definition:

A vector space over a field F is a set V, with two operations + and · called vector addition and scalar multiplication, such that

1. V with vector addition is an abelian group.

2. For any k ∊ F and a ∊ V, the scalar product ka is an element of V, subject to the following conditions: for all k, l ∊ F and a, b ∊ V,

(a) k(a) + b) = ka + kb,

(b) (k + l)a = ka + la,

(c) k(la) = (kl)a,

(d) 1a = a.

The elements of V are called vectors and the elements of the field F are called scalars,

In the following exposition the field F will not be specifically referred to unless the context requires it. For notational clarity, vectors will be written in bold type and scalars in italics.

The traditional example of a vector space is the set ![]() n of all n-tuples of real numbers, (a1, a2, …, an), with the operations

n of all n-tuples of real numbers, (a1, a2, …, an), with the operations

(a1, a2, …, an) + (b1, b2, …, bn) = (a1 + b1, a2 + b2, …, an + bn)

and k(a1, a2, …, an) = (ka1, ka2, …, kan)

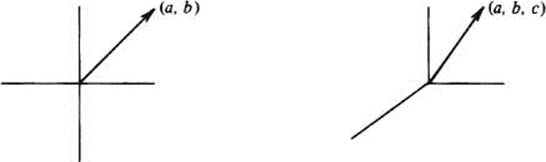

For example, ![]() 2 is the set of all two-dimensional vectors (a, b), while

2 is the set of all two-dimensional vectors (a, b), while ![]() 3 is the set of all vectors (a, b, c) in euclidean space. (See the figure on the next page.)

3 is the set of all vectors (a, b, c) in euclidean space. (See the figure on the next page.)

However, these are not the only vector spaces! Our definition of vector space is so very simple that many other things, quite different in appearance from the traditional vector spaces, satisfy the conditions of our definition and are therefore, legitimately, vector spaces.

For example, ![]()

![]() , you may recall, is the set of all functions from

, you may recall, is the set of all functions from ![]() to

to ![]() . We define the sum f + g of two functions by the rule

. We define the sum f + g of two functions by the rule

[f + g](x)= f(x) + g(x)

and we define the product af, of a real number a and a function f, by

[af](x) = af(x)

It is very easy to verify that ![]()

![]() , with these operations, satisfies all the conditions needed in order to be a vector space over the field

, with these operations, satisfies all the conditions needed in order to be a vector space over the field ![]() .

.

As another example, let ![]() denote the set of all polynomials with real coefficients. Polynomials are added as usual, and scalar multiplication is defined by

denote the set of all polynomials with real coefficients. Polynomials are added as usual, and scalar multiplication is defined by

k(a0 + a1x + ⋯ + anxn) = (ka0) + (ka1)x + ⋯ + (kan)xn

Again, it is not hard to see that ![]() is a vector space over

is a vector space over ![]() .

.

Let V be a vector space. Since V with addition alone is an abelian group, there is a zero element in V called the zero vector, written as 0. Every vector a in V has a negative, written as −a. Finally, since V with vector addition is an abelian group, it satisfies the following conditions which are true in all abelian groups:

a + b = a + c implies b= c (1)

a + b = 0 implies a = −b and b = −a (2)

−(a + b) = (−a) + (−b)and −(−a) = a (3)

There are simple, obvious rules for multiplication by zero and by negative scalars. They are contained in the next theorem.

Theorem 1 If V is a vector space, then:

(i)0a = 0, for every a ∈ V

(ii)k0 = 0, for every scalar k.

(iii)If ka = 0, then k = 0 or a = 0.

(iv)(−1)a = −a for every a ∈ V.

To prove Rule (i), we observe that

0a = (0 + 0)a = 0a + 0a

hence 0 + 0a = 0a + 0a. It follows by Condition (1) that 0 = 0a.

Rule (ii) is proved similarly. As for Rule (iii), if k = 0, we are done. If k ≠0, we may multiply ka = 0 by 1/k to get a = 0. Finally, for Rule (iv), we have

a + (−1)a = 1a + (−1)a = (1 + (−1))a = 0a = 0

so by Condition (2), (−1)a = −a.

Let V be a vector space, and U ⊆ V. We say that U is closed with respect to scalar multiplication if ka ∈ U for every scalar k and every a ∈ U. We call U a subspace of V if U is closed with respect to addition and scalar multiplication. It is easy to see that if V is a vector space over the field F, and U is a subspace of V, then U is a vector space over the same field F.

If a1,a2, …, an are in V and k1, k2, …, kn are scalars, then the vector

k1a1 + k2 a2 + ⋯ + knan

is called a linear combination of a1,a2, …, an. The set of all the linear combinations of a1,a2, …, an is a subspace of V. (This fact is exceedingly easy to verify.)

If U is the subspace consisting of all the linear combinations of a1,a2, …, an, we call U the subspace spanned by a1,a2, …, an. An equivalent way of saying the same thing is as follows: a space (or subspace) U is spanned by a1,a2, …, an iff every vector in U is a linear combination of a1, a2, …, an.

If U is spanned by a1,a2, …, an, we also say that a1,a2, …, an span U.

Let S = {a1,a2, …, an} be a set of distinct vectors in a vector space V. Then S is said to be linearly dependent if there are scalars k1, …, kn, not all zero, such that

k1a1 + k2a2 + ⋯ + knan = 0 (4)

Obviously this is the same as saying that at least one of the vectors in S is a linear combination of the remaining ones. [Solve for any vector ai, in Equation (4) having a nonzero coefficient.]

If S = {a1,a2, …, an} is not linearly dependent, then it is linearly independent. That is, S is linearly independent iff

k1a1 + k2a2 + ⋯ + knan = 0 implies k1 = k2 = ⋯ = kn = 0

This is the same as saying that no vector in S is equal to a linear combination of the other vectors in S.

It is obvious from these definitions that any set of vectors containing the zero vector is linearly dependent. Furthermore, the set {a}, containing a single nonzero vector a, is linearly independent.

The next two lemmas, although very easy and at first glance rather trite, are used to prove the most fundamental theorems of this subject.

Lemma 1 If {a1, a2, …, an} is linearly dependent, then some ai, is a linear combination of the preceding ones, a1, a2, …, ai−1.

PROOF: Indeed, if {a1, a2, …, an} is linearly dependent, then k1a1 + ⋯ + knan = 0 for coefficients k1,k2, …,kn which are not all zero. If ki is the last nonzero coefficient among them, then k1ai + ⋯ + kiai = 0, and this equation can be used to solve for ai in terms of a1,⋯,ai−1.■

Let {a1 a2, …,![]() , …, an} denote the set {a1,a2, …, an} after removal of ai.

, …, an} denote the set {a1,a2, …, an} after removal of ai.

Lemma 2 If {a1,a2, …, an} spans V, and ai is a linear combination of preceding vectors, then {a1, …,![]() , …,an} still spans V.

, …,an} still spans V.

PROOF: Our assumption is that ai, = k1a1 + ⋯ + ki−1ai−1 for some scalars k1, …, ki−1. Since every vector b∈ V is a linear combination

b = l1a1 + ⋯ + liai + ⋯ + lnan

it can also be written as a linear combination

b = l1a1 + ⋯ + li(k1 a1 + ⋯ +ki−1 ai−1) + ⋯ ln an

in which ai does not figure. ■

A set of vectors {a1, …, an} in V is called a basis of V if it is linearly independent and spans V.

For example, the vectors ε1 = (1, 0, 0), ε2 = (0,1,0), and ε3 = (0,0,1) form a basis of (![]() 3. They are linearly independent because, obviously, no vector in {ε1, ε2, ε3} is equal to a linear combination of preceding ones. [Any linear combination of ε1 and ε2 is of the form aε1 + bε2 = (a, b, 0), whereas ε3 is not of this form; similarly, any linear combination of ε1 alone is of the form aε1 = (a, 0, 0), and ε2 is not of that form.] The vectors ε1,ε2, ε3 span

3. They are linearly independent because, obviously, no vector in {ε1, ε2, ε3} is equal to a linear combination of preceding ones. [Any linear combination of ε1 and ε2 is of the form aε1 + bε2 = (a, b, 0), whereas ε3 is not of this form; similarly, any linear combination of ε1 alone is of the form aε1 = (a, 0, 0), and ε2 is not of that form.] The vectors ε1,ε2, ε3 span ![]() 3 because any vector (a, b, c) in

3 because any vector (a, b, c) in ![]() can be written as (a, b, c) = aε1 + bε2 + cε3.

can be written as (a, b, c) = aε1 + bε2 + cε3.

Actually, {ε1, ε2, ε3} is not the only basis of ![]() 3. Another basis of

3. Another basis of ![]() 3 consists of the vectors (1, 2, 3), (1,0, 2), and (3, 2,1); in fact, there are infinitely many different bases of

3 consists of the vectors (1, 2, 3), (1,0, 2), and (3, 2,1); in fact, there are infinitely many different bases of ![]() 3. Nevertheless, all bases of

3. Nevertheless, all bases of ![]() 3 have one thing in common: they contain exactly three vectors! This is a consequence of our next theorem:

3 have one thing in common: they contain exactly three vectors! This is a consequence of our next theorem:

Theorem 2 Any two bases of a vector space V have the same number of elements.

PROOF: Suppose, on the contrary, that V has a basis A = {a1, …, an} and a basis B = {b1, …, bm} where m ≠ n. To be specific, suppose n < m. From this assumption we will derive a contradiction.

Put the vector bl in the set A, so A now contains {b1, a1 a2, …, an}. This set is linearly dependent because b1 is a linear combination of a1, …, an. But then, by Lemma 1, some ai is a linear combination of preceding vectors. By Lemma 2 we may expel this ai, and the remaining set {b1, al, …, ![]() , …, an} still spans V.

, …, an} still spans V.

Repeat this argument a second time by putting b2 in A, so A now contains {b2, b1, a1, a2, …,![]() , …, an}. This set is linearly dependent because {b1,a1, …,

, …, an}. This set is linearly dependent because {b1,a1, …, ![]() , …, an} spans V and therefore b2 is a linear combination of b1, a1, …,

, …, an} spans V and therefore b2 is a linear combination of b1, a1, …, ![]() , …, an. By Lemma 1, some aj is a linear combination of preceding vectors in A, so by Lemma 2 we may remove aj, and {b2, b1, a1, a2, …,

, …, an. By Lemma 1, some aj is a linear combination of preceding vectors in A, so by Lemma 2 we may remove aj, and {b2, b1, a1, a2, …,![]() , …,

, …, ![]() , an} still spans V.

, an} still spans V.

This argument is repeated n times. Each time, a vector from B is put into A and a vector ak is removed. At the end of the nth repetition, A contains only b1, …,bn, and {b1, …, bn} still spans V. But this is impossible because it implies that bn +1 is a linear combination of b1, …, bn, whereas in fact, B = {bl, …, bn, …, bm} is linearly independent!

This contradiction proves that any two bases of V must contain the same number of elements ! ■

If V has a basis {a1, …, an}, we call V a finite-dimensional vector space and say that V is of dimension n. In that case, by Theorem 2 every basis of V has exactly n elements.

In the sequel we consider only finite-dimensional vector spaces. The next two lemmas are quite interesting. The first one states that if {a1 …, am} spans V, there is a way of removing vectors from this set, one by one, until we are left with an independent set which still spans V.

Lemma 3 If the set {a1, …, am} spans V, it contains a basis of V.

PROOF: If {a1, …, am} is an independent set, it is a basis, and we are done. If not, some a1 is a linear combination of preceding ones, so {a1, …, ![]() , …, am} still spans V. Repeating this process, we discard vectors one by one from {a1 …,am} and, each time, the remaining vectors still span V. We keep doing this until the remaining set is independent. (In the worst case, this will happen when only one vector is left.) ■

, …, am} still spans V. Repeating this process, we discard vectors one by one from {a1 …,am} and, each time, the remaining vectors still span V. We keep doing this until the remaining set is independent. (In the worst case, this will happen when only one vector is left.) ■

The next lemma asserts that if {a1, …, as} is an independent set of vectors in V, there is a way of adding vectors to this set so as to get a basis of V.

Lemma 4 If the set {a1, …,as} is linearly independent, it can be extended to a basis of V.

PROOF: If {b1, …, bn} is any basis of V, then {a1, …, as, b1, …, bn} spans V. By the proof of Lemma 3, we may discard vectors from this set until we get a basis of V. Note that we never discard any ai, because, by hypothesis, ai, is not a linear combination of preceding vectors. ■

The next theorem is an immediate consequence of Lemmas 3 and 4.

Theorem 3 Let V have dimension n. If {a1, …, an} is an independent set, it is already a basis of V.If{bl, …,bn} spans V, it is already a basis of V.

If {a1, …, an} is a basis of V, then every vector c in V has a unique expression c = k1a1 + ⋯ + knan as a linear combination of a1, …, an. Indeed, if

c = k1 a1 + ⋯ + knan = l1a1 + ⋯ + lnan

then

(k1 − l1)a1 + ⋯ +(kn − ln)an = 0

hence

k1 − l1 = ⋯ = kn − ln = 0

so k1 = l1, … kn = ln. If c = k1al + ⋯ + knan,the coefficients k1, …, kn are called the coordinates of c with respect to the basis {al, …, an}. It is then convenient to represent c as the n-tupie

c = (k1, …, kn)

If U and V are vector spaces over a field F, a function h : U → V is a homomorphism if it satisfies the following two conditions:

h(a + b) = h(a) + h(b)

and

h(ka) = kh(a)

A homomorphism of vector spaces is also called a linear transformation.

If h : U→V is a linear transformation, its kernel [that is, the set of all a ∈ U such that h(a) = 0] is a subspace of U, called the null space of h. Homomorphisms of vector spaces behave very much like homomorphisms of groups and rings. Their properties are presented in the exercises.

EXERCISES

A. Examples of Vector Spaces

1 Prove that ![]() n, as defined on page 283, satisfies all the conditions for being a vector space over

n, as defined on page 283, satisfies all the conditions for being a vector space over ![]() .

.

2 Prove that ![]() (

(![]() ), as defined on page 284, is a vector space over

), as defined on page 284, is a vector space over ![]() .

.

3 Prove that ![]() ,as defined on page 284, is a vector space over

,as defined on page 284, is a vector space over ![]() .

.

4 Prove that ![]() 2(

2(![]() ), the set of all 2 × 2 matrices of real numbers, with matrix addition and the scalar multiplication

), the set of all 2 × 2 matrices of real numbers, with matrix addition and the scalar multiplication

![]()

is a vector space over ![]() .

.

B. Examples of Subspaces

# 1 Prove that {(a, b, c) : 2a − 3b + c = 0} is a subspace of ![]() 3.

3.

2 Prove that the set of all (x, y, z) ∈ ![]() 3 which satisfy the pair of equations ax + by + c = 0, dx + ey + f = 0 is a subspace of

3 which satisfy the pair of equations ax + by + c = 0, dx + ey + f = 0 is a subspace of ![]() 3.

3.

3 Prove that {f : f(l) = 0} is a subspace of ![]() (

(![]() ).

).

4 Prove that { f : f is a constant on the interval [0,1]} is a subspace of ![]() (

(![]() ).

).

5 Prove that the set of all even functions [that is, functions f such that f(x) = f(−x)] is a subspace of ![]() (

(![]() ). Is the same true for the set of all the odd functions [that is, functions f such that f(−x) = −f(x)]?

). Is the same true for the set of all the odd functions [that is, functions f such that f(−x) = −f(x)]?

6 Prove that the set of all polynomials of degree ≤n is a subspace of ![]()

C. Examples of Linear Independence and Bases

1 Prove that {(0,0,0,1), (0,0,1,1), (0,1,1,1), (1,1,1,1)} is a basis of ![]() 4.

4.

2 If a = (1, 2, 3,4) and b = (4, 3,2,1), explain why {a, b} may be extended to a basis of ![]() 4. Then find a basis of

4. Then find a basis of ![]() 4 which includes a and b.

4 which includes a and b.

3 Let A be the set of eight vectors (x, y, z) where x, y, z = 1, 2. Prove that A spans ![]() 3, and find a subset of A which is a basis of

3, and find a subset of A which is a basis of ![]() 3.

3.

4 If ![]() is the subspace of

is the subspace of ![]() consisting of all polynomials of degree ≤ n, prove that {1, x, x2, …, xn} is a basis of

consisting of all polynomials of degree ≤ n, prove that {1, x, x2, …, xn} is a basis of ![]() . Then find another basis of

. Then find another basis of ![]() .

.

5 Find a basis for each of the following subspaces of ![]() 3:

3:

# (a) S1 = {(x, y, z) : 3x − 2y + z = 0} (b) S2 = {(x, y, z) : x + y − z = 0 and 2x − y + z = 0}

6 Find a basis for the subspace of ![]() 3 spanned by the set of vectors (x, y, z) such that x2 + y2 + z2 = 1.

3 spanned by the set of vectors (x, y, z) such that x2 + y2 + z2 = 1.

7 Let U be the subspace of ![]() (

(![]() ) spanned by {cos2 x, sin2 x, cos 2x}. Find the dimension of U, and then find a basis of U.

) spanned by {cos2 x, sin2 x, cos 2x}. Find the dimension of U, and then find a basis of U.

8 Find a basis for the subspace of ![]() spanned by

spanned by

{x3 + x2 + x + 1, x2 + 1, x3 − x2 + x − 1, x2 − 1}

D. Properties of Subspaces and Bases

Let V be a finite-dimensional vector space. Let dim V designate the dimension of V. Prove each of the following:

1 If U is a subspace of V, then dim U ≤ dim V.

2 If U is a subspace of V, and dim U = dim V, then U = V.

3 Any set of vectors containing 0 is linearly dependent.

4 The set {a}, containing only one nonzero vector a, is linearly independent.

5 Any subset of an independent set is independent. Any set of vectors containing a dependent set is dependent.

# 6 If {a, b, c} is linearly independent, so is {a + b, b + c, a + c}.

7 If {a1, …, an} is a basis of V, so is {k1a1, …, k nan } for any nonzero scalars

8 The space spanned by {a1, …,an} is the same as the space spanned by {b1, …, bm} iff each ai is a linear combination of b1, …, bm, and each bj is a linear combination of a1 …, an.

E. Properties of Linear Transformations

Let U and V be finite-dimensional vector spaces over a field F, and let h : U → V be a linear transformation. Prove parts 1–3:

1 The kernel of h is a subspace of U. (It is called the null space of h.)

2The range of h is a subspace of V. (It is called the range space of h.)

3 h is injective iff the null space of h is equal to {0}.

Let Nbe the null space of h, and ![]() the range space of h. Let {a1, …, ar} be a basis of N. Extend it to a basis {al, …, ar, …, an} of U.

the range space of h. Let {a1, …, ar} be a basis of N. Extend it to a basis {al, …, ar, …, an} of U.

Prove parts 4–6:

4 Every vector b∈ ![]() is a linear combination of h(ar+1), …, h(an).

is a linear combination of h(ar+1), …, h(an).

# 5 {h(ar+1), …, h(an)} is linearly independent.

6 The dimension of ![]() is n − r.

is n − r.

7 Conclude as follows: for any linear transformation h, dim (domain h) = dim (null space of h) + dim (range space of h).

8 Let U and V have the same dimension n. Use part 7 to prove that h is injective iff h is surjective.

F. Isomorphism of Vector Spaces

Let U and V be vector spaces over the field F, with dim U = n and dim V = m. Let h : U → V be a homomorphism.

Prove the following:

1 Let h be injective. If {a1.. ., ar} is a linearly independent subset of U, then {h(a1), …, h(ar)} is a linearly independent subset of V.

# 2 h is injective iff dim U = dim h(U).

3 Suppose dim U = dim V; h is an isomorphism (that is, a bijective homomorphism) iff h is injective iff h is surjective.

4 Any n-dimensional vector space V over F is isomorphic to the space Fn of all n-tupies of elements of F.

†G. Sums of Vector Spaces

Let T and U be subspaces of V. The sum of T and U, denoted by T + U, is the set of all vectors a + b, where a ∈ T and b ∈ U.

1 Prove that T + U and T ∩ U are subspaces of V.

V is said to be the direct sum of T and U if V = T + U and T ∩ U = {0}. In that case, we write V = T ⊕ U.

# 2 Prove: V = T ⊕ U iff every vector c ∈ V can be written, in a unique manner, as a sum c = a + b where a ∈ U and b ∈ U.

3 Let T be a k-dimensional subspace of an n-dimensional space V. Prove that an (n − k)-dimensional subspace U exists such that V = T ⊕ U.

4 If T and U are arbitrary subspaces of V, prove that

dim (T + U) = dim T + dim U − dim (T ∩ U)