The history of mathematics: A brief course (2013)

Part VII. Special Topics

Chapter 43. Foundations of Real Analysis

The uncritical use of limiting processes, which did little harm when applied to analytic functions of a complex variable, led to acute problems in the case of general functions of a real variable. The attempts to avoid self-contradictory results led to a close scrutiny of the properties of the real line and the identification of certain hidden assumptions that were needed to establish standard results, such as Cauchy's “theorem” that the sum of an infinite series of continuous functions is continuous. This close scrutiny, in turn, led to set theory. Trigonometric series were involved at the beginning of set theory, although nowadays it is developed without any reference to them. We shall discuss set theory in the next chapter. The present chapter is devoted to that closer scrutiny of the real numbers.

43.1 What Is a Real Number?

We have already alluded to the fact that our modern notion of a real number as an infinite decimal expansion really goes back to the Eudoxan concept of a ratio. A great advance came in the seventeenth century, when analytic geometry was invented by Descartes and Fermat. In his Géométrie, Descartes showed how to replace a ratio in thought by a line, choosing a line arbitrarily called a unit, and letting any other line stand for the number represented by its ratio to that unit.

Euclid had not discussed the product of two lines. He spoke instead of the rectangle on the two lines. Stimulated by algebra, however, and the application of geometry to it, Descartes looked at the product of two lengths in a different way. As pure numbers, the product ab is simply the fourth proportional to 1 : a : b. That is, ab : b :: a : 1. He therefore fixed an arbitrary line that he called I to represent the number 1 and represented ab as the line that satisfied the proportion ab : b :: a : I, when a and b were lines representing two given numbers.

The notion of a real number had at last arisen, not as most people think of it today (an infinite decimal expansion) but as a ratio of line segments. Only a few decades later Newton defined a real number to be “the ratio of one magnitude to another magnitude of the same kind, arbitrarily taken as a unit.” Newton classified numbers as integers, fractions, and surds (Whiteside, 1967, Vol. 2, p. 7). Even with this clarification, however, mathematicians were inclined to gloss over certain difficulties. For example, there is an arithmetic rule according to which $\sqrt{a}\,\sqrt{b} = \sqrt{ab}$. In Descartes' geometric definition of the product of two real numbers, it is not obvious how this rule is to be proved. The use of the decimal system, with its easy approximations to irrational numbers, soothed the consciences of mathematicians and gave them the confidence to proceed with their development of the calculus. No one even seemed very concerned about the absence of any good geometric construction of cube roots and higher roots of real numbers. The real line answered the needs of algebra in that it gave a representation of any real root there might be of any algebraic equation with real numbers as coefficients. It was some time before anyone realized that geometry still had resources that even algebra did not encompass and would lead to numbers for which pure algebra had no use.

Those resources included the continuity of the geometric line, which turned out to be exactly what was needed for the limiting processes of calculus. It was this property that made it sensible for Euler to talk about the number that we now call e, that is,

$$e = \lim_{n\to\infty}\left(1 + \frac{1}{n}\right)^{n},$$

and the other Euler constant

$$\gamma = \lim_{n\to\infty}\left(1 + \frac{1}{2} + \cdots + \frac{1}{n} - \ln n\right).$$

The intuitive notion of continuity assured mathematicians that there were points on the line, and hence infinite decimal expansions, that must represent these numbers, even though no one would ever know the full expansions. The geometry of the line provided a geometric representation of real numbers and made it possible to reason about them without having to worry about the decimal expansion.

The continuity of the line brought the realization that the real numbers had more to offer than merely convenient representations of the solutions of equations. They could even represent some numbers such as e and γ that had not been found to be solutions of any equations. The line is richer than it needs to be for algebra alone. The concept of a real number allows arithmetic to penetrate into parts of geometry where even algebra cannot go. The sides and diagonals of regular figures such as squares, cubes, pentagons, pyramids, and the like all have ratios that can be represented as the solutions of equations, and hence are algebraic. For example, the diagonal D and side S of a pentagon satisfy $D^2 = S(D + S)$. For a square the relationship is $D^2 = 2S^2$, and for a cube it is $D^2 = 3S^2$. But what about the number we now call π, the ratio of the circumference C of a circle to its diameter D? In the seventeenth century, Leibniz noted that any line that could be constructed using Euclidean methods (straightedge and compass) would have a length that satisfied some equation with rational coefficients. In a number of letters and papers written during the 1670s, Leibniz was the first to contrast what is algebraic (involving polynomials with rational coefficients) with objects that he called analytic or transcendental and the first to suggest that π might be transcendental. In the preface to his pamphlet De quadratura arithmetica circuli (On the Arithmetical Quadrature of the Circle), he explained that at least as a function of a variable, the sine is not a polynomial:

A complete quadrature would be one that is both analytic and linear; that is, it would be constructed by the use of curves whose equations are of [finite] degrees. The illustrious [James] Gregory, in his book On the Exact Quadrature of the Circle, has claimed that this is impossible, but, unless I am mistaken, has given no proof. I still do not see what prevents the circumference itself, or some particular part of it, from being measured [that is, being commensurable with the radius], a part whose arc has a ratio to its sine [half-chord] that can be expressed by an equation of finite degree. But to express the ratio of the arc to the sine in general by an equation of finite degree is impossible, as I shall prove in this little work. [Gerhardt, 1971, Vol. 5, p. 97]

No representation of π as the root of a polynomial with rational coefficients was ever found. This ratio had a long history of numerical approximations from all over the world, but no one ever found any nonidentical equation with rational coefficients satisfied by the circumference and diameter of a circle. The fact that π is transcendental was first proved in 1882 by Ferdinand Lindemann (1852–1939). The complete set of real numbers thus consists of the positive and negative rational numbers, all real roots of equations with integer coefficients (the algebraic numbers), and the transcendental numbers. All transcendental numbers and some algebraic numbers are irrational. Examples of transcendental numbers turned out to be rather difficult to produce. The first well-known¹ number to be proved transcendental was the base of natural logarithms e, and this proof was achieved only in 1873, by the French mathematician Charles Hermite (1822–1901). It is still not known whether the Euler constant γ ≈ 0.57722 is even irrational.

43.1.1 The Arithmetization of the Real Numbers

Not until the nineteenth century, when mathematicians took a retrospective look at the magnificent edifice of calculus that they had created and tried to give it the same degree of logical rigor possessed by algebra and Euclidean geometry, were attempts made to define real numbers arithmetically, without mentioning ratios of lines. One such definition by Richard Dedekind (1831–1916), a professor at the Zürich Polytechnikum, was inspired by a desire for rigor when he began lecturing to students in 1858. He found the rigor he sought without much difficulty, but did not bother to publish what he regarded as mere common sense until 1872, when he wished to publish something in honor of his father. In his book Stetigkeit und irrationale Zahlen (Continuity and Irrational Numbers) he referred to Newton's definition of a real number:

. . . the way in which the irrational numbers are usually introduced is based directly upon the conception of extensive magnitudes—which itself is nowhere carefully defined—and explains number as the result of measuring such a magnitude by another of the same kind. Instead of this I demand that arithmetic shall be developed out of itself.

As Dedekind saw the matter, it was really the totality of rational numbers that defined a ratio of continuous magnitudes. Although one might not be able to say that two continuous quantities a and b had a ratio equal to, or defined by, a ratio m : n of two integers, an inequality such as ma < nb could be interpreted as saying that the real number a : b (whatever it was) was less than the rational number n/m. In fact, that interpretation of the inequality was the basis for the Eudoxan theory of proportion, although neither Eudoxus nor Euclid was able to say precisely what a ratio of two lines is.

Thus a positive real number could be defined as a way of dividing the positive rational numbers into two classes, those that were larger than the number and those that were equal to it or smaller, and every member of the first class was larger than every member of the second class. But, so reasoned Dedekind, once the positive rational numbers have been partitioned in this way, the two classes themselves can be regarded as the number.² They are a well-defined object, and one can define arithmetic operations on such classes so that the resulting system has all the properties we want the real numbers to have, especially the essential one for calculus: continuity. Dedekind claimed that in this way he was able to prove rigorously for the first time that $\sqrt{2}\cdot\sqrt{3} = \sqrt{6}$.³
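The partition idea can be made concrete in a few lines. The following is only a sketch under stated assumptions: a positive real number is represented solely by a test that says whether a positive rational lies in the upper class, and the names `sqrt_cut` and `approximate` are hypothetical, introduced here for illustration. It checks, on a finite grid of rationals, that products taken from the lower classes of √2 and √3 stay in the lower class of √6, using nothing but the rule $(rs)^2 = r^2 s^2$ for rational numbers.

```python
from fractions import Fraction

def sqrt_cut(c):
    """Upper-class test for the positive real sqrt(c):
    a positive rational q is 'larger than the number' when q*q > c."""
    return lambda q: q * q > c

def approximate(in_upper, lo=Fraction(0), hi=Fraction(10), steps=30):
    """Bisect between a rational in the lower class (lo) and one in the
    upper class (hi); only the membership test is consulted."""
    for _ in range(steps):
        mid = (lo + hi) / 2
        if in_upper(mid):
            hi = mid
        else:
            lo = mid
    return lo, hi

upper2, upper3, upper6 = sqrt_cut(2), sqrt_cut(3), sqrt_cut(6)

# Finite check (an illustration, not a proof): every product r*s with r in
# the lower class of sqrt(2) and s in the lower class of sqrt(3) lies in the
# lower class of sqrt(6).
grid = [Fraction(k, 50) for k in range(1, 120)]
lower_products_ok = all(
    not upper6(r * s)
    for r in grid if not upper2(r)
    for s in grid if not upper3(s)
)

lo2, hi2 = approximate(upper2)
lo3, hi3 = approximate(upper3)
print(lower_products_ok, float(lo2 * lo3), float(hi2 * hi3))
```

The rational brackets `lo2 * lo3` and `hi2 * hi3` squeeze the cut for √6 from below and above, which is the arithmetic content of the identity once multiplication of cuts has been defined.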

The practical-minded reader who is content to use approximations will probably be getting somewhat impatient with the discussion at this point and asking if it was really necessary to go to so much trouble to satisfy a pedantic desire for rigor. Such a reader will be in good company. Many prominent mathematicians of the time asked precisely that question. One of them was Rudolf Lipschitz (1832–1903). Lipschitz didn't see what the fuss was about, and he objected to Dedekind's claims of originality (Scharlau, 1986, p. 58). In 1876 he wrote to Dedekind:

I do not deny the validity of your definition, but I am nevertheless of the opinion that it differs only in form, not in substance, from what was done by the ancients. I can only say that I consider the definition given by Euclid . . . to be just as satisfactory as your definition. For that reason, I wish you would drop the claim that such propositions as $\sqrt{2}\cdot\sqrt{3} = \sqrt{6}$ have never been proved. I think the French readers especially will share my conviction that Euclid's book provided necessary and sufficient grounds for proving these things.

Dedekind refused to back down. He replied (Scharlau, 1986, pp. 64–65):

I have never imagined that my concept of the irrational numbers has any particular merit; otherwise I should not have kept it to myself for nearly fourteen years. Quite the reverse, I have always been convinced that any well-educated mathematician who seriously set himself the task of developing this subject rigorously would be bound to succeed. . . . Do you really believe that such a proof can be found in any book? I have searched through a large collection of works from many countries on this point, and what does one find? Nothing but the crudest circular reasoning, to the effect that $\sqrt{a}\cdot\sqrt{b} = \sqrt{ab}$ because $(\sqrt{a}\cdot\sqrt{b})^2 = (\sqrt{a})^2(\sqrt{b})^2 = ab$; not the slightest explanation of how to multiply two irrational numbers. The proposition $(mn)^2 = m^2n^2$, which is proved for rational numbers, is used unthinkingly for irrational numbers. Is it not scandalous that the teaching of mathematics in schools is regarded as a particularly good means to develop the power of reasoning, while no other discipline (for example, grammar) would tolerate such gross offenses against logic for a minute? If one is not to proceed scientifically, or cannot do so for lack of time, one should at least honestly tell the pupil to believe a proposition on the word of the teacher, which the students are willing to do anyway. That is better than destroying the pure, noble instinct for correct proofs by giving spurious ones.

Mathematicians have accepted the need for Dedekind's rigor in the teaching of mathematics majors, although the idea of defining real numbers as partitions of the rational numbers (Dedekind cuts) is no longer the most popular approach to that rigor. More often, students are now given a set of axioms for the real numbers and asked to accept on faith that those axioms are consistent and that they characterize a set that has the properties of a geometric line. Only a few books attempt to start with the rational numbers and construct the real numbers. Those that do tend to follow an alternative approach, defining a real number to be a sequence of rational numbers (more precisely, an equivalence class of such sequences, one of which is the sequence of successive decimal approximations to the number).

43.2 Completeness of the Real Numbers

Dedekind's arithmetization of the real numbers amounted to the statement that the real numbers form a complete metric space. The concept now known as completeness of the real numbers is associated with the Cauchy convergence criterion, which asserts that a sequence of real numbers $\{a_n\}_{n=1}^{\infty}$ converges to some real number a if it is a Cauchy sequence; that is, for every $\varepsilon > 0$ there is an index n such that $|a_n - a_k| < \varepsilon$ for all $k \ge n$. This condition was stated somewhat loosely by Cauchy in his Cours d'analyse, published in 1821, and the proof given there was also somewhat loose. The same criterion had been stated, and for sequences of functions rather than sequences of numbers, a few years earlier by Bernhard Bolzano (1781–1848). In imprecise language, this criterion says that there exists a number that the sequence is getting close to, provided its terms are getting close to one another. The point is that the criterion of getting close to one another makes no reference to anything outside the sequence. Without this criterion, it would presumably be necessary to exhibit the limit explicitly in order to prove that the sequence converges. That might be difficult to do. Indeed, it is difficult in the case of such Dirichlet series as

![]()

whose partial sums get close to one another, but whose sum has never been expressed in finite terms using only known real numbers.
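The phrase "partial sums get close to one another" can be illustrated numerically. The sketch below is an assumption-laden illustration, not a proof: it takes $\sum 1/n^3$ as a sample Dirichlet series (a choice made here) and contrasts it with the harmonic series, whose partial sums do not bunch together. The helper names `partial_sums` and `tail_spread` are hypothetical.

```python
def partial_sums(term, count):
    """Return [S_1, ..., S_count] for the series whose n-th term is term(n)."""
    sums, total = [], 0.0
    for n in range(1, count + 1):
        total += term(n)
        sums.append(total)
    return sums

def tail_spread(sums, n):
    """Largest |S_n - S_k| over the computed indices k >= n (n is 1-based)."""
    return max(abs(sums[n - 1] - s) for s in sums[n - 1:])

cubes = partial_sums(lambda n: 1.0 / n**3, 400)
harmonic = partial_sums(lambda n: 1.0 / n, 400)

# The spread past index 100 is tiny for 1/n^3 but larger than 1 for 1/n.
spread_cubes = tail_spread(cubes, 100)
spread_harmonic = tail_spread(harmonic, 100)
print(spread_cubes, spread_harmonic)
```

A finite computation can only suggest the Cauchy property, since the criterion quantifies over all later indices; the comparison with the harmonic series shows what failure looks like over the same range.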

43.3 Uniform Convergence and Continuity

Cauchy was not aware at first of any need to make the distinction between pointwise and uniform convergence, and he even claimed that the sum of a series of continuous functions would be continuous, a claim contradicted by Abel, as we have seen. The distinction is a subtle one. It is all too easy not to notice whether choosing n large enough to get a good approximation when $f_n(x)$ converges to $f(x)$ requires one to take account of which x is under consideration. That point needed to be stated precisely. The first clear statement of it is due to Philipp Ludwig von Seidel (1821–1896), a professor at Munich, who in 1847 studied the examples of Dirichlet and Abel, coming to the following conclusion:

When one begins from the certainty thus obtained that the proposition cannot be generally valid, then its proof must basically lie in some still hidden supposition. When this is subject to a precise analysis, then it is not difficult to discover the hidden hypothesis. One can then reason backwards that this [hypothesis] cannot occur [be fulfilled] with series that represent discontinuous functions. [Quoted in Bottazzini, 1986, p. 202]

In order to reason confidently about continuity, derivatives, and integrals, mathematicians began restricting themselves to cases where the series converged uniformly, that is, given a positive number ε, one could find an index N such that $|f_n(x) - f(x)| < \varepsilon$ for all $n > N$ and all x. Weierstrass, in particular, provided a famous theorem known as the M-test for uniform convergence of a series. But, although the M-test is certainly valuable in dealing with power series, uniform convergence in general is too severe a restriction. The trigonometric series exhibited by Abel, for example, represented a discontinuous function as the sum of a series of continuous functions and therefore did not converge uniformly. Yet it could be integrated term by term. One could provide many examples of series of continuous functions that converged to a continuous function but not uniformly. Weaker conditions were needed that would justify the operations rigorously without restricting their applicability too strongly.
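The dependence of N on x can be seen in a standard classroom example, which is an assumption of this sketch and not one of the series discussed in the text: $f_n(x) = x^n$ on [0, 1]. Each $f_n$ is continuous, the pointwise limit is 0 for x < 1 and 1 at x = 1, and for every n there is a point where $f_n$ still differs from the limit by 1/2. The function names are hypothetical.

```python
def f_n(n, x):
    """The n-th function of the sequence, f_n(x) = x**n."""
    return x ** n

def f_limit(x):
    """Pointwise limit on [0, 1]: discontinuous at x = 1."""
    return 1.0 if x == 1.0 else 0.0

def witness(n):
    """A point x_n in (0, 1) chosen so that f_n(x_n) = 1/2."""
    return 0.5 ** (1.0 / n)

indices = (1, 10, 100, 1000)

# At a fixed x the values shrink to 0 (pointwise convergence) ...
pointwise = [f_n(n, 0.9) for n in indices]

# ... but the gap at the moving point x_n never drops below about 1/2,
# so no single N works for all x when epsilon = 1/2.
gaps = [abs(f_n(n, witness(n)) - f_limit(witness(n))) for n in indices]
print(pointwise, gaps)
```

Because the limit function is discontinuous while every $f_n$ is continuous, the failure of uniform convergence here is exactly the hidden hypothesis that Seidel identified.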

43.4 General Integrals and Discontinuous Functions

The search for less restrictive hypotheses and the consideration of more general figures on a line than just points and intervals led to more general notions of length, area, and integral, allowing more general functions to be integrated. Analysts began generalizing the integral beyond the refinements introduced by Riemann. Foundational problems also added urgency to this search. For example, in 1881, Vito Volterra (1860–1940) gave an example of a continuous function having a derivative at every point, but whose derivative was not Riemann integrable. What could the fundamental theorem of calculus mean for this derivative, which had an antiderivative but no integral, as integrals were then understood?

New integrals were created by the Baltic German mathematician Axel Harnack (1851–1888), by the French mathematicians Émile Borel (1871–1956), Henri Lebesgue (1875–1941), and Arnaud Denjoy (1884–1974), and by the German mathematician Oskar Perron (1880–1975). By far the most influential of these was the Lebesgue integral, which was developed between 1899 and 1902. This integral was to have profound influence in the area of probability, due to its use by Borel, and in trigonometric series representations, an application that Lebesgue himself developed. Lebesgue justified his more general integral in the preface to a 1904 monograph in which he expounded it, saying,

[I]f we wished to limit ourselves always to these good [that is, smooth] functions, we would have to give up on the solution of a number of easily stated problems that have been open for a long time. It was the solution of these problems, rather than a love of complications, that caused me to introduce in this book a definition of the integral that is more general than that of Riemann and contains the latter as a special case.

Despite its complexity (to develop it with proofs takes four or five times as long as developing the Riemann integral), the Lebesgue integral was included in textbooks as early as 1907: for example, Theory of Functions of a Real Variable, by E. W. Hobson (1856–1933). Its chief attraction was the greater generality of the conditions under which it allowed termwise integration. For example, one of the main theorems of this theory is the Lebesgue dominated convergence theorem, which states that if $f_n(x)$ are measurable functions⁴ such that $|f_n(x)| \le g(x)$, where $g(x)$ is integrable (in the sense of Lebesgue), and $f_n(x) \to f(x)$ pointwise, then $\int f_n(x)\,dx \to \int f(x)\,dx$. (Part of the theorem is that $f(x)$ is integrable.)
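A simple instance may help fix the hypotheses. The example is chosen here for illustration and is not taken from the text: on [0, 1] take $f_n(x) = x^n$ with the constant dominating function $g(x) = 1$. The convergence is not uniform, yet the integrals converge to the integral of the limit, as the theorem predicts.

```latex
\begin{align*}
  |f_n(x)| &= x^n \le g(x) = 1 \quad (0 \le x \le 1),
  & \int_0^1 g(x)\,dx &= 1 < \infty, \\
  f_n(x) &\to f(x) =
    \begin{cases}
      0, & 0 \le x < 1, \\
      1, & x = 1,
    \end{cases}
  & \int_0^1 f_n(x)\,dx &= \frac{1}{n+1} \longrightarrow 0 = \int_0^1 f(x)\,dx .
\end{align*}
```

The limit function differs from 0 only at the single point x = 1, a set of measure zero, so its integral is 0.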

Following the typical pattern of development in real analysis, the Lebesgue integral soon generated new questions. The Hungarian mathematician Frigyes Riesz (1880–1956) introduced the classes now known as $L^p$-spaces, the spaces of measurable functions f for which $|f|^p$ is Lebesgue integrable, $p > 0$. (The space $L^\infty$ consists of functions that are bounded on a set whose complement has measure zero.) How the Fourier series and integrals of functions in these spaces behave became a matter of great interest, and a number of questions were raised. For example, in his 1915 dissertation at the University of Moscow, Nikolai Nikolaevich Luzin (1883–1950) posed the conjecture that the Fourier series of a (Lebesgue-) square-integrable function converges except on a set of measure zero. Fifty years elapsed before this conjecture was proved by the Swedish mathematician Lennart Carleson (b. 1928). Because a Riemann-integrable function is square-integrable in the sense of Lebesgue, this theorem applies in particular to such functions. Luzin's student Andrei Nikolaevich Kolmogorov (1903–1987) showed that the Fourier series of a function that is merely Lebesgue-integrable may diverge at every point.

43.5 The Abstract and the Concrete

The increasing generality allowed by the notation y = f(x) threatened to carry mathematics off into stratospheric heights of abstraction. Although the mathematical physicist Ampère (1775–1836) had tried to show that a continuous function is differentiable at most points, the attempt was doomed to failure. Bolzano constructed a “sawtooth” function in 1817 that was continuous, yet had no derivative at any point. Weierstrass later used an absolutely convergent trigonometric series to achieve the same result,⁵ and a young Italian mathematician, Salvatore Pincherle (1853–1936), who took Weierstrass' course in 1877–1878, wrote a treatise in 1880 in which he gave a very simple example of such a function (Bottazzini, 1986, p. 286):

![]()

Volterra's example of a continuous function whose derivative was not (Riemann) integrable, together with the examples of continuous functions having no derivative at any point, naturally cast some doubt on the usefulness of the abstract concept of continuity and even the abstract concept of a function. Besides the construction of more general integrals and the consequent ability to “measure” more complicated geometric figures, it was necessary to investigate differentiation in more detail as well.

43.5.1 Absolute Continuity

The secret of that quest turned out to be not continuity, but monotonicity. A continuous function may fail to have a derivative, but in order to fail, it must oscillate very wildly, as the examples of Bolzano and Weierstrass did. A function that did not oscillate, or oscillated only a finite total amount, necessarily had a derivative except on a set of measure zero. The ultimate result in this direction was achieved by Lebesgue, who showed that a monotonic function has a derivative on a set whose complement has measure zero. Such a function might or might not be the integral of its derivative, as the fundamental theorem of calculus states. In 1902 Lebesgue gave necessary and sufficient conditions for the fundamental theorem of calculus to hold; a function that satisfies these conditions, and is consequently the integral of its derivative, is called absolutely continuous.

43.5.2 Taming the Abstract

It had been known at least since the time of Lagrange that any finite set of n data points $(x_k, y_k)$, $k = 1, \ldots, n$, with the $x_k$ all different, could be fitted perfectly with a polynomial of degree at most $n - 1$. Such a polynomial might (indeed, probably would) oscillate wildly in the intervals between the data points. Weierstrass showed in 1885 that any continuous function, no matter how abstract, could be uniformly approximated by a polynomial over any bounded interval [a, b]. Since there is always some observational error in any set of data, this result meant that polynomials could be used in both practical and theoretical ways, to fit data, and to establish general theorems about continuous functions. Weierstrass also proved a second version of the theorem, for periodic functions, in which he showed that for these functions the polynomial could be replaced by a finite sum of sines and cosines. This connection to the classical functions freed mathematicians to use the new abstract functions, confident that in applications they could be replaced by computable functions.
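Both statements can be tried out in a short sketch. The first function builds the interpolating polynomial through given points by Lagrange's formula. The second uses Bernstein polynomials, a later construction (due to Bernstein, not the method of Weierstrass's own proof) that is adopted here only as a convenient way to watch uniform approximation on [0, 1]. The sample data and the test function |x − 1/2| are assumptions made for illustration.

```python
from fractions import Fraction
from math import comb

def lagrange(points):
    """Return p with p(x_k) = y_k for every (x_k, y_k); the x_k must be
    distinct, and p has degree at most len(points) - 1."""
    def p(x):
        total = Fraction(0)
        for i, (xi, yi) in enumerate(points):
            weight = Fraction(1)
            for j, (xj, _) in enumerate(points):
                if j != i:
                    weight *= Fraction(x - xj) / Fraction(xi - xj)
            total += yi * weight
        return total
    return p

def bernstein(f, n):
    """Bernstein polynomial of degree n for a function f on [0, 1]."""
    def b(x):
        return sum(f(k / n) * comb(n, k) * x**k * (1 - x)**(n - k)
                   for k in range(n + 1))
    return b

def max_error(f, g, samples=201):
    """Largest |f - g| over an evenly spaced grid on [0, 1]."""
    grid = [i / (samples - 1) for i in range(samples)]
    return max(abs(f(x) - g(x)) for x in grid)

# Hypothetical data: four points, so a cubic fits them exactly.
data = [(Fraction(0), Fraction(1)), (Fraction(1), Fraction(3)),
        (Fraction(2), Fraction(2)), (Fraction(4), Fraction(5))]
p = lagrange(data)

# A continuous function with a corner, approximated with rising degree.
def kink(x):
    return abs(x - 0.5)

errors = [max_error(kink, bernstein(kink, n)) for n in (4, 16, 64)]
print([p(x) == y for x, y in data], errors)
```

Exact rational arithmetic is used for the interpolation so that "fitted perfectly" can be checked with equality; the Bernstein errors are measured on a finite grid and shrink slowly, which is typical for a function with a corner.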

Weierstrass lived before the invention of the new abstract integrals mentioned above, although he did encourage the development of the abstract set theory of Georg Cantor, which provided the language in which these integrals were formulated. With the development of the Lebesgue integral, a new category of functions arose, the measurable functions. These are functions f(x) such that the set of x for which f(x) > c always has a meaningful measure, although it need not be a geometrically simple set, as it is in the case of continuous functions. It appeared that Weierstrass' work needed to be repeated, since his approximation theorem did not apply to measurable functions. In his 1915 dissertation, Luzin produced two beautiful theorems in this direction. The first was what is commonly called by his name nowadays, the theorem that for every measurable function f(x) and every ε > 0 there is a continuous function g(x) such that g(x) ≠ f(x) only on a set of measure less than ε. As a consequence of this result and Weierstrass' approximation theorem, it followed that every measurable function is the limit of a sequence of polynomials on a set whose complement has measure zero. Luzin's second theorem was that every finite-valued measurable function is the derivative of a continuous function at the points of a set whose complement has measure zero. He was able to use this result to show that any prescribed set of measurable boundary values on the disk could be the boundary values of a harmonic function.

With the Weierstrass approximation theorem and theorems like those of Luzin, modern analysis found some anchor in the concrete analysis of the “classical” period that ran from 1700 to 1850. But the striving for generality and freedom of operation still led to the invocation of some strong principles of inference in the context of set theory. By mid-twentieth century mathematicians were accustomed to proving concrete facts using abstract techniques. To take just one example, it can be proved that some differential equations have a solution because a contraction mapping of a complete metric space must have a fixed point. Classical mathematicians would have found this proof difficult to accept, and many twentieth-century mathematicians have preferred to write in “constructivist” ways that avoid invoking the abstract “existence” of a mathematical object that cannot be displayed explicitly. But most mathematicians are now comfortable with such reasoning.
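The fixed-point argument mentioned above has a constructive side: iterating a contraction converges to its fixed point. The sketch below is a scalar illustration only, assuming the map T(x) = cos x on [0, 1], where |T'(x)| ≤ sin 1 < 1; it is not the function-space setting used for differential equations, and the name `fixed_point` is hypothetical.

```python
import math

def fixed_point(T, x0, tol=1e-12, max_iter=10_000):
    """Iterate x -> T(x) from x0 until successive values differ by < tol."""
    x = x0
    for _ in range(max_iter):
        nxt = T(x)
        if abs(nxt - x) < tol:
            return nxt
        x = nxt
    raise RuntimeError("no convergence within max_iter iterations")

# cos maps [0, 1] into itself and contracts distances there.
root = fixed_point(math.cos, 1.0)
print(root, math.cos(root))
```

For a differential equation the same scheme is applied to an integral operator acting on a complete space of functions, and completeness is what guarantees that the limit of the iterates exists in that space.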

43.6 Discontinuity as a Positive Property

The Weierstrass approximation theorems imply that the property of being the limit of a sequence of continuous functions is no more general than the property of being the limit of a sequence of polynomials or the sum of a trigonometric series. That fact raises an obvious question: What kind of function is the limit of a sequence of continuous functions? Du Bois-Reymond had shown that it can be discontinuous on a set that is, as we now say, dense. But can it, for example, be discontinuous at every point? That was one of the questions that interested René-Louis Baire (1874–1932). If one thinks of discontinuity as simply the absence of continuity, classifying mathematical functions as continuous or discontinuous seems to make no more sense than classifying mammals as cats or noncats. Baire, however, looked at the matter differently. In his 1905 Leçons sur les fonctions discontinues (Lectures on Discontinuous Functions) he wrote

Is it not the duty of the mathematician to begin by studying in the abstract the relations between these two concepts of continuity and discontinuity, which, while mutually opposite, are intimately connected?

Strange as this view may seem at first, we may come to have some sympathy for it if we think of the dichotomy between the continuous and the discrete, that is, between geometry and arithmetic. At any rate, to a large number of mathematicians at the turn of the twentieth century, it did not seem strange. The Moscow mathematician Nikolai Vasilevich Bugaev (1837–1903, father of the writer Andrei Belyi) was a philosophically inclined scholar who thought it possible to establish two parallel theories, one for continuous functions, the other for discontinuous functions. He called the latter theory arithmology to emphasize its arithmetic character. There is at least enough of a superficial parallel between integrals and infinite series and between continuous and discrete probability distributions (another area in which Russia has produced some of the world's leaders) to make such a program plausible. It is partly Bugaev's influence that caused works on set theory to be translated into Russian during the first decade of the twentieth century and brought the Moscow mathematicians Luzin and Dmitrii Fyodorovich Egorov (1869–1931) and their students to prominence in the area of measure theory, integration, and real analysis.

Baire's monograph was single-mindedly dedicated to the pursuit of one goal: to give a necessary and sufficient condition for a function to be the pointwise limit of a sequence of continuous functions. He found the condition, building on earlier ideas introduced by Hermann Hankel (1839–1873): The necessary and sufficient condition is that the discontinuities of the function form a set of first category. A set is of first category if it is the union of a sequence of sets $A_k$ such that every interval (a, b) contains an interval (c, d) disjoint from $A_k$. All other sets are of second category.⁶ Although interest in the specific problems that inspired Baire has waned, the importance of his work has not. The subject of functional analysis rests on three main theorems. Two of them are direct consequences of what is called the Baire category theorem, which asserts that a complete metric space is of second category as a subset of itself. These fundamental pillars of functional analysis, the closed graph theorem and the open mapping theorem, cannot be proved without the Baire category theorem. Here we have an example of an unintended and fortuitous consequence, in which a result turned out to be useful in an area not considered by its discoverer.

Problems and Questions

Mathematical Problems

43.1. On the basis of the geometric series

$$\frac{1}{1+x} = 1 - x + x^2 - x^3 + \cdots,$$

Euler was willing to say that $1 - 5 + 25 - 125 + \cdots = \frac{1}{6}$. Later analysts rejected this interpretation of infinite series and confined themselves to series that converge in the ordinary sense. (Such a series cannot converge unless its general term tends to zero.) Kurt Hensel (1861–1941) showed in 1905 that it is possible to define a notion of distance, the p-adic metric, by saying that an integer is close to zero if it is divisible by a large power of the prime number p (in the present case p = 5). Specifically, the distance from a nonzero integer m to 0 is given by $d(m, 0) = 5^{-k}$, where $5^k$ divides m but $5^{k+1}$ does not divide m. The distance between 0 and the rational number r = m/n is then by definition d(m, 0)/d(n, 0). Show that d(1, 0) = 1. Show that, if the distance between two rational numbers r and s is defined to be d(r − s, 0), then in fact the series just mentioned does converge to $\frac{1}{6}$ in the sense that $d(S_n, \frac{1}{6}) \to 0$, where $S_n$ is the nth partial sum.

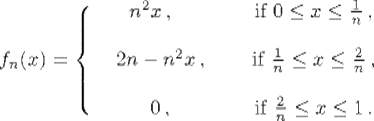
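The 5-adic distance in Problem 43.1 can be explored numerically before a proof is attempted. The following is a minimal sketch, not a solution. It assumes that the series in question is the geometric series with ratio −5, that is, 1 − 5 + 25 − 125 + ⋯, with formal sum 1/6. The function names `padic_distance` and `partial_sum` are our own.

```python
from fractions import Fraction


def padic_distance(r, p=5):
    """Return d(r, 0) = p**(-k), where k is the exact power of p dividing r.

    For a rational r = m/n this equals d(m, 0) / d(n, 0), so k is negative
    when p divides the denominator. By convention d(0, 0) = 0.
    """
    r = Fraction(r)
    if r == 0:
        return Fraction(0)
    num, den = r.numerator, r.denominator
    k = 0
    while num % p == 0:      # powers of p in the numerator shrink the distance
        num //= p
        k += 1
    while den % p == 0:      # powers of p in the denominator enlarge it
        den //= p
        k -= 1
    return Fraction(1, p) ** k


def partial_sum(n, ratio=-5):
    """Sum of the first n terms 1 + ratio + ratio**2 + ... (assumed series)."""
    return sum(Fraction(ratio) ** j for j in range(n))


if __name__ == "__main__":
    print("d(1, 0) =", padic_distance(1))
    for n in range(1, 7):
        gap = padic_distance(partial_sum(n) - Fraction(1, 6))
        print(f"n = {n}: d(S_n, 1/6) = {gap}")
```

Exact rational arithmetic (`Fraction`) is used so that divisibility by 5 is tested on integers rather than on floating-point approximations. The printed distances shrink by a factor of 5 at each step, which is the pattern the problem asks the reader to prove.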

43.2. Consider the functions

Show that $f_n(x) \to 0$ as $n \to \infty$ for each x satisfying 0 ≤ x ≤ 1, but ![]() for all n. Why does this sequence not satisfy the hypotheses of the Lebesgue dominated convergence theorem?

43.3. Show that on a finite interval [a, b] the space $L^p([a, b])$ is contained in $L^q([a, b])$ if q < p, while the opposite is true for the $\ell^p$ spaces of sequences. (The space $\ell^p$ consists of all sequences $\{a_n\}_{n=1}^{\infty}$ such that $\sum_{n=1}^{\infty} |a_n|^p < \infty$.) Which, if either, of these statements is true for the interval [0, ∞)?

Historical Questions

43.4. Why was the concept of uniform convergence important in the application of the principles of analysis to infinite series and integrals? Why was it too restrictive for the needs of modern analysis?

43.5. How does the Lebesgue integral differ from the Riemann integral, and why is the latter inadequate for the needs of modern analysis?

43.6. What is the Baire category theorem, and why is it important in modern analysis?

Questions for Reflection

43.7. What are the advantages, if any, of building a theory by starting with abstract definitions, then later proving a structure theorem showing that the abstract objects so defined are really composed of familiar simple objects? (Recall that Cauchy preferred to begin his discussion of analytic functions with the abstract property of differentiability, while Weierstrass preferred the more concrete definition of an analytic function as a power series. But it turns out that the two classes are the same.)

43.8. Why did the naive application of finite rules to infinite series lead to paradoxes?

43.9. One consequence of the Lebesgue dominated convergence theorem is that if a uniformly bounded sequence of continuous functions $f_n(x)$ tends to 0 at each point $x \in [0, 1]$, then $\lim_{n\to\infty} \int_0^1 f_n(x)\,dx = 0$. This theorem is quite difficult to prove in the context of the Riemann integral, but becomes a trivial consequence of a basic result when the integrals are interpreted as Lebesgue integrals. What advantage does this fact point to when the Riemann integral is compared with the Lebesgue integral?

Notes

1. Joseph Liouville had shown how to construct real numbers that are transcendental as early as 1844, using infinite series and continued fractions, but none of the numbers so constructed had ever arisen naturally.

2. Actually, since the two classes determine each other, one of them, say the one consisting of larger numbers, can be taken as the definition of the real number. Thus $\sqrt{2}$ can be defined as the set of all positive rational numbers r such that $r^2 > 2$.

3. In his paper (1992) David Fowler (1937–2004) investigated a number of approaches to the arithmetization of the real numbers and showed how the specific equation $\sqrt{2} \cdot \sqrt{3} = \sqrt{6}$ could have been proved geometrically and also how difficult this proof would have been using many other natural approaches.

4. See below for the definition of measurable functions.

5. This example was communicated by his student Paul Du Bois-Reymond (1831–1889) in 1875. The following year Du Bois-Reymond constructed a continuous periodic function whose Fourier series failed to converge at a set of points that came arbitrarily close to every point.

6. The sets $A_k$ are said to be nowhere dense. In his famous treatise Mengenlehre (Set Theory), Felix Hausdorff (1868–1942) criticized the phrase “of first category” as “colorless.”