Methods of Mathematics Applied to Calculus, Probability, and Statistics (1985)

Part I. ALGEBRA AND ANALYTIC GEOMETRY

Chapter 2. The Integers

2.1 THE INTEGERS

There is a famous saying due to Kronecker (1823–1891):

God made the integers, man did the rest.

So fundamental are the integers that whenever you see a discussion of communication with an alien intelligence it is always assumed that they too have the integers, at least the positive ones. Counting is so basic that a high level of technology seems impossible without the integers. Counting is usually taught as 1, 2, 3, … , but experience is beginning to favor 0, 1, 2, … as the preferable way. You will see numerous examples of this change as we go along.

The most startling aspect of the positive integers is that there is no last integer. It takes time to grasp the implications of this fact. Young children often fail to appreciate the logic that there can be no largest integer, because, if there were, then adding 1 to it would make a still larger integer. A little later in life they are often fascinated by this observation.

It is often said that the integers approach infinity, which is a simple way of describing the process of becoming arbitrarily large, that no matter how large an integer you mention, I can always name a larger one. By viewing it as a process, you can avoid the question of the set of all integers and escape such questions are “Is there a largest integer and if so what is its name?”

There seems to be a significant psychological (and logical) difference between the simple process of admitting that there is always a next integer, and the assumption that there exists a set consisting of all the integers. The first is much closer to experience, and the latter raises the question of how anyone could form the entire set of all positive integers.

Between these two concepts, of process and set, lies the concept of the class of all positive integers. For a class you are only required to decide if a given object is or is not in the class; you are not asked to form the entire set. For example, the class of even integers seems to be a reasonable idea. The concept of class seems to be closer to most people’s intuitions than is the concept of an infinite set.

The fact that there is no largest integer produces some rather surprising observations. Long ago, Galileo (1564–1642) observed that there are as many integers as there are squares of integers, since we clearly have a term-by-term correspondence between the following two lines;

![]()

The three dots imply that the sequence is indefinitely extended. There is a one-to-one correspondence between the entries in the two lines of numbers. Using this method of comparing, neither row can have more numbers than the other row; both have the same number of numbers.

The power of a simple contradiction is very great in mathematics. For example, using only the tool of contradiction, Euclid (flourished about 300 B.C.) proved that there is no largest prime number (a number with no factors other than itself and 1). He reasoned as follows. Suppose there were a largest prime number. Then let all the prime numbers be labeled in increasing order as p1 = 2, p2 = 3, p3 = 5, … , on up to pn, where pn is the assumed largest prime number. Now consider the number

N = (p1p2 … pn) + 1

(where the three dots represent the missing prime numbers). Since no integer (other than 1) can divide each of two consecutive integers, the number N cannot be divided by p1 or p2, … , or pn. Therefore, N is either a prime number larger than pn or else is composed of factors all of which are larger than pn. In either case we have shown that there exists a larger prime number, a contradiction. Thus Euclid concluded that there is no largest prime number; there are an infinite number of prime numbers.

Much of mathematics is involved with the infinite. D. H. Lehmer (1905–) says somewhere that if a result is to be called a theorem in mathematics then it must involve an infinite number of cases. For example, the statement that

1 + 2 + 3 + 4 = 10

cannot be called a theorem. But the statement that

![]()

is true for all integers n is a theorem. Thus, somehow, in mathematics we must learn to cope with an infinite number of cases. We have already seen that there is no largest prime number. As another example, consider the process of getting more and more accurate approximations to the number pi, π = 3.14159265358979 …. We can focus on the process of getting more and more digits, but we are soon compelled to think about the existence of the number itself, which is represented by all its digits (although there is no last digit).

No one has any direct experience with the actual infinite. Indeed, it is currently believed that the whole universe is finite, and that even space is finite. But whether the universe is finite or not, within the framework of current astronomy and physics, there is a sphere, drawn with you as the center, of say 100-light-year radius (the distance light travels in 100 years) such that anything now outside this sphere cannot affect your life for the next 100 years. Thus the physical universe you can hope to experience is bounded and finite.

But the concept of the infinite is central to much of mathematics. Allowing no integers beyond some point would be very awkward in practice. Infinity may be only an idealization of our experience, but it is a very useful idealization. Many things are much easier to think about and handle in our minds when we allow the concept of infinity. The basic idea comes from the experience of “There is always a next integer.” But how we get to an abstract idea needs to be watched so that we understand both its usefulness and the dangers of believing too much in the results later obtained. Often it is sufficient to admit only the process, but occasionally we have to deal with the infinite set itself.

The concept of the number zero is especially difficult at first. The idea that something (the symbol 0) can stand for nothing (no things) seems a bit paradoxical to the untrained mind. It also takes experience to avoid the logical troubles with the two concepts, nothing and the symbol for nothing. Often the symbol ![]() (resembling φ, the Greek lowercase phi) is used to denote the empty set just to avoid this confusion. The symbol 0 is something and must be treated accordingly. We must be careful to distinguish between the symbol and its meaning.

(resembling φ, the Greek lowercase phi) is used to denote the empty set just to avoid this confusion. The symbol 0 is something and must be treated accordingly. We must be careful to distinguish between the symbol and its meaning.

When you include the negative integers, then there is no first (least) integer. This infinite regression is also very difficult to grasp. Many of the ancient myths that discuss the creation of the world have the world floating in a sea, resting on the back of an elephant, or on a tortoise that swims in a sea, or some such explanation for what holds the world up. The fact that the myths have come down to us in this form indicates that neither the audience nor the speakers themselves often asked, “And on what does that rest?” It takes some sophistication to recognize the infinite regression and to appreciate that there is neither a first nor a last integer.

2.2 ON PROVING THEOREMS

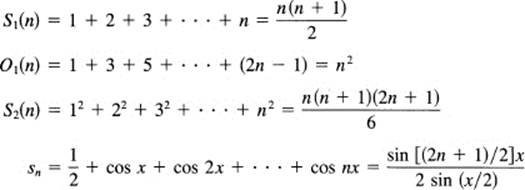

Many results in mathematics involve a potentially infinite number of cases. As examples, consider the following equations, which, because each depends on an arbitrary integer n, are true for all positive integers n.

The three dots in each equation are called an ellipsis (ellipsis means leaving out, omitting), meaning that we have not written the obvious terms. When n is very small, we have sometimes written too many terms. In particular, in the first equation if n = 2, then we have only

![]()

The four expressions on the left sides of the equations are functions whose values are computed only for positive integers; they are functions over the (class of) positive integers. However, many times it is very convenient to evaluate them for the integer 0, often meaning no terms in the sums. Usually the value is then 0, the sum of no terms. However, for the fourth equation the sum is ![]() when n = 0. Furthermore, the fourth statement depends on an arbitrary x. It is a very strong statement; for all positive integers n, and for all real x, the left-hand side equals the right-hand side of the equation. Occasionally, we will want to extend a function to the negative integers, but the above examples are not such cases.

when n = 0. Furthermore, the fourth statement depends on an arbitrary x. It is a very strong statement; for all positive integers n, and for all real x, the left-hand side equals the right-hand side of the equation. Occasionally, we will want to extend a function to the negative integers, but the above examples are not such cases.

How could we ever hope to prove that such formulas are true? We cannot do this by testing them on any finite number of cases as the following examples show.

Example 2.2-1

A formula for primes. Consider the following well-known formula:

f(n) = n2 – n + 41

Suppose it is claimed that for any positive (or possibly 0) integer this formula gives a prime number (a number having no factors other than 1 and itself). Well, we start with n = 0. We get 41, which is a prime. Next we try n = 1, and again get the number 41. Next we make a short table

|

n = 2 |

43 |

|

n = 3 |

47 |

|

n = 4 |

53 |

|

n = 5 |

61 |

|

n = 6 |

71 |

|

… |

… |

|

n = 40 |

1601 |

and by some careful checking we decide that all the entires in the table are indeed primes.

We have verified a formula for 41 consecutive cases; are these cases enough to prove that the formula always gives primes? No, because the very next case, n = 41, clearly gives a composite number (a composite number is one that has factors other than 1 and itself): 4l × 41 = 412, which is not a prime. Thus testing any finite number of cases is not enough to prove that a theorem in mathematics is true for all integers. On the other hand, in science we must always stop after a finite number of experiments and simply assert the universal law. In both mathematics and science, one counterexample can be enough to disprove a conjecture.

Example 2.2-2

An obvious example of the same thing is the function

f(n) = n(n – l)(n – 2) … (n – k)

This expression in the variable n vanishes for n = 0, 1, 2, … , k and for no other cases. Thus k + 1 verifications that a function is zero do not prove that it is always zero. This function could be added to any other function g(n) to get the sum f(n) + g(n), and you would get the same values for both g(n) and f(n) + g(n) for the first k + 1 values, and then the two would differ.

Example 2.2-3

The purpose of this example is to show you the triviality of making up such examples as the above. Suppose, to be specific, you want the first million cases to be true, but no others. We have, for the million cases n = 0, 1, … , 999,999, the following obvious theorem:

All the nonnegative integers require less than 7 decimal digits to represent them (in decimal form).

Even a child can see the falsity of the theorem and generalize to the futility of using any finite number of true cases to prove such a theorem.

Many methods for proving statements involve a potentially infinite number of cases. The best known method is called mathematical induction, which we take up in the next section.

EXERCISES 2.2

1.How far does the formula f(n) = n2 – 79n + 1601 generate primes? Ans.: It fails at n = 80 since f(80) = 412.

2.Prove that the square of an odd integer is an odd integer. Hint: Write the odd number as 2k + 1.

3.Create your own example of a large number of true cases but that are not universally true.

4.Extend the formula n2 – n + 41 to negative integers.

5.Is it true that every positive integer is either a prime or a composite number?

6.Do you think that –7 should be called a prime? Why?

2.3 MATHEMATICAL INDUCTION

How can we hope to prove that some statement is true for all positive integers (and possibly 0, too)? Certainly not by testing a finite number of cases. But suppose we can show that if we take any one arbitrary case as being true then the next case must also be true. This is like a string of dominos arranged so that each in falling down knocks over the next. Then it would only remain to show that the first case was true (tipped over). Can we do this sort of thing in mathematics? As an illustration that we can, consider the following example.

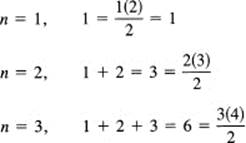

Example 2.3-1

Sum of the first n integers. The first formula in the last section states that

![]()

We first, prudently, check the first few cases.

Now we use the falling domino plan. We assume that the formula is true for the mth case; that is, the following equation is true:

![]()

To advance the left-hand side to the next case, we must (for this problem) add m + 1 to both sides (thus keeping the equality):

![]()

The left-hand side is now the left-hand side of (2.3-1) for the next case n = (m + 1). How about the right-hand side? We can factor out the m + 1 from each term on the right-hand side and get

![]()

This can now be seen to be the right-hand side of the original equation (2.3-1) with n replaced by m + 1. Thus from the statement for m we have derived the statement for m + 1; we have successfully done the domino trick. Since we have already checked the formula for the first case, n = 1, we conclude that the formula is true for all positive integers n.

This method of mathematical induction is very important and has many variations. One variation is to introduce a slight change that sometimes makes the algebra significantly easier (because the target formula is simpler); we assume the truth of the (m – 1)th case and derive from it the truth of the mth case (instead of going from m to m + 1). This is one of the many “tricks of the trade” that the experts all know but that are seldom explicitly stated.

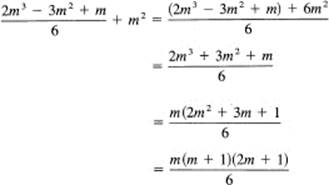

Example 2.3-2

Sum of the squares of the integers. We will prove the third equation in the previous section,

![]()

First we must verify it for some particular case, say n = 1.

![]()

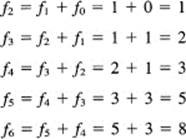

With experience we might have noted that the trivial case n = 0 is a simpler basis for the induction. To advance from the (m – 1)th case to the mth case, we need, for this problem, to add the quantity m2 to both sides of the (m – 1)th case (put n = m – 1 in Equation (2.3-2) and then add m2to both sides). We get

![]()

which is the proper left-hand side of (2.3-2) for the mth case. To get the proper form on the right-hand side, we multiply out the parentheses on the right-hand side and rearrange into the appropriate form

which is the required form (n = m) for the right-hand side of (2.3-2).

Sometimes when we apply mathematical induction we need more than one starting case. We can also abbreviate the steps written down in the proof by eliding (omitting) the formality “assume it is true for m.”

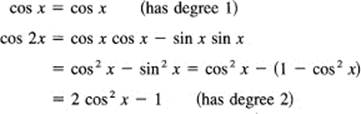

Example 2.3-3

Cos nx is a polynomial in cos x. Suppose we wish to prove that

cos nx = Pn (cos x)

where Pn(cos x) is a polynomial (many terms, “poly” means many) of degree n in the variable cos x. Notice that we are being rather abstract. We are not going to produce the explicit polynomial; we are only going to show that one exists. This is part of the plan to force you to learn to handle abstraction; we are deliberately withholding the concrete expression.

To carry out the proof, we need a particular trigonometric identity that is easy to derive from the basic addition formulas (16.1-7) and (16.1-6)

cos (n + 1)x = cos nx cos x – sin nx sin x

cos (n – 1)x = cos nx cos x + sin nx sin x

Adding these two identities causes the sine terms to cancel, and then transposing one term gives us

![]()

Suppose, now, that both cos nx and cos (n – 1)x were polynomials in cos x of degrees n and n – 1, respectively. (Notice that we have not bothered to introduce the “dummy letter” m but assume that the process is sufficiently familiar that you can follow the argument in the abbreviated form.) The reasoning goes as follows: since cos nx is a polynomial of degree n in cos x and this is, by the identity (2.3-3), to be multiplied by 2 cos x, it follows that the product is now of degree n + 1 in cos x. The second term, cos (n – 1)x, adds a polynomial of degree n – 1 in cos x and cannot affect the degree of the sum. Thus from our identity we see that cos (n + 1)x is a polynomial of degree n + 1 in cos x. But to get to the case n + 1 we need both the case n and the case n – 1. Therefore, this induction proof needs two consecutive starting cases. For n = 1 and n = 2, we have [again used (16.1-7)]

We might have used the simpler cases n = 0 and n = 1 as a basis for the induction since

cos 0 = 1 (has degree 0)

Occasionally, in going to the next case it is necessary to assume the truth of all the preceding cases, but this does not change the argument very much.

There is a more sophisticated argument for the basis for the mathematical induction proof that some people find more convincing than the domino argument. This argument goes as follows. Suppose the statement is not true for every positive (or every nonnegative) integer. If so, then there are some false cases. Now consider the set for which the statement is false. If this is a nonempty set, then it would have a least integer, call this integer m. Now consider the preceding case, which is m – 1. This (m – 1)th case must be true by definition, and we know that there is such a case because as a basis for the induction we showed that there was at least one true case. We now apply the step forward, starting from this true case m – 1, and conclude that the next case, case m, must be true. But we assumed that it was false! A contradiction. So we go back up the argument until we find the if and decide that what immediately follows the “if” must be false. Thus there are no false cases and the formula is true for all integers above the integer used as the basis for induction. Logical consistency is a foundation stone of both mathematics and science.

The technical difficulty the beginning student has with mathematical induction is in the matter of how to go from one step, say the (m – l)th case, to the next, the mth. When applying mathematical induction to sums, as we did in the above cases, you simply add the next term of the series to both sides of the equation (you must preserve the equality you are assuming for the induction step). As we will later see, in other cases you must do the appropriate thing to get to the next case.

The theoretical difficulty the student has with mathematical induction arises from the reluctance to ask seriously, “How could I prove a formula for an infinite number of cases when I know that testing a finite number of cases is not enough?” Once you really face this question, you will then see how mathematical induction gives an answer; you will understand the ideas behind mathematical induction. It is only when you grasp the problem clearly that the method becomes clear.

Example 2.3-4

Number of subsets in a set. Given a set of n elements (items), how many subsets can be formed? It is customary to include the two improper subsets consisting of the null set (no elements) and the whole set (all the elements). The reason for this will become apparent from the following proof.

We assert that a set of n elements has 2n subsets. This is certainly true for the case n = 1, since there we have only the two improper subsets. Suppose it is true for the case of m elements. Then add one element to make the set with m + 1 elements. The subsets that can be formed are all those without the new element, which total, by hypothesis, 2m, and those with the new element added to each old subset, again 2m. No two of these sets are the same, so for the case m + 1 there are 2(2m) = 2m+1 subsets, and the proof is done.

Consider how awkward the proof would be if the improper subsets were excluded. There would be 2m – 2 subsets for m elements. Adding the new element would produce another 2m – 2 subsets. We would then have to argue that the new element itself was a subset, as well as that the original whole set is now a subset. This would give the proof, since

2(2m – 2) + 1 + 1 = 2m+1 – 2

as required for the induction step.

This shows how the details of mathematical proofs can sometimes shape the very definitions to be made; definitions do not arise from the vacuum of pure thought, but from the real world of experience plus the details of working with the definitions. This example again shows the importance of thinking of the case n = 0 (counting 0, 1, 2, …).

As the following example shows, sometimes it is easier to think about the downward induction.

Example 2.3-5

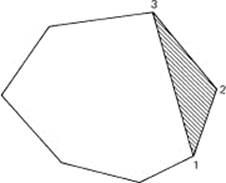

Sum of the angles of a convex polygon. Prove that the sum of the interior angles of a convex polygon having n sides is 180° (n – 2).

Figure 2.3-1 Convex polygon

Given a convex polygon we pick three consecutive vertices along the edge and draw a line from the first to the third. See Figure 2.3-1. This forms a triangle. If we remove the triangle (of course we leave the edge between the first and third points), we decrease the number of sides of the polygon by 1 and the sum of the interior angles by 180°. We can continue this as long as we have at least four sides. When we get down to the case of three vertices, we have a triangle and know that the sum of the interior angles is 180°. Thus we have a proof in the downward form; if the formula is not true for some n, then an earlier case (n – 1) is not true, and when we get down to the first case which we know to be true we decide (since the steps are reversible) that all the other cases must also be true.

EXERCISES 2.3

1.Find the sum of the first 100 positive integers. (It is said that Gauss at the age of 10 solved this in his head, which started him on the path to being a mathematician.)

2.Find the sum of the squares of the first 100 positive integers.

3.For the series 1 + 23 + 33 + … + (m – 1)3, what term would you add in an inductive proof?

4.For the series 1 + 4 + 7 + … + 3m – 2, what term would you add in an induction proof?

5.For the series 1 + ![]() +

+ ![]() + … + 1/(m – 1), what term would you add in an induction proof?

+ … + 1/(m – 1), what term would you add in an induction proof?

6.Prove that 1 + 3 + 5 + … + (2n – 1) = n2.

7.Show that

![]()

Hint: 1 /k(k + 1) = 1/k – 1/(k + 1).

8.Prove that sin (nx)/sin x is a polynomial of degree n – 1 in cos x. Hint: Expand sin (m + 1)x.

9.Guess the coefficient of the leading term of the polynomial representation of cos nx. Prove it by induction.

10.Show that 6 divides n3 – n for all n > 1.

11.By mathematical induction, prove that 2n > n2 for all n > 4.

12.Suppose that all you can prove is that from the case m the case m + 3 follows. How would you make the proper induction argument?

13.Suppose you want to prove an assertion for all integers positive, zero, and negative. Give a suitable pattern of logic.

14.Consider the following argument. Theorem: All positive integers are interesting. Proof: Certainly the integer 1 is an interesting number. If all the integers are not interesting, then there is a least not interesting integer. But this is an interesting property! A contradiction.

15.Marbles in a bag example: The theorem states that in any bag of black and white marbles all the marbles are the’ same color. The proof by induction starts with the case of a bag with one marble. Certainly all the marbles in the bag are of the same color. Next we assume the theorem is true for any bag of m marbles. Take a bag with m + 1 marbles and remove one. By the induction hypothesis, they are all of the same color. Replace it and remove another marble. Again they are all of the same color. Thus all the marbles in the bag are of the same color. Hint: In all such cases of an obvious error, it is wise to examine closely the first case you believe to be false.

16.Extend Example 2.3-5 to nonconvex polygons. What restrictions do you want to observe?

17.*Two different arguments are presented for the logic used in connection with mathematical induction. Write an essay comparing them in detail. Which do you prefer and why?

18.Prove O2(n) = 12 + 32 + 52 + … + (2n – 1)2 = n(4n2 – 1)/3.

2.4 THE BINOMIAL THEOREM

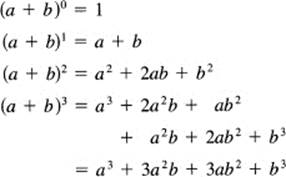

A very important application of mathematical induction is the proof of the binomial (two term; “bi” means two) theorem:

![]()

Again we begin with a short table to get a feel for the results. This habit of doing a number of special cases first was very characteristic of Leonhard Euler (1707–1783), one of the greatest mathematicians who ever lived. By this time we have learned the value of starting with the zeroth case. Notice that for this induction each line is obtained from the preceding line by multiplying by (a + b).

We need a notation for describing the coefficients. For the nth power, let us write the kth coefficient along the nth line as the binomial coefficient

C(n, k)

Read this as “Binomial coefficient of order n and index k,” or more simply, “C of n and k” The first integer n names the row, and the second integer k names the position along the row. The values of k run from 0 through n. For any other integer k, it is conventional to set the binomial coefficient equal to zero. A currently popular notation for the binomial coefficients is

![]()

but this is hard to type, takes extra space whenever it occurs in a running text of words, and is difficult to handle using a computer terminal, so we use the older, more convenient, C(n, k) notation here.

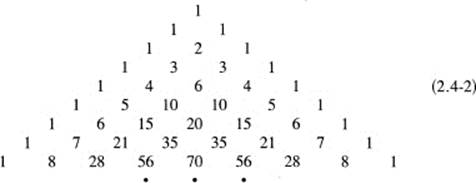

The binomial coefficients are the numerical coefficients in the above equations, and they may be written neatly in the form of the Pascal (1623–1662) triangle as follows:

This triangle also appears in Omar Khayyam’s (1048–1131) Algebra, as well as in Chu Shih-Chien’s Precious Mirror (1303).

As is easily seen, each (upper) edge number is a 1, and each inner number is the sum of the two numbers immediately above it. To show that this last statement is true in general (meaning for all cases), it suffices to note the way a term in one line arises from those in the line above when the corresponding equation is multiplied by (a + b). Looking at the coefficient in the triangle corresponding to the kth term in the nth row (beyond the n = 1 row),

an–kbk

we see that one part of the term comes from the C(n – 1, k – 1) when we multiply it by the a (the previous row and one lower power in a), and the other part comes from the C (n – 1, k) when we multiply it by the b (the previous row and the same power of a). Thus we have the fundamental relation

![]()

Each number is the sum of the two numbers in the row above. This equation, together with the boundary l’s, implicitly defines the binomial coefficients. It is easily seen that these are the mathematical induction conditions that make the binomial theorem true for all positive integer components.

In more mathematical notation, this identity is

![]()

and we select the corresponding term, akbn−k, from both sides.

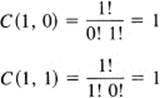

To get an explicit representation for the C(n, k) we need to introduce the factorial notation for the product of the first n integers:

1(2)(3)(4) … (n) = n!

It is easy to see that 1! = 1 and that

![]()

for all n greater than 1. This is a recursive definition; each factorial (after the first) is defined in terms of an earlier factorial.

It is convenient to extend the definition of the factorial function in a consistent way to the case of 0!. We simply ask what 0! would have to be to satisfy the Equation (2.4-4). Put n = 1 and we get

1! = 1(0!)

from which we conclude that

0! = 1

is a reasonable extension of the definition of a factorial.

We now use mathematical induction to prove that the value of C(n, k) is given by

![]()

It is certainly true for the case n = 1, where the two cases to check are k = 0 and k = 1.

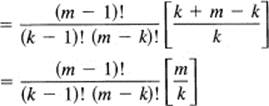

and this serves as the basis for the induction reasoning. Assuming that the factorial representation (2.4-5) of the binomial coefficient is true for the case m – 1 (for all k), we compute C(m, k) from the earlier identity (2.4-3) between the binomial coefficients. The right-hand side of (2.4-3) is

![]()

We factor out the first term on the right-hand side (notice that we have an extra factor k in the denominator of the second term and lack a factor m – k in the denominator, which must therefore also be supplied in the numerator). Hence the right-hand side is

![]()

We now rearrange the expression in brackets.

![]()

The last line arises when we move the trailing factors of m and k forward to their corresponding factorial terms. Thus we have completed the induction step for Equation (2.4-5). Since we verified the induction for the case k = 1, we seem to have a complete proof. But let us check all the logic carefully. We have the basis for the induction, and we moved from the (m – 1)th case to the mth case for k – 1,2, …, m – 1. We missed the two end values, k = 0 and k = m. Examining the proof, we see from (2.4-5) that both end values C(m, 0) and C(m, m) are 1. Thus we have completed the proof to cover all m + 1 terms for the new line of binomial coefficients.

It is worth noting at this point that

![]()

which simply says that the binomial coefficients of order n are symmetric in k about the middle value (or the two middle values); replacing k by n – k does not change the numerical value of the binomial coefficient of order n. Note that in spite of the indicated division the binomial coefficients are integers.

Example 2.4-1

C(17, 12) = C(17, 5) C(17, 8) = C(17, 9)

For a given order n, the binomial coefficients along a row can be found directly rather than by laboriously filling in all the Pascal triangle down to that level. There is a simple recursive rule, which is clear from the following. In each case the brackets [ ] are the preceding coefficient in the row:

![]()

In practice you write down a 1 for the coefficient of the first term, multiply this by n and divide by 1 to get the next coefficient, multiply this by n – 1 and divide by 2 to get the next coefficient, multiply this by n – 2 and divide by 3 to get the next, and so on, and you end up with 1 for the final coefficient. Actually, since you know that the coefficients are symmetric about the middle coefficient(s), you need only compute one-half of them if you are confident; computing them all gives a check by using the symmetry.

Rule. In passing from one term in the row to the next term in the same row, you simply multiply by a number one smaller than the one you multiplied by in the previous step and divide by a number one greater than you divided by in the previous step. In symbols the relationship we are using is

![]()

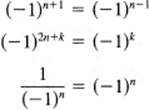

If in the binomial expansion (2.4-1) we replace b by –b (the expansion is true for any a and b), then since

![]()

we get the same terms, but now with alternating signs:

![]()

The alternation in sign is very common in mathematics and is worth a moment’s extra attention. The quantity

(–1)n

has the following useful properties:

Example 2.4-2

We now return to further examples of mathematical induction.

Example 2.4-3

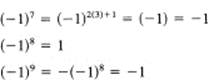

Fibonacci number representation. The famous Fibonacci (also known as Leonardo of Pisa, 1170–1250) numbers fk are defined by the rules

Let us compute a number of early cases.

Suppose you are now told that the numbers can be represented by the form (which we will derive in example 24.8-1

![]()

The presence of a ![]() looks like the formula cannot lead to integers, but from the definition the Fibonacci numbers are clearly integers! A closer look shows that the first of the two binomial expansions of order n has all positive terms, while the second binomial expansion has alternating signs. These are being subtracted, and therefore only terms with the square root will persist inside the brackets [remember that (

looks like the formula cannot lead to integers, but from the definition the Fibonacci numbers are clearly integers! A closer look shows that the first of the two binomial expansions of order n has all positive terms, while the second binomial expansion has alternating signs. These are being subtracted, and therefore only terms with the square root will persist inside the brackets [remember that (![]() )2 = 5]. The divisor outside will exactly take care of them! Thus you see that it is possible that this formula leads to integers. But we still face the problem of proving a formula for an infinite number of cases. As a basis for the conventional mathematical induction, we check that the cases n = 0 and n = 1 are correct. In the process we see how the square roots exactly cancel, confirming our earlier reasoning. If you not do see this, then do the next several cases until you see why

)2 = 5]. The divisor outside will exactly take care of them! Thus you see that it is possible that this formula leads to integers. But we still face the problem of proving a formula for an infinite number of cases. As a basis for the conventional mathematical induction, we check that the cases n = 0 and n = 1 are correct. In the process we see how the square roots exactly cancel, confirming our earlier reasoning. If you not do see this, then do the next several cases until you see why ![]() cancels out.

cancels out.

We therefore assume that the formula is correct for all cases up to n, and form the right-hand side of the defining equation for the fn+1.

![]()

To expand these seems like too much work. However, it might possibly work out that the terms that contain the + ![]() sign will separately combine to produce the new term that contains the +

sign will separately combine to produce the new term that contains the + ![]() sign. And, therefore, the terms that contain the –

sign. And, therefore, the terms that contain the –![]() sign would likewise combine properly. It is worth a try! We have

sign would likewise combine properly. It is worth a try! We have

![]()

We next factor out the lowest power of the two terms that contain the + ![]() to get for the fn + fn–1 terms; and writing only the terms with the positive

to get for the fn + fn–1 terms; and writing only the terms with the positive ![]() , we have

, we have

![]()

But this is

![]()

If this is to be the proper term for the fn + 1 expression, then we must have the bracketed expression in this equation:

![]()

Therefore, let us multiply out the right-hand side to check this condition. We get

![]()

as it should! The corresponding terms with the – ![]() behave similarly. Thus we have completed the induction step (as well as verified the induction for two special cases) and believe that the representation of the Fibonacci numbers in this form is correct.

behave similarly. Thus we have completed the induction step (as well as verified the induction for two special cases) and believe that the representation of the Fibonacci numbers in this form is correct.

From the recurrence relation defining the numbers, we get f0 = 0, f1 = 1, f2 = 1, f3 = 2, f4 = 3, f5 = 5, f6 = 8, …. Evidently, it is much easier to compute the first few Fibonacci numbers from the recurrence relation than from the formula we just proved. The formula is useful, however, when estimating the values of the Fibonacci numbers for large n. You have only to compute numerically

![]()

If you raise this number to the appropriate power n and finally divide by ![]() , you get an estimate of the size of the nth number, because the term with the minus sign

, you get an estimate of the size of the nth number, because the term with the minus sign

![]()

is less than 1 in size, and for high powers it is very small (see Section 7.2). If you round to the nearest integer, the first term alone gives fn.

The number (1 + ![]() )/2 is called the golden ratio and is believed (by some people) to be the ratio of the sides of the most esthetically pleasing rectangle. (This can be seen in some packaging!) It also has the property that if you remove the largest square that you can from the rectangle then the remaining rectangle has the same ratio of its two sides (Figure 2.4-1).

)/2 is called the golden ratio and is believed (by some people) to be the ratio of the sides of the most esthetically pleasing rectangle. (This can be seen in some packaging!) It also has the property that if you remove the largest square that you can from the rectangle then the remaining rectangle has the same ratio of its two sides (Figure 2.4-1).

Figure 2.4-1 Golden rectangle

EXERCISES 2.4

1.From the Pascal triangle, expand (a + b)5.

2.From the Pascal triangle, expand (a – b)5.

3.Write out the next line of the Pascal triangle.

4.Expand (1 + x)10.

5.Expand (a – b)7.

6.Expand (p + q)n.

7.Expand (p – q)n.

8.Expand and evaluate (1 + 1)5.

9.Expand and evaluate (1 – 1)5.

10.Add the expansions in Exercises 8 and 9 and divide by 2 to get the sum of C(5, 0) + C(5, 2) + C(5, 4).

11.Add the expansions in Exercises 6 and 7 and divide by 2 to get the sum of the terms of even index terms C(n, 0)pn + C(n, 2)pn–2q2 + ….

12.In Exercise 11 set p = q = ![]() and find the sum of the even index binomial coefficients of order n.

and find the sum of the even index binomial coefficients of order n.

13.Expand (1 + x)n and then set x = 1 to get the sum of all the binomial coefficients of order n.

14.As in Exercise 13, but replace x by –1. Then add the two equations and divide by 2 to get the sum of the even index coefficients

C(n, 0) + C(n, 2) + C(n, 4) + … = 2n – 1

By subtraction find the sum of the odd index terms.

15.Show that 1(1!) + 2(2!) + 3(3!) + … + n(n!) = (n + 1)! – 1.

16.Show that the edge values of the Pascal triangle satisfy the recurrence relation for the binomial coefficients. Hint: Outside the printed triangle the values are all zero.

17.Show that

![]()

18.Show that (2k + 1)!/(2kk!) = (2k + 1)(2k – 1)(2k – 3) … (1).

19.Show that (2k)!/(k! k!) = 2k[2 –1/k][2 – 1 /(k – 1)][2 – 1/(k – 2)] … [2 – 1].

20.Prove the last statement of this section.

21.Why would you not want to extend the definition of the factorial function to negative integers?

2.5 MATHEMATICAL INDUCTION USING UNDETERMINED COEFFICIENTS

The glaring weakness of mathematical induction is that you must know the answer before you start. All you do is verify the truth; you do not find the formula. Sometimes you do know the answer before you start, but usually you are not so lucky. Often much of your problem is to find the formula. Having found a result by some method, mathematicians often then publish only the polished inductive proof, which completely conceals the method they used originally to find the formula.

How can you find the right-hand side of a formula for starting the mathematical induction? Often guessing from the results of a few cases will work, but sometimes it will not. The following method of undetermined coefficientsrequires only that you know the general form of the answer, not all the details of it.

The idea is as follows:

1.Assume the general form of the answer.

2.Impose the condition that the induction be true for n = 0 (or any other convenient value).

3.Impose the induction condition that the step from m – 1 to m be true.

4.From steps 2 and 3, determine the unknown coefficients.

If we can do all these steps, then we are assured, without carrying it out, that the steps of the induction proof will be true; we have already in steps 2 and 3 imposed the conditions that they will!

If we cannot carry out all the steps, we have assumed the wrong form, but the failure will probably suggest modifications to the assumed form, and we can try again (and again).

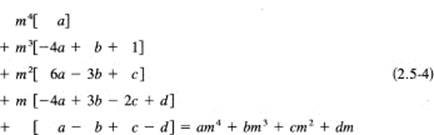

Extension 2.5-1

Sum of the third powers of the integers. When computing the sums Sk(n) of the kth powers of the successive integers for the cases k = 1 and k = 2, we found that the right-hand sides were polynomials of degrees 2 and 3, respectively. The obvious conjecture is that the sum of the kth powers must be a polynomial of degree k + 1. You can make an additional check on the hypothesis by dropping back to the case k = 0. The positive integers to the zeroth power are always 1, so the sum should be exactly n, a polynomial of degree 1. This gives you further evidence that the conjecture is likely to be true.

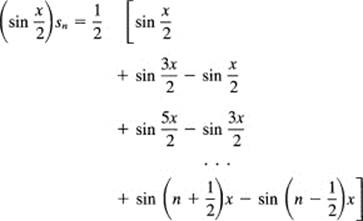

Therefore, for step 1 we write out the sum of the third powers of the integers as a polynomial of degree 4 with five undetermined coefficients, a, b, c, d, and e.

![]()

For step 2 we use as a basis for the induction the case of n = 0, no terms, and this forces us to conclude that

e = 0

For step 3 we apply the induction step (going from m – 1 to m) to this polynomial. To do this we simply add m3 to both the left- and right-hand sides. As usual, we then need to transform the right-hand side to the proper form. The right-hand side of (2.5-1) is

![]()

and this must be, if the induction is to work, exactly equal to

![]()

For step 3 we equate these two expressions (2.5-2) and (2.5-3).

![]()

We now rearrange the left-hand side of this equation. Apply the binomial expansion to each term, and organize the results by the powers of m to match the form of the right-hand side. The equation is

We see the binomial coefficients in the columns. The extra 1 in the m3 term arises from the added m3 to each side to make the induction step.

Notice that each term on the right-hand side of this equation will exactly cancel a corresponding term on the left. We have, therefore, a polynomial of degree 3, which is to be zero for all positive integers m.

Can any polynomial be zero for all positive integers unless all its coefficients are zero? What is the equation except the assertion that the sum of all its positive terms will exactly cancel the sum of the negative terms? More tersely, the sum of all the terms must cancel out completely. This being so, we see immediately that, if we pick m sufficiently large, then the first term cannot possibly be canceled by all the other terms in the equation unless the coefficient –4a + 1 is zero. We apply the same argument to the next lower power, then the next lower, and so on, and thus we have the following important rule:

If a polynomial is identically zero for all positive integers, then each coefficient must be zero separately.

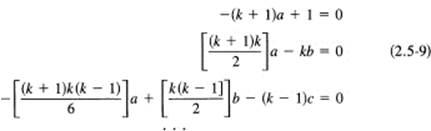

We apply this to the long Equation (2.5-5) in the variable m (partially simplified by the canceling of like terms). Equate the coefficients of each power of m to zero separately and get the set of equations

To complete step 4, we solve these equations in turn, top down. Notice that the first equation involves only a. The second uses this value of a to find the value of b. The third equation involves only the new value c and uses the two values a and b just found. The system of equations (2.5-6) is triangular (a common case) and is therefore easily solved. The undetermined coefficients are

Thus the polynomial we started with, and wrote with undetermined coefficients, now has its coefficients determined. The formula for the powers of the cubes of the consecutive integers is now known to be

![]()

Without directly demonstrating it, we know that the mathematical induction process will apply to this equation, and therefore it is a correct expression.

We have shown that the sum of the cubes of the first n positive integers is exactly the square of the sum of the first powers of the first n positive integers. This is a remarkable feature that apparently is just a chance relationship, as there seems to be no comparable feature among the other powers of the integers. But it is true that S5 + S7 = 2(S3)2.

Let us review this method of undetermined coefficients.

1.We assumed the general polynomial of degree k + 1 for the sum of the kth powers of the integers (2.5-1).

2.We set up a basis for the induction (this forced e = 0).

3.Then we applied the induction step to the assumed general form and rearranged the terms as powers of m (2.5-5). This polynomial was identically zero for all values m. Using an important result, we concluded that we could equate the coefficients to zero separately.

4.When we carry out the steps, we are assured that the formula will obey the induction step. We are sure that the formula is correct provided we accept the pattern of mathematical induction as being valid reasoning.

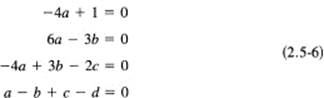

Generalization 2.5-2

Sum of the kth powers of the integers. For practice in handling general expressions, let us carry out the first few steps for the general case of the kth powers. The long spread-out Equation (2.5-4) we first found will now look like (we see the binomial coefficients in the appropriate columns)

Next, cancel each term on the right with the corresponding term on the left, and then equate each coefficient to zero separately. As before, from the basis for the induction with n = 0 we get that the constant term is zero. The individual equations are in a triangular form, which is easy to solve one equation at a time; compare with (2.5-5) and (2.5-6).

The first equation of (2.5-9) gives (for all k ≥ 0)

![]()

The second equation gives (for all k ≥ 1)

![]()

After some algebra, the third equation gives (for all k ≥ 2)

![]()

Still further algebra gives d = 0 (k ≥ 3). Evidently, we could go as far as we pleased (and our patience held out). As a result we have the general formula

![]()

for the summation of the kth powers of the consecutive integers.

We can easily check this result against our earlier special cases of k = 0, 1, 2, and 3. We have the following table:

One purpose for finding part of the general solution was to give you practice in the art of working with abstract symbols. The approach we used to find the sum of the powers of the consecutive integers is the same as that used by Jacques (James) Bernoulli (1654–1705). The results of the general case checked the several special cases. If there had been a disagreement, then either the special case would have been wrong or else there would have been an error in the general case. Thus, even if you are only interested in some special case, working out the details of the general case gives increased confidence in the particular result. You are then more willing to risk your reputation on the result.

We see that the method of undetermined coefficients depends on guessing the proper form. If you miss guessing the right form, this error is revealed to you by the subsequent work; you will not be able to complete all the algebra properly. But often the form of the failure will suggest a new guess at the general form of the solution.

This method of undetermined coefficients is a powerful method for finding answers to problems when all you have is a general idea of the form of the answer. If you guess right, when you finish you will have a proof by induction. We will use this method of undetermined coefficients many times in various ways to demonstrate its versatility. We applied the method of undetermined coefficients to mathematical induction, and therefore we applied the conditions that the steps of mathematical induction be true; for other applications of the method we would apply other, appropriate conditions. The basic idea is that we assume the form we think we want and then apply the conditions that define the object we are searching for. When the method works, the undetermined coefficients are determined by the applied conditions and we have our answer.

EXERCISES 2.5

1.Compute the sum of the first 100 integers cubed.

2.Sum 101 + 102 + 103 + … + 200.

3.Sum 1012 + 1022 + 1032 + … + 2002.

4.*In the induction we did for finding the sum of the third powers of the integers in this section, we went from m – 1 to m. Discuss and carry out the induction when you go from m to m + 1. In this case, which one do you prefer?

5.Find one more term in the fourth power summation of the integers, and then find the real factors of the resulting polynomial for the sum of the fourth powers.

6.*Repeat Exercise 5 but for the generalization to the kth powers.

7.Show that Sk(2n) – 2kSk(n) equals the sum of the kth powers of the odd integers

1k + 3k + 5k + … + (2n – 1)k

8.Adapt the idea in Exercise 7 to find the first three terms of the sum of the kth powers of the integers with alternating signs, 1k – 2k + 3k – … – (2n – 1)k.

9.*Prove that S5 + S7 = 2(S3)2.

2.6 THE ELLIPSIS METHOD

The ellipsis method is another way of handling the problem of proving an infinite number of cases. In this method we write the equation in the general form with the conventional three dots, “….”

Example 2.6-1

Sum of a geometric progression. Consider the sum of a geometric progression where the first term is a, and each term after the first is found by multiplying the preceding term by the fixed rate r. The first n individual terms are, in sequence,

a, ar, ar2, ar3, … , arn–1

The sum of the first n terms is

Sn = a + ar + ar2 + ar3 + … + arn–1

A bit of study suggests that multiplying this equation by r will produce another equation with many of the same terms:

rSn = ar + ar2 + ar3 + ar4 + … + arn

Subtracting the second of these equations from the first produces a great deal of cancellation of terms:

(1 – r)Sn = a – arn = a(1 – rn)

Provided r ≠ 1, we can divide both sides by 1 – r to solve for Sn:

![]()

If r = 1, then this formula has a division by 0 and is invalid. In this case we note that the sum of the terms of the geometric progression is

Sn = a + a + a + … + a = na

When r is greater than 1 in size, the conventional form for writing the sum is (it is obtained by multiplying both numerator and denominator by –1)

![]()

We have a formula, (2.6-1) or (2.6-2), for the sum of a geometric progression for all n; by using the ellipsis notation all the equations have been solved in parallel, all at the same time.

An especially useful case of this result occurs when a = 1 and r is replaced by x.

![]()

This shows for n = positive integer that 1 – xn is always divisible by 1 – x. Now that we know the formula we could, of course, give an inductive proof; but this is not necessary since our derivation proved the truth of the formula.

Example 2.6-2

Sum of an arithmetic progression. Another commonly occurring progression is the arithmetic progression where each term is found from the preceding term by adding a constant amount labeled d (which might be a negative number). The first n terms are, in sequence,

a, a + d, a + 2d, … , a (n – 1)d

The sum of the terms is

Tn – a + (a + d) + (a + 2d) + (a + 3d) + … + [a + (n – 1)d]

We can write this sum as (arrange the terms by the parameters a and d)

Tn = na + d[1 + 2 + 3 + … + (n – 1)]

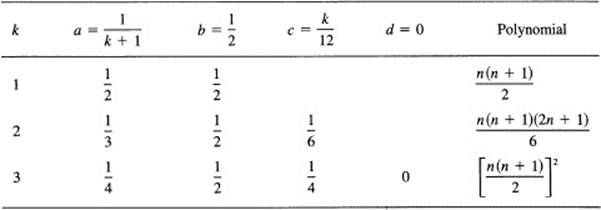

Now using our earlier sum for the consecutive integers, we get for the sum of an arithmetic progression

where l is the last term. This formula shows that the sum Tn of an arithmetic progression is the average of the first and last terms multiplied by the number of terms n.

An alternative proof of the formula for the sum of an arithmetic progression [and the proof that the young Gauss (1777–1855) probably used] is to write out the progression twice, once forward and once in reverse order (where again l is the last term):

Tn = a + (a + d) + (a + 2d) + … + [a + (n – 1)d]

Tn = l + (l – d) + (l – 2d) + … + [l – (n – 1)d]

Then add the two equations:

2Tn = (a + l) + (a + l) + … + (a + l)

There are exactly n of the terms a + l, so the sum must be

![]()

The first proof of the general arithmetic progression was based on the very simple special case of a = 0, d = 1. This is a useful method of proof—examine the simplest case you think will include the main difficulty, and with luck its proof will be easy as well as serve as a base for proving the general case. (See Hilbert’s statement quoted in Section 1.5.) The second method of proof was based on a sudden insight into a trick that would work in this case; in a sense, once this derivation is seen, it shows “why” the answer is what it is.

Example 2.6-3

Sum of cosines. We can also use the ellipsis method on the trigonometric identity of Section 2.2.

![]()

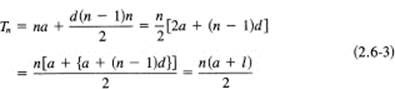

We need to know a trick (which must have been hard to discover the first time, but once seen is easy to use again in similar situations). It is based on the trigonometric identity

![]()

which can be checked by expanding the basic addition formulas (16.1-8) and (16.1-9) on the right-hand side of the equation. Multiply the given trigonometric sum by sin (x/2), and apply the above identity to each product:

The right-hand side collapses due to cancellations (as did the geometric progression), and this time we have only one term:

![]()

Therefore, the original sum is (solve for sn)

![]()

Again using the ellipsis method, we have found the solution for all the equations in parallel; we know the sum for any n and all x for which sin (x/2) ≠ 0. (The symbol ≠ means “not equal to.”)

There are many other methods for coping with an infinite number of cases but we will not take them up here. Some other methods will appear as we go along in the text.

EXERCISES 2.6

1.Find the sum of 1 + 2 + 4 + 8 + … + 2n.

2.Sum 3 + 6 + 12 + … for n terms.

3.Sum ![]() .

.

4.Find the sum of 1 + 4 + 7 + … + 301.

5.Find the sum of 100 + 96 + 92 + … + 0.

6.It is said that the inventor of the game of chess, when asked to name his reward, asked for one grain of wheat for the first square, 2 for the second, 4 for the third, and so on. What was the sum he asked for? Using the approximation that 210 is approximately 103, estimate the sum.

7.When r = 2, compare the last term with the sum of the geometric progression. (The last term is the amount that happens in the last doubling period of the geometric progression.)

8.If the rate r is greater than 1 (and the starting value is positive), compute the value of n for which the next term exceeds a given number, say C, no matter how large C may be.

9.It is said that currently knowledge is doubling every 17 years. If this rate continued what would be the increase in 340 years?

10.In Example 2.6-3 analyze the case when sin (x/2) = 0.

2.7 REVIEW AND FALLACIES IN ALGEBRA

Algebra arose from arithmetic, and the letters used in algebra were originally assumed to be numbers. Hence the rules that evolved for algebra were those used in arithmetic. It was comparatively late that these rules were formalized into postulates that summarize the practice in arithmetic.

Let the letters a, b, and c be any quantities in the algebra, then we have the following:

1.Associative rules (meaning you can associate the quantities in any way):

a + (b + c) = (a + b) + c, a(bc) = (ab)c

2.Commutative rules (meaning you can interchange the order of the letters):

a + b = b + a ab = ba

3.There exist unique identity elements 0 and 1 together with inverses to each letter (meaning subtraction, and division by other than 0, can always be done):

![]()

with the corresponding properties

a + 0 = a, a(0) = 0, a(1) = a

4.Distributive rule (connects addition and multiplication):

a(b + c) = ab + ac

From these simple rules all the rules of algebra follow, provided you use the standard forms of logic, including equals plus equals are equal. For example, the rule of transposition is easily deduced.

Example 2.7-1

Let

a + b = 0

Then use (the identity)

–b = –b

Add both equations together and apply the associative rule:

a + (b – b) = 0 – b

or

a = –b

and we have the rule for transposing a term to the other side of an equation.

Example 2.7-2

We can similarly derive the rule that a negative times a negative is a positive. We begin with

a(0) = a(b – b) = a(b) + a(–b) = 0

Hence

a(–b) = –ab

We next begin with

–a(0) = –a(b – b) = (–a)b + (–a)(–b) = 0

from which it follows that

(–a)(–b) = –(–ab) = ab

Our desire for general rules without exceptions forces these results.

We repeat, these rules were abstracted from the rules of arithmetic that developed over centuries. They represent what you have learned over many years, but being in a compact form they summarize a lot of individual rules you learned when you studied arithmetic. It is because the symbols in algebra were at first seen as merely generalized numbers that the rules for algebra are the same as those for arithmetic.

We have reviewed only the parts of algebra that have reasoning patterns which are needed later, and have neglected many other parts with which we assume you are familiar. However, we need to warn the reader against a number of standard errors that arise when doing algebra without thinking.

The simplest fallacy is supposing that

![]()

for all x. There is a sign missing. We see this error in the equation

![]()

which is not true when x is negative. What is true is that

![]()

Example 2.7-3

A better disguise of picking the wrong sign of the radical is the following “proof.” Surely

–15 = –15

Rewrite this as

which is clearly false!

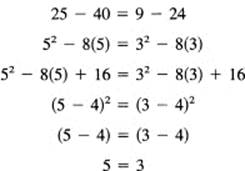

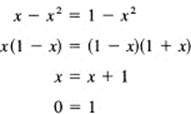

Another class of fallacies arises from the division by zero. There are many such examples, and perhaps the simplest is the following.

Example 2.7-4

Let

x = 1

Then

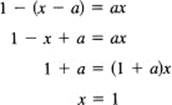

Example 2.7-5

For a more subtle example, consider the equation

![]()

Cleared of fractions,

But when a = 1 the original equation is not defined for x = 1; hence x = 1 cannot be a solution. Also, what happens when a = – 1? When a = – 1, the equation is an identity and any x other than 0 and –1 is a solution!

Clearly, we need to be careful, when we do a long problem, to see that we have not included one or more of these fallacies. Whenever the result is going to be used, it should receive a careful inspection that searches for these kinds of blunders.

2.8 SUMMARY

This chapter looked at the integers and examined how to prove a result for an infinite number of values. The classic tool for doing this is mathematical induction, which has a number of simple variations. The ellipsis method is widely used as an alternative method of proof. Occasionally, a simple indirect proof by contradiction can be used (for example the proof in Section 2.1 that there is no largest prime number).

Thus we came to grips almost immediately with a fundamental problem of mathematics, coping with the infinite. One important tool introduced is the device of undetermined coefficients: if you know the form, but not the details, then you can impose the conditions you want to apply to the form and determine the details. In one particular case of using the form of a mathematical induction proof we imposed both the initial conditions and the induction step, and these conditions determined the particular coefficients. Along the way we did a number of examples (including geometric and arithmetic progressions) whose results are useful both in using mathematics generally and in later parts of the book. The examples are useful, but the methods are more important.

The central idea behind all of them is recursion; just as the integers are defined recursively, so too must the proof somehow be recursive in order to exhaust all the cases.

We have not tried to develop the material in an orderly fashion; rather we have used various properties of numbers that you have learned earlier. We concentrated on those that involve the concept of “infinity” and some of its consequences, so that as we go forward things will not be “slipped by” you without your realizing what is implied.

We also included a number of fallacies in algebra to warn you against idiotic results that may arise when you are careless.