Barron's AP Psychology, 7th Edition (2016)

Chapter 4. Sensation and Perception

KEY TERMS

Transduction

Sensory adaptation

Sensory habituation (also called perceptual adaptation)

Cocktail-party phenomenon

Sensation

Perception

Energy senses

Chemical senses

Vision

Cornea

Pupil

Lens

Retina

Feature detectors

Optic nerve

Occipital lobe

Visible light

Rods and cones

Fovea

Blind spot

Trichromatic theory

Color blindness

Afterimages

Opponent-process theory

Hearing

Sound waves

Amplitude

Frequency

Cochlea

Pitch theories

Place theory

Frequency theory

Conduction deafness

Nerve deafness

Touch

Gate-control theory

Taste (or gustation)

Smell (or olfaction)

Vestibular sense

Kinesthetic sense

Absolute threshold

Subliminal messages

Difference threshold

Weber’s law

Signal detection theory

Top-down processing

Perceptual set

Bottom-up processing

Gestalt rules

Proximity

Similarity

Continuity

Closure

Constancy

Size constancy

Shape constancy

Brightness constancy

Depth cues

KEY PEOPLE

David Hubel

Torsten Wiesel

Ernst Weber

Gustav Fechner

Eleanor Gibson

OVERVIEW

Right now as you read this, your eyes capture the light reflected off the page in front of you. Structures in your eyes change this pattern of light into signals that are sent to your brain and interpreted as language. The sensation of the symbols on the page and the perception of these symbols as words allow you to understand what you are reading. All our senses work in a similar way. In general, our sensory organs receive stimuli. These messages go through a process called transduction, which means the signals are transformed into neural impulses. These neural impulses travel first to the thalamus then on to different cortices of the brain (you will see later that the sense of smell is the one exception to this rule). What we sense and perceive is influenced by many factors, including how long we are exposed to stimuli. For example, you probably felt your socks when you put them on this morning, but you stopped feeling them after a while. You probably stopped perceiving the feeling of your socks on your feet because of a combination of sensory adaptation (decreasing responsiveness to stimuli due to constant stimulation) and sensory habituation (our perception of sensations is partially due to how focused we are on them). What we perceive is determined by what sensations activate our senses and by what we focus on perceiving. We can voluntarily attend to stimuli in order to perceive them, as you are doing right now, but paying attention can also be involuntary. If you are talking with a friend and someone across the room says your name, your attention will probably involuntarily switch across the room (this is sometimes called the cocktail-party phenomenon). These processes are our only way to get information about the outside world. The exact distinction between what is sensation and what is perception is debated by psychologists and philosophers. 
For our purposes, though, we can think of sensation as activation of our senses (eyes, ears, and so on) and perception as the process of understanding these sensations. We will review the structure and functions of each sensory organ and then explain some concepts involved in perception.

One of the ways to organize the different senses in your mind is by thinking about what they gather from the outside world. The first three senses listed here, vision, hearing, and touch, gather energy in the form of light, sound waves, and pressure, respectively. Think of these three senses as energy senses. The next two, taste and smell, gather chemicals. Think of these as chemical senses. The last two senses described, vestibular and kinesthetic, help us with body position and balance.

ENERGY SENSES

Vision

Vision is the dominant sense in human beings. Sighted people use vision to gather information about their environment more than any other sense. The process of vision involves several steps.

STEP ONE: GATHERING LIGHT

Vision is a complicated process, and you should have a basic understanding of the structures and processes involved for the AP test. First, light is reflected off objects and gathered by the eye. Visible light is a small section of the electromagnetic spectrum that you may have studied in your science classes. The color we perceive depends on several factors. One is light intensity. It describes how much energy the light contains. This factor determines how bright the object appears. A second factor, light wavelength, determines the particular hue we see. Wavelengths longer than visible light are infrared waves, microwaves, and radio waves. Wavelengths shorter than visible light include ultraviolet waves and X-rays.

We see different wavelengths within the visible light spectrum as different colors. The colors of the visible spectrum in order from longest to shortest wavelengths are: red, orange, yellow, green, blue, indigo, violet; you probably were taught the acronym Roy G. Biv to help you remember this order. As you were also no doubt taught, when you mix all these colors of light waves together, you get white light or sunlight. Although we think of objects as possessing colors (a red shirt, a blue car), objects appear the color they do as a result of the wavelengths of light they reflect. A red shirt reflects red light and absorbs other colors. Objects appear black because they absorb all colors and white because they reflect all wavelengths of light.

STEP TWO: WITHIN THE EYE

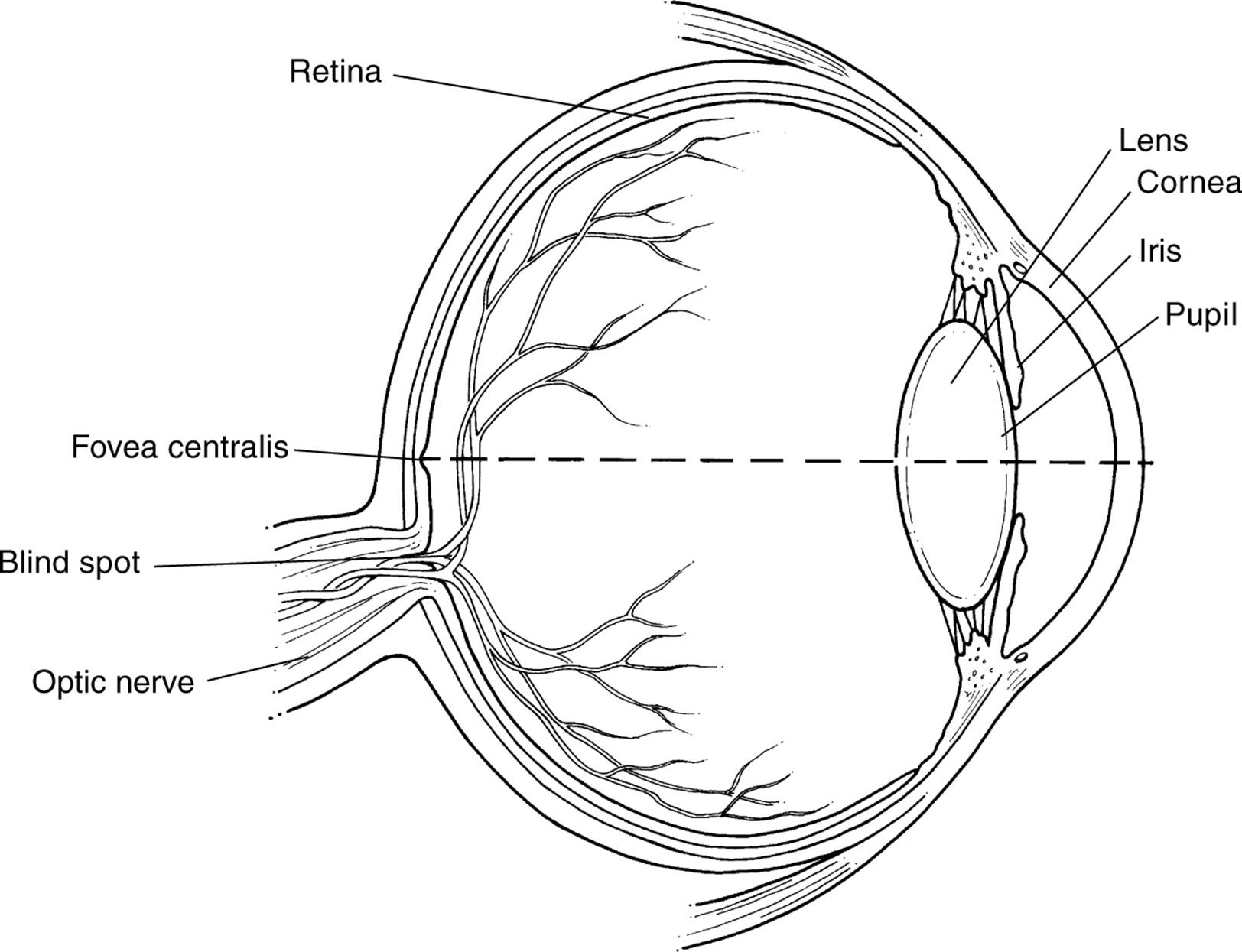

When we look at something, we turn our eyes toward the object and the reflected light coming from the object enters our eye. To understand the following descriptions, refer to Figure 4.1 for structures in the eye. The reflected light first enters the eye through the cornea, a protective covering. The cornea also helps focus the light. Then the light goes through the pupil. The pupil is like the aperture of a camera. The muscles that control the pupil (called the iris) open it (dilate) to let more light in and make it smaller (constrict) to let less light in. Through a process called accommodation, light that enters the pupil is focused by the lens; the lens is curved and flexible in order to focus the light. Try this: Hold up one finger and focus on it. Now, change your focus and look at the wall behind your finger. Then look at the finger again. You can feel the muscles changing the shape of your lens as you switch your focus. As the light passes through the lens, the image is flipped upside down and reversed left to right. The focused inverted image projects on the retina, which is like a screen on the back of your eye. On this screen are specialized neurons that are activated by the different wavelengths of light.

STEP THREE: TRANSDUCTION

The term transduction refers to the translation of incoming stimuli into neural signals. This term applies not only to vision but to all our senses. In vision, transduction occurs when light activates the neurons in the retina. Actually several layers of cells are in the retina.

The first layer of cells is directly activated by light. These cells are cones, cells that are activated by color, and rods, cells that respond to black and white. These cells are arranged in a pattern on the retina. Rods outnumber cones (the ratio is approximately twenty to one) and are distributed throughout the retina. Cones are concentrated toward the center of the retina. At the very center of the retina is an indentation called the fovea that contains the highest concentration of cones. If you focus on something, you are focusing the light onto your fovea and see it in color. Your peripheral vision, especially at the extremes, relies on rods and is mostly in black and white. Your peripheral vision may seem to be full color, but controlled experiments prove otherwise. (You can try this yourself. Focus on a spot in front of you and have a friend hold different colored pens in your peripheral vision. You will find you cannot determine the color of the pens until they get close to the center of your vision.) If enough rods and cones fire in an area of the retina, they activate the next layer of bipolar cells. If enough bipolar cells fire, the next layer of cells, ganglion cells, is activated. The axons of the ganglion cells make up the optic nerve that sends these impulses to a specific region in the thalamus called the lateral geniculate nucleus (LGN). From there, the messages are sent to the visual cortices located in the occipital lobes of the brain. The spot where the optic nerve leaves the retina has no rods or cones, so it is referred to as the blind spot. The optic nerve is divided into two parts. Impulses from the left side of each retina go to the left hemisphere of the brain. Impulses from the right side of each retina go to the right side of our brain. The spot where the nerves cross each other is called the optic chiasm.

Figure 4.1. Cross section of the eye.

You might have guessed that this is a simplified version of this process. Different factors are involved in why each layer of cells might fire, but this explanation is suitable for our purposes.

STEP FOUR: IN THE BRAIN

You might remember from the “Biological Bases” chapter that the visual cortex of the brain is located in the occipital lobe. Some researchers say it is at this point that sensation ends and perception begins. Others say some interpretation of images occurs in the layers of cells in the retina. Still others say it occurs in the LGN region of the thalamus. That debate aside, the visual cortex of the brain receives the impulses from the cells of the retina, and the impulses activate feature detectors. Perception researchers David Hubel (1926–2013) and Torsten Wiesel (1924–present) discovered that groups of neurons in the visual cortex respond to different types of visual images. The visual cortex has feature detectors for vertical lines, curves, motion, and many other features of images. What we perceive visually is a combination of these features.

Theories of Color Vision

TRICHROMATIC THEORY

Competing theories exist about how and why we see color. The oldest and simplest theory is trichromatic theory (also called the Young–Helmholtz trichromatic, or three-color, theory). This theory hypothesizes that we have three types of cones in the retina: cones that detect the different colors blue, red, and green (the primary colors of light). These cones are activated in different combinations to produce all the colors of the visible spectrum. While this theory has some research support and makes sense intuitively, it cannot explain some visual phenomena, such as afterimages and color blindness. If you stare at one color for a while and then look at a white or blank space, you will see a color afterimage. If you stare at green, the afterimage will be red, while the afterimage of yellow is blue. Color blindness is similar. Individuals with dichromatic color blindness cannot see either red/green shades or blue/yellow shades. (The other type of color blindness is monochromatic, which causes people to see only shades of gray.) Another theory of color vision is needed to explain these phenomena.

OPPONENT-PROCESS THEORY

The opponent-process theory states that the sensory receptors arranged in the retina come in pairs: red/green pairs, yellow/blue pairs, and black/white pairs. If one sensor is stimulated, its pair is inhibited from firing. This theory explains color afterimages well. If you stare at the color red for a while, you fatigue the sensors for red. Then when you switch your gaze and look at a blank page, the opponent part of the pair for red will fire, and you will see a green afterimage. The opponent-process theory also explains color blindness. If color sensors do come in pairs and an individual is missing one pair, he or she should have difficulty seeing those hues. People with dichromatic color blindness have difficulty seeing shades of red and green or of yellow and blue.

Most researchers agree with a combination of trichromatic and opponent-process theory. Individual cones appear to correspond best to the trichromatic theory, while the opponent processes may occur at other layers of the retina. The important thing to remember is that both concepts are needed to explain color vision fully.
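The afterimage predictions of opponent-process theory can be written out as a small lookup. The sketch below is only an illustration of the pairing rule described above; the names `OPPONENT_PAIRS` and `afterimage` are made up for this example, and real afterimages depend on more than a simple table.

```python
# Opponent pairs as listed in the text: red/green, yellow/blue, black/white.
OPPONENT_PAIRS = [("red", "green"), ("yellow", "blue"), ("black", "white")]

# Build a two-way lookup so each color maps to its opponent.
OPPONENT = {}
for first, second in OPPONENT_PAIRS:
    OPPONENT[first] = second
    OPPONENT[second] = first


def afterimage(stared_color):
    """Return the afterimage color predicted when the sensor for stared_color is fatigued."""
    return OPPONENT[stared_color]


print(afterimage("red"))     # green
print(afterimage("yellow"))  # blue
```

The lookup works in both directions, which matches the examples in the text: staring at red predicts a green afterimage, and staring at green predicts a red one.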

Hearing

Our auditory sense also uses energy in the form of waves, but sound waves are vibrations in the air rather than electromagnetic waves. Sound waves are created by vibrations, which travel through the air and are then collected by our ears. These vibrations then go through the process of transduction into neural messages and are sent to the brain. Sound waves, like all waves, have amplitude and frequency. Amplitude is the height of the wave and determines the loudness of the sound, which is measured in decibels. Frequency refers to the number of waves per second and determines pitch, measured in hertz. High-pitched sounds have high frequencies, and the waves are densely packed together. Low-pitched sounds have low frequencies, and the waves are spaced apart.

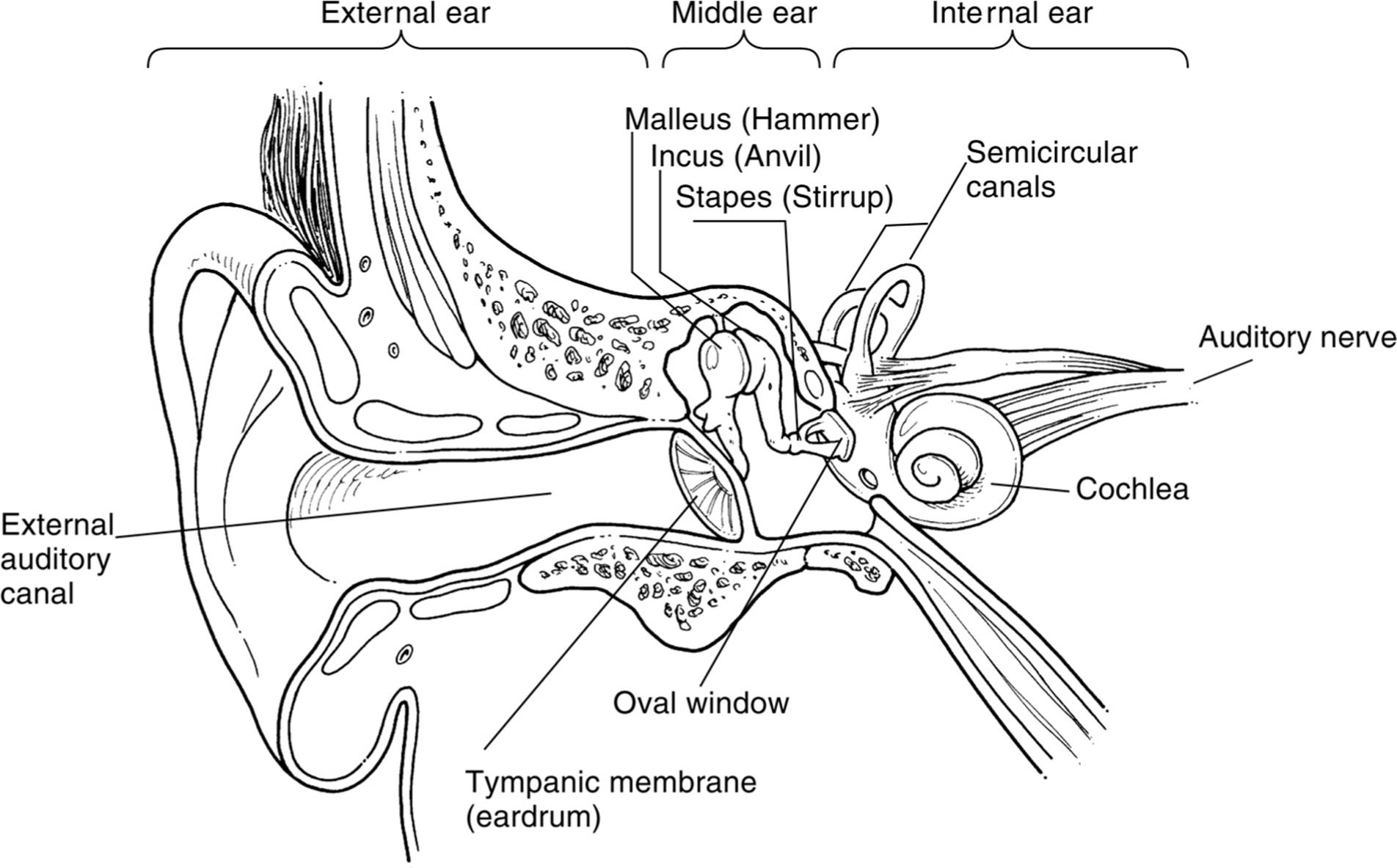

Sound waves are collected in your outer ear, or pinna (see Fig. 4.2 for structures in the ear). The waves travel down the ear canal (also called the auditory canal) until they reach the eardrum or tympanic membrane. This is a thin membrane that vibrates as the sound waves hit it. Think of it as the head of a drum. This membrane is attached to the first in a series of three small bones collectively known as the ossicles. The eardrum connects with the hammer (or malleus), which is connected to the anvil (or incus), which connects to the stirrup (or stapes). The vibration of the eardrum is transmitted by these three bones to the oval window, a membrane very similar to the eardrum. The oval window membrane is attached to the cochlea, a structure shaped like a snail’s shell filled with fluid. As the oval window vibrates, the fluid moves. The floor of the cochlea is the basilar membrane. It is lined with hair cells connected to the organ of Corti, which are neurons activated by movement of the hair cells. When the fluid moves, the hair cells move and transduction occurs. The organ of Corti fires, and these impulses are transmitted to the brain via the auditory nerve.

One way to remember amplitude and frequency is to imagine you are watching waves go by. Frequency is how frequently the waves come by. If they speed by quickly, the waves are high in frequency. Amplitude is how tall the waves are. The taller the waves, the more energy and the louder the noise.

Pitch Theories

The description of the hearing process above explains how we hear in general, but how do we hear different pitches or tones? As with color vision, two different theories describe the two processes involved in hearing pitch: place theory and frequency theory.

PLACE THEORY

Place theory holds that the hair cells in the cochlea respond to different frequencies of sound based on where they are located in the cochlea. Some bend in response to high pitches and some to low. We sense pitch because the hair cells move in different places in the cochlea.

Figure 4.2. Cross section of the ear.

FREQUENCY THEORY

Research demonstrates that place theory accurately describes how hair cells sense the upper range of pitches but not the lower tones. Lower tones are sensed by the rate at which the cells fire. We sense pitch because the hair cells fire at different rates (frequencies) in the cochlea.

Deafness

An understanding of how hearing works explains hearing problems as well. Conduction deafness occurs when something goes wrong with the system of conducting the sound to the cochlea (in the ear canal, eardrum, hammer/anvil/stirrup, or oval window). For example, my mother-in-law has a medical condition that is causing her stirrup to deteriorate slowly. Eventually, she will need surgery to replace that bone in order to hear well. Nerve (or sensorineural) deafness occurs when the hair cells in the cochlea are damaged, usually by loud noise. If you have ever been to a concert, football game, or other event loud enough to leave your ears ringing, chances are you came close to or did cause permanent damage to your hearing. Prolonged exposure to noise that loud can permanently damage the hair cells in your cochlea, and these hair cells do not regenerate. Nerve deafness is much more difficult to treat since no method has been found that will encourage the hair cells to regenerate.

Touch

When our skin is indented, pierced, or experiences a change in temperature, our sense of touch is activated by this energy. We have many different types of nerve endings in every patch of skin, and the exact relationship between these different types of nerve endings and the sense of touch is not completely understood. Some nerve endings respond to pressure while others respond to temperature. We do know that our brain interprets the amount of indentation (or temperature change) as the intensity of the touch, from a light touch to a hard blow. We also sense placement of the touch by the place on our body where the nerve endings fire. Also, nerve endings are more concentrated in different parts of our body. If we want to feel something, we usually use our fingertip, an area of high nerve concentration, rather than the back of our elbow, an area of low nerve concentration. If touch or temperature receptors are stimulated sharply, a different kind of nerve ending called pain receptors will also fire. Pain is a useful response because it warns us of potential dangers.

Gate-control theory helps explain how we experience pain the way we do. Gate-control theory explains that some pain messages have a higher priority than others. When a higher-priority message is sent, the gate swings open for it and swings shut for a low-priority message, which we will not feel. Of course, this gate is not a physical gate swinging in the nerve; it is just a convenient way to understand how pain messages are sent. When you scratch an itch, the gate swings open for your high-intensity scratching and shut for the low-intensity itching, and you stop the itching for a short period of time (but do not worry, the itching usually starts again soon!). Endorphins, or pain-killing chemicals in the body, also swing the gate shut. Natural endorphins in the brain, which are chemically similar to opiates like morphine, control pain.

CHEMICAL SENSES

Taste (or Gustation)

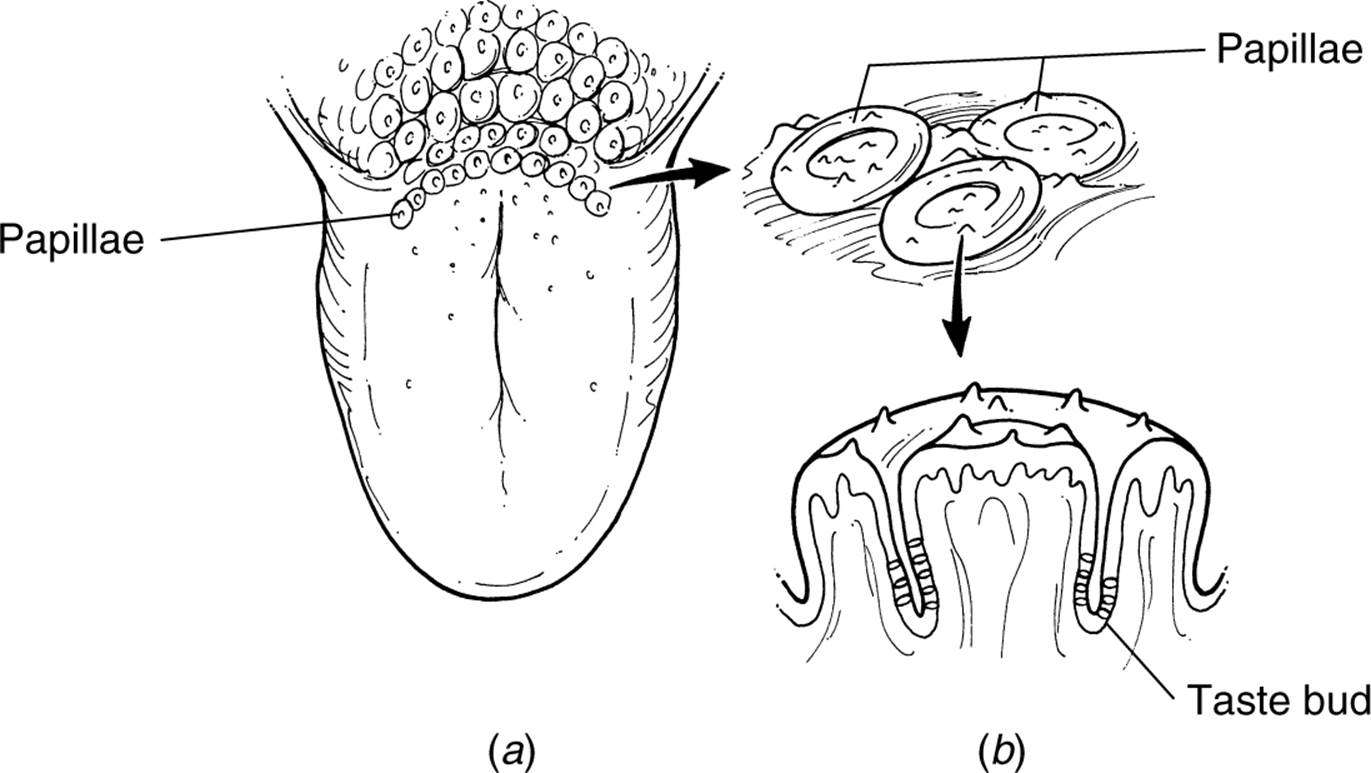

The nerves involved in the chemical senses respond to chemicals rather than to energy, like light and sound waves. Chemicals from the food we eat (or whatever else we stick into our mouths) are absorbed by taste buds on our tongue (see Fig. 4.3). Taste buds are located on papillae, which are the bumps you can see on your tongue. Taste buds are located all over the tongue and some parts of the inside of the cheeks and roof of the mouth. Humans sense five different types of tastes: sweet, salty, sour, bitter, and umami (“savory” or “meaty” taste). Some taste buds respond more intensely to a specific taste and more weakly to others. People differ in their ability to taste food. The more densely packed the taste buds, the more chemicals are absorbed, and the more intensely the food is tasted. You can get an idea of how densely packed taste buds are by looking at the papillae on your tongue. If all the bumps are packed tightly together, you probably taste food intensely. If they are spread apart, you are probably a weak taster. What we think of as the flavor of food is actually a combination of taste and smell.

Figure 4.3. Taste sensors.

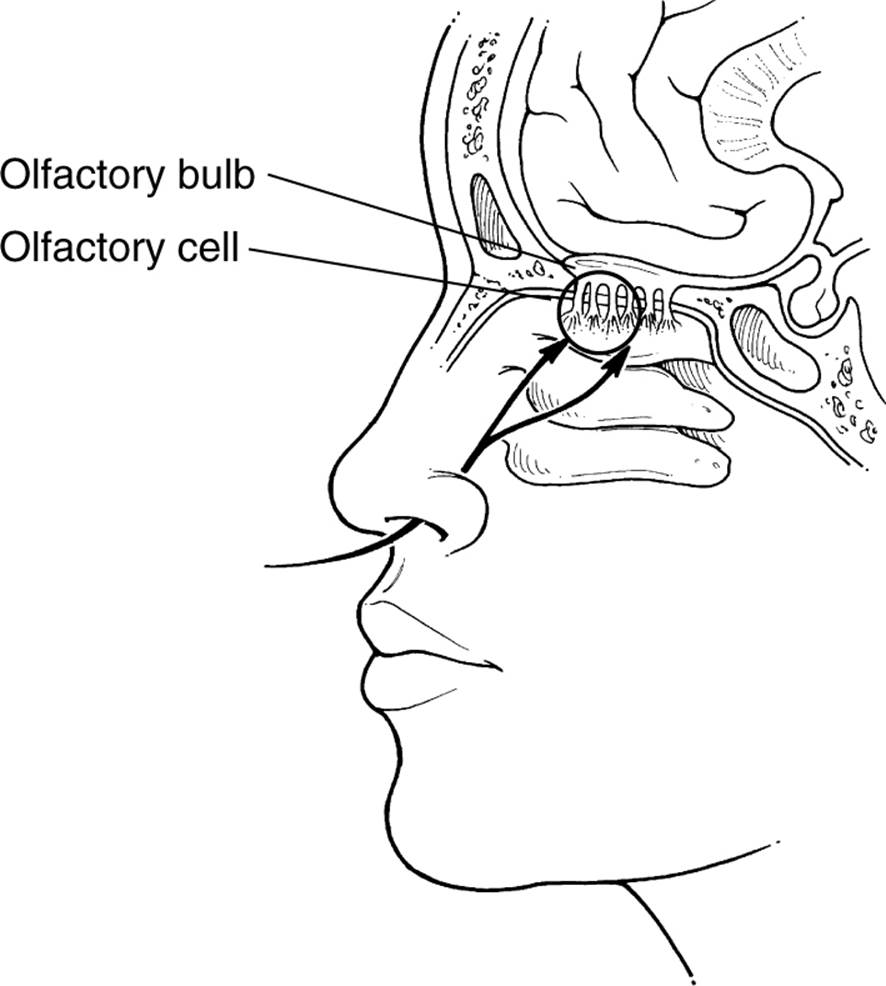

Figure 4.4. Cross section of the olfactory system.

Smell (or Olfaction)

Our sense of smell also depends on chemicals emitted by substances. Molecules of substances, hot chocolate for example, rise into the air. Some of them are drawn into our nose. The molecules settle in a mucous membrane at the top of each nostril and are absorbed by receptor cells located there. The exact types of these receptor cells are not yet known, as they are for taste buds. Some researchers estimate that as many as 100 different types of smell receptors may exist. These receptor cells are linked to the olfactory bulb (see Fig. 4.4), which gathers the messages from the olfactory receptor cells and sends this information to the brain. Interestingly, the nerve fibers from the olfactory bulb connect to the brain at the amygdala and then to the hippocampus, which make up the limbic system, the part of the brain responsible for emotional impulses and memory. The impulses from all the other senses go through the thalamus first before being sent to the appropriate cortices. This direct connection to the limbic system may explain why smell is such a powerful trigger for memories.

BODY POSITION SENSES

Vestibular Sense

Our vestibular sense tells us about how our body is oriented in space. Three semicircular canals in the inner ear (see Fig. 4.2) give the brain feedback about body orientation. The canals are basically tubes partially filled with fluid. When the position of your head changes, the fluid moves in the canals, causing hairlike sensors in the canals to move. The movement of these hair cells activates neurons, and their impulses go to the brain. You have probably experienced the nausea and dizziness caused when the fluid in these canals is agitated. During an exciting roller-coaster ride, the fluid in the canals might move so much that the brain receives confusing signals about body position. This causes the dizziness and nausea.

Kinesthetic Sense

While our vestibular sense keeps track of the overall orientation of our body, our kinesthetic sense gives us feedback about the position and orientation of specific body parts. Receptors in our muscles and joints send information to our brain about our limbs. This information, combined with visual feedback, lets us keep track of our body. You could probably reach down with one finger and touch your kneecap with a high degree of accuracy because your kinesthetic sense provides information about where your finger is in relation to your kneecap.

See the following table for a summary of the senses and their associated receptors.

PERCEPTION

As stated before, perception is the process of understanding and interpreting sensations. Psychophysics is the study of the interaction between the sensations we receive and our experience of them. Researchers who study psychophysics try to uncover the rules our minds use to interpret sensations. We will cover some of the basic principles in psychophysics and examine some basic perceptual rules for vision.

Thresholds

Research shows that while our senses are very acute, they do have their limits. The absolute threshold is the smallest amount of stimulus we can detect. For example, the absolute threshold for vision is the smallest amount of light we can detect, which is estimated to be a single candle flame about 30 miles (48 km) away on a perfectly dark night. Most of us could detect a single drop of perfume a room away. Actually, the technical definition of absolute threshold is the minimal amount of stimulus we can detect 50 percent of the time, because researchers try to take into account individual variation in sensitivity and interference from other sensory sources. Stimuli below our absolute threshold are said to be subliminal. Some companies claim to produce subliminal message media that can change unwanted behavior. Psychological research does not support their claim. In fact, a truly subliminal message would not, by definition, affect behavior at all because if a message is truly subliminal, we do not perceive it! Research indicates some messages called subliminal (because they are so faint we do not report perceiving them) can sometimes affect behavior in subtle ways, such as choosing a word at random from a list after the word was presented subliminally. Evidence does not exist, however, that more complex subliminal messages such as “lose weight” or “increase your vocabulary” are effective. If such media do change behavior, the change most likely comes from the placebo effect rather than from the effect of the subliminal message.
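The 50 percent definition of the absolute threshold can be made concrete with a short sketch. The detection rates below are invented for illustration (they are not from any study), and the function name `absolute_threshold` is hypothetical; the code simply finds the weakest stimulus that was detected on at least half of the trials.

```python
def absolute_threshold(detection_rates):
    """Return the lowest intensity detected on at least 50 percent of trials.

    detection_rates maps a stimulus intensity to the proportion of trials
    on which the stimulus was detected. Returns None if no intensity
    reaches the 50 percent mark.
    """
    for intensity in sorted(detection_rates):
        if detection_rates[intensity] >= 0.5:
            return intensity
    return None


# Hypothetical results: intensity (arbitrary units) -> proportion detected.
rates = {1: 0.05, 2: 0.20, 3: 0.45, 4: 0.60, 5: 0.95}

threshold = absolute_threshold(rates)
print(threshold)  # 4

# Anything weaker than the threshold counts as subliminal in this toy model.
subliminal = [intensity for intensity in rates if intensity < threshold]
print(subliminal)  # [1, 2, 3]
```

Note that intensity 3 was still detected on 45 percent of trials even though it falls below the threshold, which is why stimuli labeled subliminal can sometimes have subtle effects.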

Table 4.1. Senses and Associated Receptors

| Type of sense | Sense | Associated receptors |
| --- | --- | --- |
| Energy Senses | Vision | Rods, cones (in retina) |
| | Hearing | Hair cells connected to the organ of Corti (in cochlea) |
| | Touch | Temperature, pressure, pain nerve endings (in the skin) |
| Chemical Senses | Taste (gustation) | Sweet, sour, salty, bitter, umami taste buds (in papillae on the tongue) |
| | Smell (olfaction) | Smell receptors connected to the olfactory bulb (in the top of the nose) |
| Body Position Senses | Vestibular sense | Hairlike receptors in three semicircular canals (in the inner ear) |
| | Kinesthetic sense | Receptors in muscles and joints |

So if we can see a single candle 30 miles (48 km) away, would we notice if another candle were lit right next to it? In other words, how much does a stimulus need to change before we notice the difference? The difference threshold defines this change. The difference threshold, sometimes called just-noticeable difference, is the smallest amount of change needed in a stimulus before we detect a change. This threshold is computed by Weber’s law, named after psychophysicist Ernst Weber. (Note: Some textbooks refer to this law as the Weber-Fechner law to honor the contributions of psychophysicist Gustav Fechner, 1801–1887.) It states that the change needed is proportional to the original intensity of the stimulus. The more intense the stimulus, the more it will need to change before we notice a difference. You might notice a change if someone adds a small amount of cayenne pepper to a dish that is normally not very spicy, but you would need to add much more hot pepper to five-alarm chili before anyone would notice a difference. Further, Weber discovered that each sense varies according to a constant, but the constants differ between the senses. For example, the constant for hearing is 5 percent. If you listened to a 100-decibel tone, the volume would have to increase to 105 decibels before you noticed that it was any louder. Weber’s constant for vision is 8 percent. So 8 candles would need to be added to 100 candles before it looked any brighter.
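Weber’s law amounts to a simple proportion: the just-noticeable difference equals the Weber constant times the original intensity. The sketch below works through the examples above using the 5 percent and 8 percent constants quoted in the text; the function names are made up for illustration.

```python
# Weber constants quoted in the text, stored as whole percentages.
WEBER_PERCENT = {"hearing": 5, "vision": 8}


def just_noticeable_difference(intensity, sense):
    """Smallest detectable change for a stimulus of the given intensity."""
    return intensity * WEBER_PERCENT[sense] / 100


def is_noticeable(original, changed, sense):
    """True if the change from original to changed reaches the difference threshold."""
    return abs(changed - original) >= just_noticeable_difference(original, sense)


print(just_noticeable_difference(100, "hearing"))  # 5.0
print(is_noticeable(100, 104, "hearing"))          # False
print(is_noticeable(100, 108, "vision"))           # True
print(just_noticeable_difference(1000, "vision"))  # 80.0
```

The last line shows why the law matters: with 1,000 candles burning, 8 more would go unnoticed, and about 80 would be needed instead.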

Perceptual Theories

Psychologists use several theories to describe how we perceive the world.

These perceptual theories are not competing with one another. Each theory describes different examples or parts of perception. Sometimes a single example of the interpretation of sensation needs to be explained using all of the following theories.

SIGNAL DETECTION THEORY

Real-world examples of perception are more complicated than controlled laboratory-perception experiments. After all, how many times do we get the opportunity to stare at a single candle flame 30 miles (48 km) away on a perfectly clear, dark night? Signal detection theory investigates the effects of the distractions and interference we experience while perceiving the world. This area of research tries to predict what we will perceive among competing stimuli. For example, will the surgeon see the tumor on the CAT scan among all the irrelevant shadows and flaws in the picture? Will the quarterback see the one open receiver in the end zone despite the oncoming lineman? Signal detection theory takes into account how motivated we are to detect certain stimuli and what we expect to perceive. These factors together are called response criteria (also called receiver operating characteristics). For example, I will be more likely to smell a freshly baked rhubarb pie if I am hungry and enjoy the taste of rhubarb. By using factors like response criteria, signal detection theory tries to explain and predict the different perceptual mistakes we make. A false positive is when we think we perceive a stimulus that is not there. For example, you may think you see a friend of yours on a crowded street and end up waving at a total stranger. A false negative is not perceiving a stimulus that is present. You may not notice the directions at the top of a test that instruct you not to write on the test form. In some situations, one type of error is much more serious than the other, and this importance can alter perception. In the surgeon example mentioned previously, a false negative (not seeing a tumor that is present) is a more serious mistake than a false positive (suspecting that a tumor is there), although both mistakes are obviously important.
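The two kinds of perceptual mistakes, and the way a response criterion trades one for the other, can be sketched in a few lines. Everything here is a simplified assumption for illustration: each trial is given a made-up "evidence" score, and the observer reports a stimulus whenever that score reaches the criterion. The names `classify` and `run_observer` are hypothetical.

```python
from collections import Counter


def classify(signal_present, reported):
    """Label one trial with its signal detection outcome."""
    if signal_present and reported:
        return "hit"
    if signal_present and not reported:
        return "false negative"
    if not signal_present and reported:
        return "false positive"
    return "correct rejection"


def run_observer(trials, criterion):
    """Count outcomes for an observer who reports a stimulus when evidence >= criterion."""
    return Counter(
        classify(present, evidence >= criterion) for present, evidence in trials
    )


# Hypothetical trials: (is the stimulus really there?, strength of the evidence).
trials = [(True, 0.9), (True, 0.6), (True, 0.4),
          (False, 0.5), (False, 0.3), (False, 0.1)]

cautious = run_observer(trials, criterion=0.7)   # needs strong evidence to say "yes"
liberal = run_observer(trials, criterion=0.35)   # says "yes" on weak evidence

print(cautious["false negative"], cautious["false positive"])  # 2 0
print(liberal["false negative"], liberal["false positive"])    # 0 1
```

In the surgeon example, where a missed tumor is the more serious mistake, the liberal criterion is the safer choice even though it produces a false positive.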

TOP-DOWN PROCESSING

When we use top-down processing, we perceive by filling in gaps in what we sense. For example, try to read the following sentence:

I _ope yo_ _et a 5 on t_ _ A_ e_am.

You should be able to read the sentence as “I hope you get a 5 on the AP exam.” You perceived the blanks as the appropriate letters by using the context of the sentence. Top-down processing occurs when you use your background knowledge to fill in gaps in what you perceive. Our experience creates schemata, mental representations of how we expect the world to be. Our schemata influence how we perceive the world. Schemata can create a perceptual set, which is a predisposition to perceiving something in a certain way. If you have ever seen images in the clouds, you have experienced top-down processing. You use your background knowledge (schemata) to perceive the random shapes of clouds as organized shapes. In the 1970s, some parent groups were very concerned about backmasking: supposed hidden messages musicians recorded backward in their music. These parent groups would play song lyrics backward and hear messages, usually threatening messages. Some groups of parents demanded an investigation about the effects of the backmasking. What was happening? Lyrics played backward are basically random noise. However, if you expect to hear a threatening message in the random noise, you probably will, much like expecting to see an image in the clouds. People who listened to the songs played backward and had schemata of this music as dangerous or evil perceived the threatening messages due to top-down processing.

BOTTOM-UP PROCESSING

Bottom-up processing, also called feature analysis, is the opposite of top-down processing. Instead of using our experience to perceive an object, we use only the features of the object itself to build a complete perception. We start our perception at the bottom with the individual characteristics of the image and put all those characteristics together into our final perception. Bottom-up processing can be hard to imagine because it is such an automatic process. The feature detectors in the visual cortex allow us to perceive basic features of objects, such as horizontal and vertical lines, curves, motion, and so on. Our mind builds the picture from the bottom up using these basic characteristics. We are constantly using both bottom-up and top-down processing as we perceive the world. Top-down processing is faster but more prone to error, while bottom-up processing takes longer but is more accurate.

Principles of Visual Perception

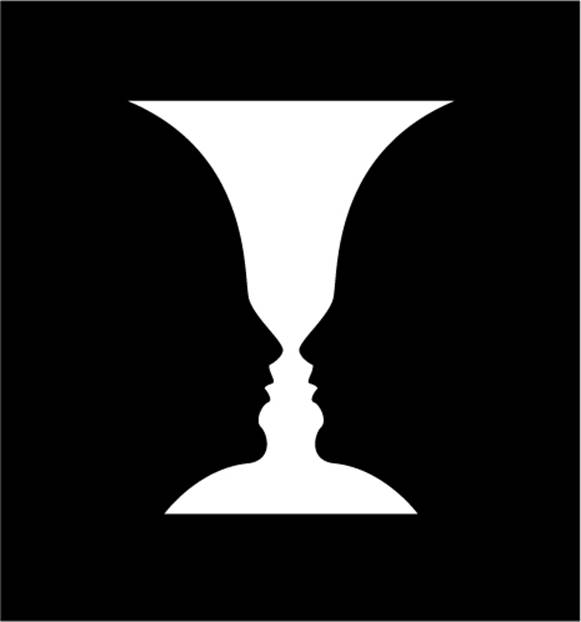

The rules we use for visual perception are too numerous to cover completely in this book. However, some of the basic rules are important to know and understand for the AP Psychology exam. One of the first perceptual decisions our mind must make is the figure-ground relationship. What part of a visual image is the figure and what part is the ground or background? Several optical illusions play with this rule. One example is the famous picture that looks like a vase when viewed one way but, when the figure and the ground are switched, can be perceived as two faces (see Fig. 4.5).

Figure 4.5. Optical illusion.

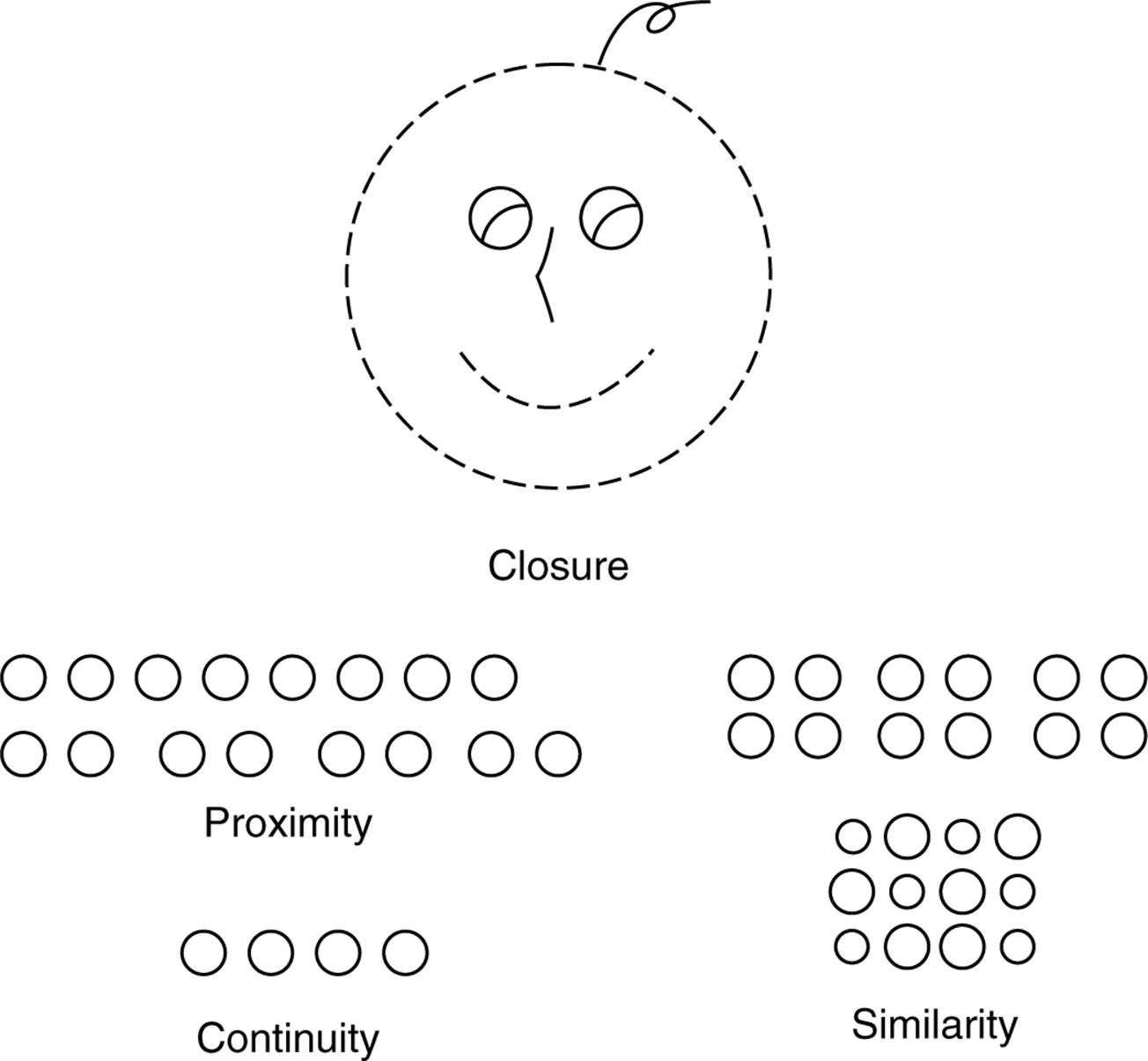

Gestalt Rules

At the beginning of the twentieth century, a group of researchers called the Gestalt psychologists described the principles that govern how we perceive groups of objects. The Gestalt psychologists pointed out that we normally perceive images as groups, not as isolated elements. They thought this process was innate and inevitable. Several factors influence how we will group objects.

| Rule | Description |
| --- | --- |
| Proximity | Objects that are close together are more likely to be perceived as belonging in the same group. |
| Similarity | Objects that are similar in appearance are more likely to be perceived as belonging in the same group. |
| Continuity | Objects that form a continuous form (such as a trail or a geometric figure) are more likely to be perceived as belonging in the same group. |
| Closure | Similar to top-down processing. Objects that make up a recognizable image are more likely to be perceived as belonging in the same group even if the image contains gaps that the mind needs to fill in. |
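
The proximity rule can be illustrated with a small sketch. This is only a toy model, not a description of how the visual system actually groups objects: it assumes dots placed along a single line and a hypothetical `max_gap` threshold, and it puts neighboring dots into the same group whenever the gap between them is small enough.

```python
def group_by_proximity(positions, max_gap):
    """Group 1-D dot positions: neighbors within max_gap share a group.

    Both the one-dimensional layout and the max_gap threshold are
    illustrative assumptions, not values from perception research.
    """
    groups = []
    for p in sorted(positions):
        if groups and p - groups[-1][-1] <= max_gap:
            groups[-1].append(p)   # close to the previous dot: same group
        else:
            groups.append([p])     # large gap: start a new group
    return groups


dots = [0, 1, 2, 10, 11, 12, 20, 21]
print(group_by_proximity(dots, max_gap=2))
# prints [[0, 1, 2], [10, 11, 12], [20, 21]]
```

Eight separate dots are reported as three clusters, which mirrors the Gestalt claim that we tend to see groups rather than isolated elements.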

Constancy

Every object we see changes minutely from moment to moment due to our changing angle of vision, variations in light, and so on. Our ability to maintain a constant perception of an object despite these changes is called constancy. There are several types of constancy.

| Type of constancy | Description |
| --- | --- |
| Size constancy | Objects closer to our eyes will produce bigger images on our retinas, but we take distance into account in our estimations of size. We keep a constant size in mind for an object (if we are familiar with the typical size of the object) and know that it does not grow or shrink in size as it moves closer or farther away. |
| Shape constancy | Objects viewed from different angles will produce different shapes on our retinas, but we know the shape of an object remains constant. For example, the top of a coffee mug viewed from a certain angle will produce an elliptical image on our retinas, but we know the top is circular due to shape constancy. Again, this depends on our familiarity with the usual shape of the object. |
| Brightness constancy | We perceive objects as being a constant color even as the light reflecting off the object changes. For example, we will perceive a brick wall as brick red even as the daylight fades and the actual color reflected from the wall turns gray. |
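
The geometry behind size constancy can be sketched in a few lines. The example below is a simplified illustration, assuming that the size of the retinal image is proportional to the visual angle an object subtends; the function names and the 1.8 m person are hypothetical. It shows that the angle shrinks as distance grows, yet combining the angle with the distance recovers the same physical size each time.

```python
import math


def visual_angle_deg(object_size_m, distance_m):
    """Angle (in degrees) that an object subtends at the eye."""
    return math.degrees(2 * math.atan(object_size_m / (2 * distance_m)))


def inferred_size_m(angle_deg, distance_m):
    """Invert the geometry: physical size implied by an angle and a distance."""
    return 2 * distance_m * math.tan(math.radians(angle_deg) / 2)


person_height_m = 1.8  # hypothetical example value
for distance_m in (2.0, 4.0, 8.0):
    angle = visual_angle_deg(person_height_m, distance_m)
    size = inferred_size_m(angle, distance_m)
    print(f"{distance_m:4.1f} m away: angle {angle:5.1f} deg, inferred size {size:.2f} m")
```

The retinal image changes with every step, but the inferred size does not, which is the pattern of experience that size constancy describes.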

Perceived Motion

Another aspect of perception is our ability to gauge motion. Our brains are able to detect how fast images move across our retinas and to take into account our own movement. Interestingly, in a number of situations, our brains perceive objects to be moving when, in fact, they are not. A common example of this is the stroboscopic effect, used in movies or flip books. Images in a series of still pictures presented at a certain speed will appear to be moving. Another example, which you have probably encountered on movie marquees and with holiday lights, is the phi phenomenon. A series of lightbulbs turned on and off at a particular rate will appear to be one moving light. A third example is the autokinetic effect. If a spot of light is projected steadily onto the same place on a wall of an otherwise dark room and people are asked to stare at it, they will report seeing it move.

Depth Cues

One of the most important and frequently investigated parts of visual perception is depth. Without depth perception, we would perceive the world as a two-dimensional flat surface, unable to differentiate between what is near and what is far. This limitation could obviously be dangerous. Researcher Eleanor Gibson used the visual cliff experiment to determine when human infants can perceive depth. An infant is placed onto one side of a glass-topped table that creates the impression of a cliff. Actually, the glass extends across the entire table, so the infant cannot possibly fall. Gibson found that an infant old enough to crawl will not crawl across the visual cliff, implying the child has depth perception. Other experiments demonstrate that depth perception develops when we are about three months old. Researchers divide the cues that we use to perceive depth into two categories: monocular cues (depth cues that do not depend on having two eyes) and binocular cues (cues that depend on having two eyes).

MONOCULAR CUES

If you have taken a drawing class, you have learned monocular depth cues. Artists use these cues to imply depth in their drawings. One of the most common cues is linear perspective. If you wanted to draw a railroad track that runs away from the viewer off into the distance, most likely you would start by drawing two lines that converge somewhere toward the top of your paper. If you added a drawing of the train, you might use the relative size cue. You would draw the boxcars closer to the viewer as larger than the engine off in the distance. A water tower blocking our view of part of the train would be seen as closer to us due to the interposition cue; objects that block our view of other objects must be closer to us. If the train were running through a desert landscape, you might draw the rocks closest to the viewer in detail, while the landscape off in the distance would not be as detailed. This cue is called texture gradient; we know that we can see details in texture close to us but not far away. Finally, your art teacher might teach you to use shadowing in your picture. By shading part of your picture, you can imply where the light source is and thus imply depth and position of objects.
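
Linear perspective and relative size both follow from the same simple geometry, which can be sketched as follows. The example assumes an idealized pinhole projection, where a point's position in the picture is its real sideways offset divided by its depth; the function names, the focal length of 1.0, and the 0.75 m half-gauge are illustrative assumptions rather than measurements.

```python
def picture_x(lateral_offset_m, depth_m, focal_length=1.0):
    """Horizontal position in the picture of a point at a given depth
    (idealized pinhole projection; focal_length is an arbitrary unit)."""
    return focal_length * lateral_offset_m / depth_m


def rail_gap_in_picture(depth_m, half_gauge_m=0.75):
    """Drawn distance between two parallel rails at a given depth."""
    left = picture_x(-half_gauge_m, depth_m)
    right = picture_x(half_gauge_m, depth_m)
    return right - left


for depth_m in (2, 10, 50, 250):
    print(f"depth {depth_m:3d} m: rails drawn {rail_gap_in_picture(depth_m):.4f} units apart")
```

The rails stay the same real distance apart, but the drawn gap shrinks toward zero as depth increases, so the two lines converge. The same division by depth is why a distant engine is drawn smaller than a nearby boxcar.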

BINOCULAR CUES

Other cues for depth result from our anatomy. We see the world with two eyes set a certain distance apart, and this feature of our anatomy gives us the ability to perceive depth. The finger trick you read about during the discussion of the anatomy of the eye demonstrates the first binocular cue—binocular disparity (also called retinal disparity). Each of our eyes sees any object from a slightly different angle. The brain gets both images. It knows that if the object is far away, the images will be similar, but the closer the object is, the more disparity there will be between the images coming from each eye. The other binocular cue is convergence. As an object gets closer to our face, our eyes must move toward each other to keep focused on the object. The brain receives feedback from the muscles controlling eye movement and knows that the more the eyes converge, the closer the object must be.
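
Both binocular cues can be approximated with basic trigonometry. The sketch below is a simplification: it assumes an object straight ahead of the viewer and an eye separation of about 6.3 cm, and it uses the difference in convergence angle between two objects as a rough stand-in for retinal disparity. The function names are hypothetical.

```python
import math

EYE_SEPARATION_M = 0.063  # assumed typical adult eye separation


def convergence_angle_deg(distance_m, eye_separation_m=EYE_SEPARATION_M):
    """Angle between the two lines of sight when both eyes fixate
    an object straight ahead at the given distance."""
    return math.degrees(2 * math.atan(eye_separation_m / (2 * distance_m)))


def disparity_deg(near_m, far_m):
    """Difference in convergence angle between a nearer and a farther
    object, used here as a simple proxy for retinal disparity."""
    return convergence_angle_deg(near_m) - convergence_angle_deg(far_m)


for distance_m in (0.25, 2.0, 20.0):
    print(f"{distance_m:5.2f} m: eyes converge {convergence_angle_deg(distance_m):6.3f} deg")

# The same 10 cm depth difference, close up and far away
print(f"near pair: {disparity_deg(0.25, 0.35):.3f} deg")
print(f"far pair:  {disparity_deg(10.0, 10.1):.5f} deg")
```

The convergence angle is large for near objects and almost zero for distant ones, and the same depth difference produces far less disparity at a distance. This is consistent with the idea that binocular cues are most informative for objects close to us.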

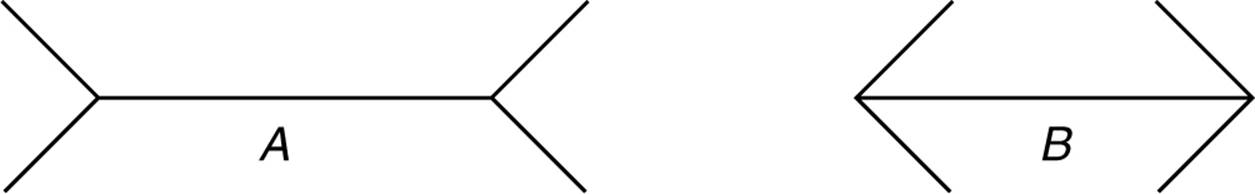

Effects of Culture on Perception

One area that cross-cultural researchers in psychology are investigating is the effect of culture on perception. Research indicates that some of the perceptual rules psychologists once thought were innate are actually learned. For example, cultures that do not use monocular depth cues (such as linear perspective) in their art do not see depth in pictures using these cues. Also, some optical illusions are not perceived the same way by people from different cultures. For example, below is a representation of the famous Müller-Lyer illusion. Which of the following straight lines, A or B, appears longer to you?

Line A should look longer, even though both lines are actually the same length. People who come from noncarpentered cultures, which do not often use right angles and corners in their buildings and architecture, are not usually fooled by the Müller-Lyer illusion. Cross-cultural research demonstrates that some basic perceptual sets are learned from our culture.

Extrasensory Perception

Now that you’ve reviewed the senses and how the brain changes these sensations into perceptions, you can interpret the term extrasensory perception (ESP) in a more specific way than most people can: someone claiming to have “extrasensory perception” is claiming to perceive a sensation “outside” the senses discussed in this chapter. Psychologists are skeptical of ESP claims primarily because our senses are well understood, and researchers do not find reliable evidence that we can perceive sensations other than through our sight, smell, hearing, taste, touch, and vestibular/balance systems. Researchers who test ESP claims using rigorous experiments, such as double-blind studies, find other more likely explanations for supposed extrasensory phenomena. Usually ESP claims are better explained by such things as deception, magic tricks, or coincidence.

PRACTICE QUESTIONS

Directions: Each of the questions or incomplete statements below is followed by five suggested answers or completions. Select the one that is best in each case.

1. Our sense of smell may be a powerful trigger for memories because

(A) we are conditioned from birth to make strong connections between smells and events.

(B) the nerve connecting the olfactory bulb sends impulses directly to the limbic system.

(C) the receptors at the top of each nostril connect with the cortex.

(D) smell is a powerful cue for encoding memories into long-term memory.

(E) strong smells encourage us to process events deeply so they will most likely be remembered.

2. The cochlea is responsible for

(A) protecting the surface of the eye.

(B) transmitting vibrations received by the eardrum to the hammer, anvil, and stirrup.

(C) transforming vibrations into neural signals.

(D) coordinating impulses from the rods and cones in the retina.

(E) sending messages to the brain about orientation of the head and body.

3. In a perception research lab, you are asked to describe the shape of the top of a box as the box is slowly rotated. Which concept are the researchers most likely investigating?

(A) feature detectors in the retina

(B) feature detectors in the occipital lobe

(C) placement of rods and cones in the retina

(D) binocular depth cues

(E) shape constancy

4. The blind spot in our eye results from

(A) the lack of receptors at the spot where the optic nerve connects to the retina.

(B) the shadow the pupil makes on the retina.

(C) competing processing between the visual cortices in the left and right hemisphere.

(D) floating debris in the space between the lens and the retina.

(E) retinal damage from bright light.

5. Smell and taste are called __________ because __________

(A) energy senses; they send impulses to the brain in the form of electric energy.

(B) chemical senses; they detect chemicals in what we taste and smell.

(C) flavor senses; smell and taste combine to create flavor.

(D) chemical senses; they send impulses to the brain in the form of chemicals.

(E) memory senses; they both have powerful connections to memory.

6. What is the principal difference between amplitude and frequency in the context of sound waves?

(A) Amplitude is the tone or timbre of a sound, whereas frequency is the pitch.

(B) Amplitude is detected in the cochlea, whereas frequency is detected in the auditory cortex.

(C) Amplitude is the height of the sound wave, whereas frequency is a measure of how frequently the sound waves pass a given point.

(D) Both measure qualities of sound, but frequency is a more accurate measure since it measures the shapes of the waves rather than the strength of the waves.

(E) Frequency is a measure for light waves, whereas amplitude is a measure for sound waves.

7. Weber’s law determines

(A) absolute threshold.

(B) focal length of the eye.

(C) level of subliminal messages.

(D) amplitude of sound waves.

(E) just-noticeable difference.

8. Gate-control theory refers to

(A) which sensory impulses are transmitted first from each sense.

(B) which pain messages are perceived.

(C) interfering sound waves, causing some waves to be undetected.

(D) the gate at the optic chiasm controlling the destination hemisphere for visual information from each eye.

(E) how our minds choose to use either bottom-up or top-down processing.

9. If you had sight in only one eye, which of the following depth cues could you NOT use?

(A) texture gradient

(B) convergence

(C) linear perspective

(D) interposition

(E) shading

10. Which of the following sentences best describes the relationship between sensation and perception?

(A) Sensation is a strictly mechanical process, whereas perception is a cognitive process.

(B) Perception is an advanced form of sensation.

(C) Sensation happens in the senses, whereas perception happens in the brain.

(D) Sensation is detecting stimuli; perception is interpreting the stimuli detected.

(E) Sensation involves learning and expectations, and perception does not.

11. What function does the retina serve?

(A) The retina contains the visual receptor cells.

(B) The retina focuses light coming in the eye through the lens.

(C) The retina determines how much light is let into the eye.

(D) The retina determines which rods and cones will be activated by incoming light.

(E) The retina connects the two optic nerves and sends impulses to the left and right visual cortices.

12. Color blindness and color afterimages are best explained by what theory of color vision?

(A) trichromatic theory

(B) visible hue theory

(C) opponent-process theory

(D) dichromatic theory

(E) binocular disparity theory

13. You are shown a picture of your grandfather’s face, but the eyes and mouth are blocked out. You still recognize it as a picture of your grandfather. Which type of processing best explains this example of perception?

(A) bottom-up processing

(B) signal detection theory

(C) top-down processing

(D) opponent-process theory

(E) gestalt replacement theory

14. What behavior would be difficult without our vestibular sense?

(A) integrating what we see and hear

(B) writing our name

(C) repeating a list of digits

(D) walking a straight line with our eyes closed

(E) reporting to a researcher the exact position and orientation of our limbs

15. Which of the following sentences best describes the relationship between culture and perception?

(A) Our perceptual rules are inborn and not affected by culture.

(B) Perceptual rules are culturally based, so rules that apply to one culture rarely apply to another.

(C) Most perceptual rules apply in all cultures, but some perceptual rules are learned and vary between cultures.

(D) Slight variations in sensory apparatuses among cultures create slight differences in perception.

(E) The processes involved in perception are genetically based, so genetic differences among cultures affect perception.