The Well-Educated Mind: A Guide to the Classical Education You Never Had (2016)

Part II. Reading: JUMPING INTO THE GREAT CONVERSATION

Chapter 10. The Cosmic Story: Understanding the Earth, the Skies, and Ourselves

SCIENCE BEGAN LONG before the first science text, just as storytelling came before the novel, poetic performances before the written poem. Science, says historian-of-science George Sarton, began as soon as humans “tried to solve the innumerable problems of life.” Mapping out a journey by the skies, balancing a wheel, building an irrigation canal, mixing herbs to relieve pain, designing a pyramid: this was science.1

And it went on for quite a long time before anyone decided to write about it.

Compared with the other genres we’ve investigated, science books have a much more distant relationship to the actual practice of the craft. Made-up stories; stories about the past; stories about ourselves; lyrical outpourings about God, or love, or depression; acting out imagined scenes: all of these existed before novels, histories, autobiographies, poems, and plays took the forms we now know. But all of them had to migrate into written form before they could survive, develop, evolve.

Science is different. Scientific discoveries don’t require the written word. Many of the most essential insights into the natural world (right triangles exist; electrical current can be channeled through a wire; the atoms of elements can be charted onto a periodic table; antibiotics kill bacteria) have not led to books about them. Science is perfectly capable of continuing independent of written narratives.

But side by side with the actual doing of science, a tradition of science writing slowly evolved: starting, as history did, with the Greeks.

A TWENTY-MINUTE HISTORY OF SCIENCE WRITING

The Natural Philosophers

In the fifth century B.C., the physician Hippocrates was struggling with the nature of disease.

He had been trained to practice medicine in a world where the divine suffused everything. Doctors were also priests, and they treated the sick by sending them for a night’s vigil in one of the temples of Aesculapius, god of healing. Perhaps the sacred serpents that lived in the temple would lick the patient’s wounds and miraculously heal them; or maybe the god would send a dream explaining how the illness should be treated; or Aesculapius himself might even appear to carry out the cure.2

In this world, Hippocrates was an outlier.

He did not think that diseases were caused by angry deities, nor that they needed to be cured by a benevolent one. “I do not believe,” he wrote in his treatise about epilepsy, long thought to be a holy affliction sent directly from the gods, “that the ‘Sacred Disease’ is any more divine or sacred than any other disease, but, on the contrary, has specific characteristics and a definite cause . . . It is my opinion that those who first called this disease ‘sacred’ were the sort of people we now call witch-doctors, faith-healers, quacks and charlatans . . . By invoking a divine element they were able to screen their own failure to give suitable treatments.”3

Instead of invoking the gods, Hippocrates looked to the visible world, searching for both “definite causes” and “suitable treatments” in nature itself.

His investigations led him to formulate an entirely secular theory of disease. Four fluids course through the human body, Hippocrates claimed: bile, black bile, phlegm, and blood. When these four fluids (“humors”) exist in their proper proportions, we are healthy. But any number of natural factors might throw the fluids out of whack. Hot winds, for example, cause the body to produce too much phlegm; drinking stagnant water can lead to an overabundance of black bile. The recommended treatment? Restore the body’s balance. Use purges and bleeds to get rid of excess humors; send sick men and women to different climates, away from the winds and waters that are deranging their harmonies.4

The theory was ingenious, convincing, and completely wrong.

It could hardly be otherwise. Hippocrates had no access to the body’s secrets; no way to discern what was really happening inside the skin. Twenty-three centuries later, Albert Einstein and the physicist Leopold Infeld jointly offered an analogy for Hippocrates’s plight. The ancient investigator of the natural world, they wrote, was like

a man trying to understand the mechanism of a closed watch. He sees the face and the moving hands, even hears its ticking, but he has no way of opening the case. If he is ingenious he may form some picture of a mechanism which could be responsible for all the things he observes, but he . . . will never be able to compare his picture with the real mechanism and he cannot even imagine the possibility or the meaning of such a comparison.5

Hippocrates was no more able to peer inside the watch-case than his priest-physician contemporaries. He was not doing science as we would understand it; he was philosophizing about nature, attempting to reason his way into a closed system that he could not observe. But Hippocrates and his followers were at least attempting to find natural factors that would help explain the natural world. So the Hippocratic Corpus—some sixty medical texts, collected by his students and followers, that neither blame nor invoke the gods—is the first written record of a scientific endeavor.

In the centuries after Hippocrates, other Greek philosophers expanded his way of thinking to encompass phusis: not just man, but the whole of the ordered universe.

Their theories were varied. The monists believed that the ordered universe all began with a single underlying element, one sort of stuff (water, or fire, or some still-unknown material); the pluralists were in favor of multiple underlying elements, most often a four-way assembly of earth, air, fire, and water. And the atomists suggested that all of reality was made up of minuscule elements called atomoi, the “indivisibles”—incomprehensibly small particles that clump together to form the “visible and perceptible masses” that make up our world.6

This last theory, as we now know, was within striking distance of the truth. But the atomists, like the monists and pluralists, were still doing philosophy. None of these explanations was susceptible to proof. These early “scientists” were theorizing with no way to check their results; the watch case was still firmly closed.

For the philosopher Aristotle, born about two generations after Hippocrates and on the opposite side of the Aegean Sea, the greatest flaw in all of these speculations was their failure to account for change. Searching for a quality that all natural things shared, Aristotle pinpointed the principle of development. An animal, a plant, fire, water—none of these things remain the same indefinitely. Each, Aristotle wrote in his great work Physics, “has within itself a source of change . . . in respect of either movement or increase and decrease or alteration.” A bed, or a cloak, or a stone building—all created by man’s artifice—have no such “intrinsic impulse for change.”7

Watching a sprout grow into a tree, a cub into a lion, an infant into a man, Aristotle wanted an explanation. How do these changes happen? In what stages does one entity, one being, assume more than one form? What impels the change, and what determines its ending point? And even more, he wanted a reason. Why does a kitten become a cat, a seed a flower? What sends it on the long journey of transformation?

The monists and atomists had no answers for him; nor did the pluralists, although he found their theory of multiple elements more convincing. So he began to work his way toward a new set of explanations. To the pluralist sketch of four elements that combine to make up all natural things, Aristotle added a fifth, an imperishable heavenly substance called aether that carries the stars. He also proposed that each element has particular qualities (air is hot and wet, water is cold and wet) that interact with each other and produce change (for example, the “hot” can overcome the “cold” in water, making water hot and wet, and thus converting water to air). Earth is the heaviest of the elements, and so is drawn toward the center of the universe; fire, the lightest, always tends to fly away from the cosmic core.8

Most important of all, natural things have within themselves a principle of motion: an internal potential for change. Each object and being in the natural world must move from its present state into a future, more perfect one. Built into the very fabric of the seed, the kitten, the infant, is the impulse to develop toward a more fully realized end.

The Physics, widely read in the Greek world, provided a model of the universe that would influence the practice of science for two thousand years. But Aristotle, too, was philosophizing. He could offer no solid proofs of his elements, nor pinpoint the principle of motion within them. And his vision of a driven and purpose-filled world did not go unchallenged.

The atomists were his most vocal opponents, particularly Epicurus, a generation Aristotle’s junior, who argued vehemently that there was no purposeful movement in the universe. There were only randomly moving atoms and “the empty”: the place in which atoms rushed about, collided, and intertwined by chance. The world that we see has come into being only because atoms, spinning through the void, occasionally give an unpredictable hop, a random jump sideways, slam into each other, and fortuitously join up to create new objects.9

Two hundred years after Epicurus’s death, his disciple Lucretius—a Roman educated in Greek philosophy—recast his teachings in a long poem called De Rerum Natura (On the Nature of the Universe or, more literally, On the Nature of Things). The atoms that make up everything, Lucretius writes, are in “ceaseless motion” and vary in size and shape. They created the earth and the human race; there is no design, either natural or supernatural. The soul is not transcendent; like our bodies, it is made up of material particles, of atoms “most minute.” Too tiny to comprehend, they disperse into air when the body dies, and so the soul too ceases to exist.

But the most central truth of atomism, as Lucretius explains in Book II, is that all things come to an end. All natural bodies—sun, moon, sea, our own—age and decay. They do not mature into greater and truer versions of themselves. Rather, they are struck again and again by “hostile atoms” and slowly melt away. And what is true of the physical bodies within the universe is true of the universe itself: “So likewise,” he concludes, “the walls of the great world . . . shall suffer decay and fall into moldering ruins . . . [I]t is vain to expect that the frame of the world will last forever.” Aristotle’s teleology was a delusion. The universe will perish, as surely as our own bodies, and come not to fulfillment, but only to dust.10

Even more firmly than Hippocrates and Aristotle, Lucretius insisted that phusis, the ordered universe, could be explained in purely natural terms: the most central principle of modern science. But like them, he had no way of proving his theories. He could not observe his atoms at work, any more than Hippocrates could view his humors, or Aristotle examine the aether. The “watch case” of nature was still firmly closed; and for the next fifteen hundred years, no one would succeed in popping the lock.

The Observers

In 1491, Nicolaus Copernicus began a new search for the key.

He was eighteen years old, a student of astronomy at the University of Cracow, grappling with his introductory astronomy textbook. The Epitome of the Almagest, a standard handbook for beginners, was an abridgment of a much more complex manual: the Almagest, assembled by the Greek astronomer Ptolemy in the second century. The Almagest assumed that the universe was exactly as Aristotle had described it: spherical, made up of five elements, with the earth sitting at its center. (That was logical enough. The earth is “heavy matter,” constantly drawn toward the center of the universe, and it’s clearly not falling through space: Q.E.D., it must already be at the center.) The stars above the earth, along with the seven independently moving celestial bodies known as the aster planetes (the wandering stars), moved around the earth.

But this movement around the earth was far from simple.

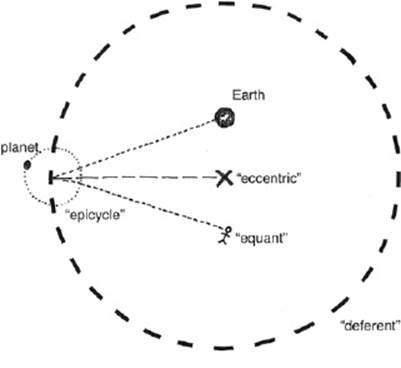

According to the Epitome of the Almagest, each planet came to a regular stop in its orbit (a “station”) and then backtracked for a predictable, calculable distance (“retrogradation”). They also performed additional small loops (“epicycles”) while traveling along the larger circles (“deferents”); and the center of the deferents was not the earth itself, but a point slightly offset from the theoretical core of the universe (the “eccentric”). Furthermore, the speed of planetary movement was measured from yet a third point, an imaginary standing place called the equant. (The equant was self-defining—it was the place from which measurement had to be made in order to make the planet’s path along the deferent proceed at a completely uniform rate.)11

This was a complicated and ugly system—but by measuring from the equant and the eccentric and by building epicycle upon epicycle, students of astronomy could accurately predict the future position of any given star or planet.

In all likelihood, none of them believed that the Epitome of the Almagest provided an actual picture of the universe. Ptolemy himself probably did not think that, should he suddenly be transported into the heavens, he would see Jupiter charge backward into retrograde and then swing around into an epicycle. The mathematical strategies were just that: gimmicks and tricks that yielded the correct results, not realistic sketches.

This was called “saving the phenomena”—proposing geometrical patterns that matched up with observational data. The patterns were reliable enough for the use of navigators and time-keepers, and allowed astronomers to (more or less) accurately chart the heavens. And, since no one had the ability to look into the heavens and see what Jupiter was actually up to, the earth-centered orbits were generally accepted.12

But from his first introduction to the Almagest, Copernicus questioned those elaborate and unwieldy paths. Why, he wondered, should each planet require its own individual set of movements, its own particular laws? It was as if, he later wrote, an artist decided to draw the figure of a man, but gathered “the hands, feet, head and other members for his images from diverse models, each part excellently drawn, but not related to a single body . . . the result would be monster rather than man.”13

The earth-centered universe of the Almagest was, he thought, monstrous: an unwieldy set of awkward mathematical contortions.

Copernicus spent a decade and a half studying the Almagest and making his own records of planetary positions. By 1514, he had formulated a more graceful theory. He wrote it out in a simple and readable form, eliminating all of the mathematics involved, and circulated it to his friends. This informal proposal was known as the Commentariolus.

“I often considered,” it began, “whether there could perhaps be found a more reasonable arrangement of circles.” This more reasonable arrangement began with a simple assumption: “All the spheres revolve about the sun as their mid-point, and therefore the sun is the center of the universe.” The earth was merely the center of the “lunar sphere,” not the entire universe. Furthermore, the earth did not remain motionless; instead, it “performs a complete rotation on its fixed poles in a daily motion,” and it also traveled around the sun like any other planet. These motions of the earth, rather than of the sun and planets, caused the apparent movement of the sun and accounted for what seemed to be retrograde motion in the planetary paths.14

Copernicus spent the next quarter of a century working the Commentariolus up into the full-fledged astronomical manual On the Revolutions of the Heavenly Spheres, complete with mathematical calculations. “The harmony of the whole world teaches us their truth,” Copernicus wrote, “if only—as they say—we would look at the thing with both eyes.” That truth was simple: Only the mobility of the Earth, and the sun’s position at “the centre of the world,” can explain the motion of the stars.15

In other words, the heliocentric model was intended to be a true picture of the universe, not just a mathematical strategy. Unlike the Greek astronomers, Copernicus clearly believed that, should he suddenly be transported into the heavens, he would see the earth tracking faithfully around the sun.

He was moving away from philosophy, toward what we would now think of as a more scientific endeavor: a careful explanation of the physical world based on phenomena, not on a priori assumptions. But it was an incomplete journey. Copernicus had no telescope capable of providing visual confirmation of his model; he was a theoretical eyewitness, not an actual one.

And heliocentrism had its problems. For one thing, Copernicus couldn’t explain why, if the earth was whirling on its axis and sailing around the sun, both of those motions were imperceptible to people standing on its surface. And for another, heliocentrism seemed to contradict the literal interpretation of biblical passages such as Joshua 10:12–13, in which the sun and moon “stand still” rather than continuing to move around the earth.

So when On the Revolutions was first printed in 1543, an unsigned introduction was placed at the front of it, explaining that the heliocentric model was merely another mathematical trick, not a real description. “For these hypotheses,” the introduction explained, “are not put forward to convince anyone that they are true, but merely to provide a reliable basis for computation.”

Copernicus may not even have seen this disclaimer; it is generally thought to have been written by a friend who was overseeing the printing process. Yet it was accepted as genuine by most readers, and for a century, Copernicus’s scheme remained merely one among many.

Its ultimate triumph was due to the work of two men: the English philosopher Francis Bacon, and the Italian astronomer and physicist Galileo.

Bacon, born eighteen years after On the Revolutions was published, was an ambitious politician and an even more ambitious thinker. While he was busy climbing up the ladder of preferment at the English court, he was also planning out his masterwork. This would be a definitive study of human knowledge called the Great Instauration: the Great Establishment, a complete system of philosophy in six volumes that would shape the minds of men and guide them into new truths.

By 1620, he had completed only the first two books, and his time was running out; his position at court had been undermined by his enemies, he was about to be confined in the Tower of London, and he would die of pneumonia in 1626 without returning to his magnum opus. But Novum Organon (“New Tools”), published in 1620, laid the foundations for the modern scientific method.

Novum Organon (a play on the title of Aristotle’s six books on logic, known collectively as the Organon) challenged the reliability of deductive reasoning, the Aristotelian way of thinking generally followed by natural philosophers. Deductive reasoning begins with generally accepted truths, or premises, and works its way toward more specific conclusions:

MAJOR PREMISE: All heavy matter falls toward the center of the universe.

MINOR PREMISE: The earth is made of heavy matter.

MINOR PREMISE: The earth is not falling.

CONCLUSION: The earth must already be at the center of the universe.

Bacon had come to believe that deductive reasoning was a dead end that distorted physical evidence, and made observation secondary to preconceived ideas. “Having first determined the question according to his will,” he complained, “man then resorts to experience, and bending her to conformity . . . leads her about like a captive in a procession.”

Instead, he argued, the careful thinker must reason the other way around: starting from specific observations, and building from them toward general conclusions. This new way of thinking—inductive reasoning—had three steps to it. The “true method,” Bacon explained, “first lights the candle, and then by means of the candle shows the way; commencing as it does with experience duly ordered and digested, not bungling or erratic, and from it deducing axioms, and from established axioms again new experiments.” In other words, the natural philosopher must first come up with an idea about how the world works: “lighting the candle.” Second, he must test the idea against physical reality, against “experience duly ordered”—both observations of the world around him, and carefully designed experiments. These experiments should be carried out with the use of instruments that magnify, intensify, and make clearer the process of nature: “Neither the naked hand nor the understanding left to itself can effect much,” Bacon wrote. “It is by instruments and helps that the work is done.”16

Only then, as a last step, should the natural philosopher “deduce axioms,” or come up with a theory that could be claimed to carry truth.

Hypothesis, experiment, conclusion: Bacon had just traced out the outlines of the scientific method. It was not, of course, fully developed. But the Novum Organon continued to shape the seventeenth-century practice of science. Finally, a method was in place that would allow natural philosophers to “look with both eyes,” as Copernicus had asked, and to come to conclusions based on their observations.

Chief among the “instruments and helps” that made these observations more useful was the telescope: brand new, and under steady improvement even as Bacon was writing. Ten years before the publication of the Novum Organon, the Italian mathematician and astronomer Galileo Galilei had first encountered a telescope on a visit to Venice. This arrangement of convex and concave lenses had been invented the year before by a Low Country spectacle-maker; immediately on returning home, Galileo set to work grinding his own lenses and improving the instrument’s refraction.

The original telescope had been only slightly more useful than the naked eye, but Galileo managed to refine the magnification to around 20X. Through his instrument, he saw mountains and valleys on the moon, and many more stars than were visible to the eye alone. He also saw four objects near Jupiter, never before observed. When Galileo first viewed them, he thought they were fixed stars.

But when he looked at them again on the following day, they had moved.

And they kept moving, in and out of sight, to the left and to the right of Jupiter itself. Over the course of a week, Galileo was able to sketch out their progression and come to the inevitable conclusion: they were moons, and all four “perform[ed] their revolutions about this planet . . . in unequal circles.”

This provided unequivocal proof that not all heavenly bodies revolved around the earth, proof that Galileo published in 1610 in a short work known as The Sidereal Messenger (“The Starry Messenger”). A few months later, he used his telescope to observe the changing phases of Venus, which were inexplicable in the Ptolemaic system and made sense only if Venus were, in fact, traveling around the sun.

These observations did not convince everyone. In fact, the chief philosopher at Padua, an Aristotelian named Cesare Cremonini, simply refused to look through Galileo’s telescope. “To such people,” Galileo wrote bitterly to the astronomer Johannes Kepler, “truth is to be sought, not in the universe or in nature, but (I use their own words) by comparing texts!” In Galileo’s opinion, Aristotle himself would have been happy both to look, and to adjust his physics in response: “We do have in our age new events and observations,” he later remarked, “such that, if Aristotle were now alive, I have no doubt he would change his opinion.”17

An epic battle was shaping up: between ancient authority and present observation, Aristotelian thought and Baconian method, text and eye. Galileo himself had not yet written anything that explicitly supported Copernicus. But his observations in The Sidereal Messenger certainly implied that he accepted heliocentrism, and he had already offered (in an unpublished collection of essays known as De motu) a mathematical explanation for why the earth’s motion through space was imperceptible from its surface.

In 1616, the cardinal Robert Bellarmine (under orders from Pope Paul V) recommended that On the Revolutions be placed on the church’s list of condemned texts. He also warned Galileo, in a private but official meeting, to abandon public agreement with Copernicus. Instead, Galileo spent the next sixteen years tackling the remaining problems with the heliocentric model, one at a time.

In 1632, he put all of his conclusions into a major work: the Dialogue on the Two Chief World Systems, Ptolemaic and Copernican. In order to sidestep Bellarmine’s dictate, Galileo framed the Dialogue as a hypothetical discussion, an argument among three friends as to what might be the best possible model for the universe. Two of his characters, charmingly intelligent and sympathetic, agree that the Copernican theory is superior; the third, a dim-witted character named Simplicius, insists on Aristotle’s earth-centered system.

The first print run of a thousand copies sold out almost immediately. It didn’t take long for churchmen to notice that Galileo was violating Bellarmine’s warning; and in 1633, Galileo, now nearly seventy and unwell, was forced to travel to Rome to defend himself against the Inquisition. Threatened with “greater rigor of procedure,” a code phrase for torture, Galileo agreed to “abandon the false opinion which maintains that the Sun is the center and immovable.” The Dialogue was banned in Italy, and Galileo was sentenced to house arrest. He died in 1642, his condemnation still in place.18

But outside the reach of the Inquisition, the Dialogue continued to circulate: reprinted, read throughout Europe, translated into English in 1661, consulted by astronomers who used ever more powerful telescopes to confirm Galileo’s conclusions.

At the same time, the English scientist Robert Hooke took Bacon’s recommendations in the other direction; instead of using instruments to examine the distant skies, he looked more closely at earthly objects.

Hooke was an excellent mathematician, an expert at grinding and using lenses, the inventor of a barometer, a competent geologist, biologist, meteorologist, architect, and physicist. In 1662, he was appointed to the post of Curator of Experiments for the fledgling Royal Society of London for Improving Natural Knowledge. The Society was a “research club,” a regular gathering of natural philosophers who were committed to the experimental method of science; they were all students of the Novum Organon, and the Royal Society’s dedicatory epistle (written by the poet Abraham Cowley, himself an enthusiastic amateur scientist) was all in praise of Francis Bacon.

From words, which are but pictures of the thought,

Cowley enthused,

Though we our thoughts from them perversely drew

To things, the mind’s right object, he it brought . . .

Who to the life an exact piece would make,

Must not from others’ work a copy take . . .

No, he before his sight must place

The natural and living face;

The real object must command

Each judgment of his eye, and motion of his hand.

This examination of “real objects,” when carried out with instruments and helps, was known as “elaborate,” and such experiments were done in well-equipped “elaboratories”; Hooke himself had worked, as a young man, in the elaboratory of the chemist Robert Boyle. His training there, along with his wide-ranging skills and interests, made him the perfect choice as Curator of Experiments. He was paid a full-time stipend to do two things: to present a variety of weekly experiments to the gathered Society, explaining and demonstrating as he went; and to assist the Fellows with their own experiments, as needed.

This made Robert Hooke (probably) the first full-time salaried scientist in history. The Royal Society was made up of astronomers, geographers, physicians, philosophers, mathematicians, opticians, and even a few chemists, so Hooke was called on to experiment and research across the entire field of natural philosophy. He conducted demonstrations with pendulums, distilled urine, insects placed in pressurized containers, colored and plain glass, and much more.

But, increasingly, his experimental demonstrations involved the microscope.

Microscopes had improved as telescopes had grown more powerful. In 1663, the minutes of the Royal Society note, Hooke demonstrated the microscopic structures of moss, cork, bark, mold, leeches, spiders, and “a curious piece of petrified wood.” This puzzled him greatly, but he suggested perhaps it had “lain in some place where it was well soaked with water . . . well impregnated with stony and earthy particles,” and that the stone and earth had “intruded” into it.19

Hooke had described, for the first time, the process of fossilization. And he had gone beyond observation with instruments to something new: the identification of a physical process which he had not seen (and could not see), but which he was able to deduce.

In 1664, the Royal Society formally requested that Hooke print his micrographical observations. On top of his other competencies, Hooke was a skilled draughtsman and artist. Rather than merely describing his discoveries in words, or commissioning nonscientists to produce his drawings, he made his own: large, exquisitely detailed, and perfectly clear. The resulting work, Micrographia, was published in 1665.

The eye-grabbing pictures attracted the most attention. But even more notable is that, throughout, Hooke uses his newly extended senses to build new theories. After carefully examining the colors and layers of muscovite (“Moscovy-glass”) he goes beyond his observations to suggest nothing less than a theory of how light works: it is, he speculates, a “very short vibrating motion” propagated “through an Homogeneous medium by direct or straight lines.” It was not enough merely to extend the senses by way of instruments; the reason must follow the path laid by these observations, interpret them, and then check itself again.

And again, and again, and again. Hooke and the members of the Royal Society were committed to Baconian thinking, but they were also cautious, reluctant to draw conclusions without exhaustive proof—an attitude that soon drove a wedge between the Society and its newest member, one “Mr. Isaac Newton, professor of mathematics in the university of Cambridge.”

Isaac Newton, twenty-nine years old when he joined the Society in 1672, was a student of the experimental method and an enthusiastic user of artificial helps (he had lately been experimenting with prisms). But when he shared his most recent “philosophical discovery”—that all light is made up of a spectrum of rays, and that “whiteness is nothing but a mixture of all sorts of colours, or that it is produced by all sorts of colours blended together”—the Society greeted him with skepticism. Hooke objected that he could think of at least two other “various hypotheses” that could equally well explain Newton’s results, and the other members of the Society recommended that many more experiments should be made before any universal conclusions were drawn.20

These experiments dragged on for the next three years, with much correspondence flying back and forth between Newton’s Cambridge elaboratory and the Society’s London headquarters. Newton became increasingly frustrated. “It is not number of experiments, but weight, to be regarded,” he complained in 1676, “and where one will do, what need many?” Gradually, he withdrew from participation in the Royal Society, and devoted himself instead to his own research: not only light and optics, but also the orbits of the planets, and the celestial mechanics that might explain them.

In 1687, he published his first major work: Philosophiae Naturalis Principia Mathematica, or “Mathematical Principles of Natural Philosophy.” It was intended to solve the biggest problems that still plagued the heliocentric model. For one thing, calculations based on perfectly circular orbits didn’t match up with the exact position of the planets. Galileo’s friend and colleague Johannes Kepler had proposed laws for elliptical orbits; this yielded much better results, but neither Kepler nor Galileo had been able to explain why the orbits should be elliptical rather than circular.

Newton had a possible solution. Planets circled the sun, he suggested, not because they were mounted on some sphere, but because the sun was exerting a force on them. Planets exerted the same force on moons that surrounded them. This force he called gravitas.

Galileo, like Aristotle, had believed that objects fell because of an inherent quality within them, an intrinsic “weightiness.” Newton argued that objects fell because the earth’s gravitas drew them toward it. But the strength of this force did not remain the same over distance. It changed. As the planets moved further from the sun, the force that pulled on them weakened: thus, the ellipse.

In order to fully explain the laws governing this new force, Newton had to come up with an improved mathematics, capable of accounting for continual small changes. This new math was a “mathematics of change,” able to predict results in a setting where the conditions were constantly shifting, forces altering, factors appearing and receding.21

So the Principia performed two groundbreaking tasks simultaneously. It explained the why behind the ellipses of the planets—and in doing so, revealed for the first time a new force in the universe, the force of gravity. And it introduced an entirely new branch of mathematics, which became known as calculus (from the Latin word for “pebble,” the tiny stones used as arithmetical counters).

None of this was easy going. The Principia is, deliberately, composed of impenetrable mathematical explanations; William Derham, Newton’s longtime friend and colleague, later explained that since Newton “abhorred all Contests,” he “designedly made his Principia abstruse” in order to “avoid being baited by little Smatterers in Mathematicks.” (This drove off quite a few academics as well; a frustrated Cambridge student famously remarked, as Newton passed him on the street, “There goes the man who has writt a book that neither he nor any one else understands.”)22

But at the beginning of Book 3, Newton abandoned his dense formulaic prose in order to write clearly.

The “Rules for the Study of Natural Philosophy” that begin Book 3 are, in a way, his final response to the Royal Society’s unending demands for proof. Newton was aware that the conclusions of the Principia could be dismissed by the literal-minded as “ingenious Romance”—mere guesses. After all, he had not actually spun planets at different distances from the sun to observe the rate of their orbit. Instead, he had taken the results of experiments with weighted objects carried out on earth, and had extrapolated their results into the heavens.23

The Rules explain why Newton’s conclusions about the planetary orbits, while not experimentally proven in the way that would make the Society happy, are nevertheless reliable—as the first three Rules make very clear.

1. Simpler causes are more likely to be true than complex ones.

2. Phenomena of the same kind (e.g., falling stones in Europe and falling stones in America) are likely to have the same causes.

3. If a property can be demonstrated to belong to all bodies on which experiments can be made, it can be assumed to belong to all bodies in the universe.

This is Bacon’s inductive reasoning, always progressing from specifics to generalities, based on observation—but now extended by Newton to breathtaking lengths.

Across, in fact, the entire face of the universe.

The Historians

For nearly two centuries, the universe would remain Newtonian.

His laws always seemed to work, in every place. Gravity functioned in the same way in every corner of the universe. Time passed everywhere at the same rate. The universe was static and infinite, and it went on forever.

But this did not mean that the earth was static and unchanging, or that the living things upon it had always been the same. And Newton’s Rules made it possible for observations about the present to be extrapolated back into educated guesses about the past.

For one thing, how long had the earth been around?

Newton himself speculated that the earth might originally have been a molten sphere. In that case, it would have taken at least 50,000 years for it to cool to its present temperature—although he refused to offer this as an actual theory, since he didn’t feel he had the experimental proof to back it up. His colleague and sometimes competitor, the German mathematician Gottfried Wilhelm von Leibniz, offered a similar speculation—that the earth had once been liquefied, like metal, and had cooled and hardened over time. This had produced large bubbles; some of them calcified into mountains, others shattered and disintegrated, producing valleys.24

Questions about the age of the earth and its past history suddenly became more fraught in 1701, when the Bishop of Worcester, William Lloyd, inserted a creation date of 4004 B.C. into the marginal notes of a new printing of the 1611 Authorized Version of the Bible. This date had first been proposed by the Irish bishop and astronomer James Ussher a half century before; Ussher had combined the study of biblical chronology with his own astronomical observations, and had concluded that the earth could not be more than six thousand years old.

The Authorized Version of the Bible was the most widely read and influential English translation in print. From this point on, proposing an age of more than six thousand years for the earth would carry with it the slur of denying Scripture—and not just in English-speaking countries. In 1749, the French naturalist Georges-Louis Leclerc (usually known by his title, the Comte de Buffon) estimated the age of the earth at 74,832 years, and privately thought that an even longer time frame was probable—perhaps as long as three billion years (not so far off from the contemporary estimate of 4.57 billion). His theories drew the attention of the Faculty of Theology in Paris, which carried on a long and suspicious correspondence with him over his understanding of Genesis. But Buffon dug in his heels, refusing to yield the point.25

He was not alone in his insistence. Despite theological opposition, a growing cadre of scientists was coming to the conclusion that the scientific method and Newton’s Rules, exercised together, yielded a long, long history for the earth. In his 1785 Theory of the Earth, the Scottish-born James Hutton argued that the continents had been formed, over vast amounts of time, by the exact same cycles of erosion and buildup, ebb and flow, that still operate today. And the measurement of those present processes suggested that change happened very, very slowly.

So slowly, in fact, that Hutton could not wrap his head around the amount of time needed. “[T]he production of our present continents must have required a time which is indefinite,” he wrote. “. . . The result, therefore, of this physical inquiry, is that we find no vestige of a beginning, no prospect of an end.” Geological time—what John McPhee would later label “deep time”—was so different from the time of human experience that Hutton could barely even use the measure of years to express it.26

In 1809, the French zoologist Jean-Baptiste de Monet—better known as the Chevalier de Lamarck—suggested that the living creatures on the earth’s surface had a history almost as long. Before Lamarck, most natural historians had treated animals and plants as coming late to the surface of the globe, arriving more or less already in their present forms. But Lamarck’s Zoological Philosophy married the history of life to the history of the globe: As it altered, so did the creatures on its surface. “With regard to living bodies . . . nature has done everything little by little,” he wrote. “[S]he acts everywhere slowly and by successive stages.”27

Unfortunately, Lamarck couldn’t really come up with a defensible theory as to how living creatures altered. The best he could do was to offer a “principle of use and disuse,” which suggested that when the environment changed, living creatures found themselves using some organs more (leading to greater “vigour and size” in those parts) and other organs less (causing them to “deteriorate and ultimately disappear”). This was impossible to demonstrate experimentally and the principle was widely scorned by other scientists: “Something that might amuse the imagination of a poet,” sniffed Lamarck’s contemporary, the naturalist Georges Cuvier.28

But despite Lamarck’s shortcomings, he and his predecessors had managed to establish a firm working principle: Both the earth and the living creatures that occupied it had an unimaginably long history. It was a principle that gave birth to the foundational works of modern biology and geology.

The first among these was written by Georges Cuvier himself. In his twenties, Cuvier had been given the job of organizing and cataloguing the massive collection of fossil bones piled haphazardly in the storage rooms of Paris’s National Museum of Natural History. It seemed clear to Cuvier that some of these fossil skeletons—particularly two that he labeled as “mammoth” and “mastodon”—were not simply variations on present-day animals; they were something else, species that no longer existed.

Eventually, Cuvier identified, in the museum stockpiles, twenty-three species that appeared to be extinct. Trying to figure out why they had disappeared, Cuvier turned to the rock layers in which the fossils had been found. He and his colleague, the mineralogist Alexandre Brongniart, identified six distinct layers in the rock strata around Paris: six different eras in the earth’s past, each with its own population of plants and animals, some now extinct. Before long, Cuvier extrapolated these discoveries into an earth-wide theory. In 1812, he published this theory as the preface, or “Preliminary Discourse,” to his collected papers on fossils (Recherches sur les ossemens fossiles de quadrupèdes, an assembly of all of the different studies he had presented and published since 1804).

The earth, Cuvier argued, had undergone six separate catastrophic changes. Its layers changed suddenly and distinctly, not gradually and by degrees; therefore, it seemed clear that a series of nearly worldwide disasters had wiped out various populations of flora and fauna. “Thus, life on earth has often been disturbed by terrible events,” Cuvier concluded. “These great and terrible events are clearly imprinted everywhere, for the eye that knows how to read.”29

For a time, Cuvier’s catastrophism was the most widely accepted model for the past—until the geologist Charles Lyell proposed a different account.

Catastrophe, Lyell argued, wasn’t necessarily the cause of past phenomena. “It appears premature,” he wrote in the London journal Quarterly Review, “to assume that existing agents could not, in the lapse of ages, produce such effects.” Extraordinary, earth-wrecking disasters could have produced the specimens in Cuvier’s collections. But it was equally possible that the “existing agents” still at work in the world—plain old erosion, the common rise and fall of temperatures, the regular wash of the tides—might be responsible instead.30

Which was Lyell’s distinct preference. He was convinced that catastrophism was a dead end for science. If one-time past events were responsible for the current form of the earth, there was no way that the past could be understood through the exercise of reason. The natural philosopher could always haul in a disastrous flood, or a passing giant comet, or some other event that could never be experimentally reproduced, to explain the planet.

Instead, Lyell argued, every force that has operated in the past can be observed, still acting, with the same intensity, in the present: a principle now known as uniformitarianism. The title of his 1830 natural history made this commitment perfectly clear: Principles of Geology, Being an Attempt to Explain the Former Changes of the Earth’s Surface, by Reference to Causes Now in Operation.

Uniformitarianism made catastrophes unfashionable, global floods and divine intervention unnecessary. Uniformitarianism also made the unimaginably long time frame first proposed by Hutton completely necessary. “Existing agents” such as tides and erosion could have shaped the world into its present form, but it would take them a really, really long time.

The year after the Principles of Geology was published, a young Charles Darwin put it into his luggage before setting off on the HMS Beagle for what would become a five-year journey of exploration: from Plymouth Sound to the South American coast, then to the Galápagos Islands, Tahiti, and Australia, circling the globe before returning home. “[Lyell’s] book was of the highest service to me in many ways,” he later wrote. He was struggling with the problem of species (where did they come from? what accounted for the differences between them?) and he found Lyell’s long-and-slow philosophy of change entirely convincing. “Natura non facit saltum,” Darwin concluded: Nature does not make sudden jumps. Whatever mechanism had produced the differences between species, it had taken a very long time to work.

He also read Lamarck, but disagreed vigorously with the principle of use and disuse. “It is absurd!” he scribbled in the margin of the Zoological Philosophy. Instead, Darwin found the key to the species question in Thomas Malthus’s bestselling An Essay on the Principle of Population, first published in 1798. The future of the human race, Malthus argued, was shaped by two factors: Humanity has an innate drive to reproduce, which means that the population constantly increases. But because the food supply does not increase as rapidly as the population, a large percentage of those born will always die of starvation.

“It at once struck me,” Darwin later wrote, “that under these circumstances favourable variations would tend to be preserved and unfavourable ones to be destroyed. The result of this would be the formation of new species.” He had found, he believed, the key to the species problem; but he drafted and redrafted his thoughts for over a decade before finally publishing On the Origin of Species by Means of Natural Selection, or the Preservation of Favoured Races in the Struggle for Life in 1859.31

The book laid out a series of arguments in support of Darwin’s main conclusion: Life, like the earth itself, is changing constantly, and natural causes alone account for that change. And different species of animals have not always existed; new species appear when previous species develop variations, and those variations prove helpful in the fight for survival. In 1864, the well-known biologist and philosopher Herbert Spencer used the phrase “survival of the fittest” to describe Darwin’s theory; although it did not appear in the first edition of The Origin of Species, Darwin adopted it in later editions, and the phrase soon became inextricably entwined with his own work.

A major stumbling block remained. Although Charles Darwin was quite sure that variations were passed from parent to child, he had no idea how this worked.

“The laws governing inheritance are quite unknown,” he lamented, in the first chapter of The Origin of Species. “No one can say why a peculiarity . . . is sometimes inherited and sometimes not so.” Nine years after Origin of Species was first published, he suggested that inheritance could be explained through the existence of “minute particles” called gemmules, which are thrown off by every part of an organism, accumulate in the sex organs, and are then passed on to offspring. The strongest argument for this theory was simply that he couldn’t think of anything better. “It is a very rash and crude hypothesis,” he wrote to his friend T. H. Huxley, “yet it has been a considerable relief to my mind, and I can hang on it a good many groups of facts.”32

He never came up with a better explanation, although the key to the truth was literally under his own roof.

At Darwin’s death in 1882, his library contained unopened copies of a short paper in German by the Austrian botanist (and Augustinian friar) Gregor Mendel, describing Mendel’s nine-year experiments with garden peas. Interbreeding thirty-four different varieties, Mendel had discovered a series of laws that seemed to govern how their characteristics (shape and color of the seeds and pods, position of flowers, length of stem) were passed on.

Clearly, the characteristics were carried from parent pea to offspring pea by the egg and pollen cells, so (Mendel proposed) those cells must contain discrete units, or elements, with each element carrying a particular characteristic within it. The proper manipulation of those elements could change the characteristics of the next generation—and, Mendel speculated, might be able to eventually mutate one species into another.33

Mendel wasn’t able to identify exactly what the elements of heredity were, or where in the cell they might be. But a series of biological experiments to pinpoint them was already underway.

The German biologist Ernst Haeckel, a generation younger than Darwin (and originator of the catchy phrase “ontogeny recapitulates phylogeny”)34 proposed that inheritance might be controlled by something in the nucleus of a cell. He didn’t have the equipment to prove it, but in the early 1880s, Haeckel’s countryman Walther Flemming made use of much-improved microscopic lenses and better staining techniques to observe minuscule, thread-like structures in cells that had begun to divide (mitosis). His colleague Wilhelm Waldeyer suggested that these should be named chromosomes, a name which simply described their ability to soak up dye (chrom, color; soma, body).

In 1902, the German biologist Theodor Boveri discovered that sea urchin embryos need exactly 36 chromosomes to develop normally—which strongly suggested that each chromosome carried a unique and necessary piece of information from parent to child. Simultaneously, an American graduate student named Walter Sutton realized from his experiments with grasshoppers that chromosomes carry the “physical basis of a certain definite set of qualities.” The Danish botanist Wilhelm Johannsen gave this unit of heredity, the carrier of information from one generation to the next, its name: the gene. This was Darwin’s missing puzzle piece, the mechanism that transformed organic life from one form into another.35

A decade and a half later, a German astronomer named Alfred Wegener stumbled across the other major missing mechanism: the one that had transformed the inorganic surface of the globe.

“Anyone who compares, on a globe, the opposite coasts of South America and Africa,” Wegener wrote, in his 1915 book The Origin of Continents and Oceans, “cannot fail to be struck by the similar configuration of the two coast lines.” The jigsaw match suggested to him that the continents had once been a single mass, a giant supercontinent that he labeled Pangea; long, long ago, Pangea had broken up and drifted apart. This required him to provide an explanation for how solid earth could “drift.” So he proposed that the earth was not actually solid. Instead, it consisted of a liquid core, surrounded by a series of shells that decreased in density as they got closer to the surface.36

It was a simple, elegant explanation, and accounted for almost all the factors that puzzled geologists: odd similarities between fossils found in far distant places, the apparent interlocking fit of the continental coastlines, the origin of mountains (which, according to Wegener, sprang up where the drifting pieces collided and overlapped). The problem was the absolute absence of any physical evidence. Wegener could not demonstrate the existence of a liquid core; nor could he supply a reason why Pangea didn’t simply remain a single supercontinent.

But Wegener believed that the explanatory power of his theory trumped his lack of explicit proof. He argued that, after all, the earth “supplies no direct information” about any part of its history: “We are like a judge confronted by a defendant who declines to answer,” he wrote, “and we must determine the truth from the circumstantial evidence. . . . The theory offers solutions for . . . many apparently insoluble problems.”37

Thirteen years after the original publication of The Origin of Continents and Oceans, the naval astronomers F. B. Littell and J. C. Hammond compared the longitudes of Washington and Paris in 1913 and in 1927. Their readings revealed that the distance between the two cities had increased by 4.35 meters—a creep of 0.32 meters per year.

Given that Paris is some six thousand kilometers from Washington, it would have taken over 18 million years for the two cities to move that far apart. But the drift was measurable, beyond a doubt. The continents were indeed drifting—and had been doing so for a very long time. They, like the living creatures on them, had a history; and the basic time line of that history had now been put into place for both.
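The arithmetic in these two paragraphs can be checked with a few lines of Python. This is only a rough sketch. The figures come from the text, but treating the gap between readings as exactly fourteen years (1913 to 1927) is an assumption, and the variable names are illustrative.

```python
# Rough check of the continental-drift arithmetic described in the text.
# Figures are taken from the text; treating the 1913-1927 gap as exactly
# fourteen years is an assumption made for this sketch.

widening_m = 4.35            # increase in the Washington-Paris distance, metres
years_between = 1927 - 1913  # fourteen years between the two sets of readings
quoted_rate = 0.32           # metres per year, as quoted in the text
distance_km = 6_000          # approximate Washington-Paris distance

# Rate implied by the raw numbers (about 0.31 m per year).
measured_rate = widening_m / years_between

# Years needed to open a 6,000 km gap at the quoted rate.
years_to_separate = distance_km * 1_000 / quoted_rate

print(f"rate from the raw numbers: {measured_rate:.2f} m/yr")
print(f"time to open a {distance_km:,} km gap: {years_to_separate / 1e6:.2f} million years")
```

Dividing 4.35 meters by fourteen years gives about 0.31 meters per year, close to the quoted 0.32. At the quoted rate, opening a six-thousand-kilometer gap takes roughly 18.75 million years, which is where the figure of "over 18 million years" comes from.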

The Physicists

While historians of life were working out a narrative for the past, physicists were puzzling out the present—and discovering that time, space, and matter itself were not nearly as straightforward as Newton, Bacon, and their heirs had once thought.

Ten years before the publication of The Origin of Continents and Oceans, the patent examiner and physicist Albert Einstein had completed five papers in a single year, all dealing with problems in electricity, magnetism, and related issues of space, time, and motion. One of the papers proposed that mass and energy are equivalent, a relationship that could be expressed as

E = mc²

which became the most familiar formula of the twentieth century.

But Einstein thought another of the papers, “On the Electrodynamics of Moving Bodies,” even more important. It was, he told a friend, an out-and-out “modification of the theory of space and time”: his first exploration of what would later be known as the special theory of relativity.

The paper set out to reconcile two apparently contradictory principles of physics. The first concerned the speed of light. Since the early 1880s, physicists had agreed that light traveling through a vacuum always has the exact same velocity (“c = 300000 km/sec.”).

The second was the principle of relativity, a cornerstone of the Newtonian universe, which decrees that a law of physics must work in the same way across all related frames of reference.

Imagine, Einstein later wrote, that a railway car is traveling along next to an embankment at a regular rate of speed. At the same time, a raven is flying through the air, also in a straight line relative to the embankment, and also at a steady rate of speed. An observer standing on the embankment sees the raven flying at a certain rate of speed. An observer standing on the moving railway car sees the raven flying at a different rate of speed. But although the speed changes, relative to the observer, both watchers still see the raven flying at a constant rate of speed, and in a straight line. The principle of relativity dictates that the raven cannot suddenly appear to be accelerating, or traveling in zigzags.

Now, imagine that a vacuum exists above the railway tracks, and that a ray of light travels through it, in the same direction as the raven. The principle of relativity says that light too will travel at a constant rate, and in a straight line. But it also implies that an observer on the embankment and an observer on the railway car will see the light traveling at two different speeds—which means that the speed of light is not constant.
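Putting numbers on the thought experiment may show why the two principles collide. The sketch below applies the everyday rule of subtracting the car's speed from the speed measured on the embankment. The speeds for the car and the raven are invented for illustration; only the figure for light comes from the text.

```python
# Classical ("Galilean") rule for combining speeds, applied the way common
# sense applies it to the railway-car thought experiment. The car and raven
# speeds are hypothetical, chosen only to keep the arithmetic simple.

C_M_PER_S = 300_000_000  # speed of light: 300,000 km/sec, as quoted in the text


def speed_seen_from_car(speed_vs_embankment: int, car_speed: int) -> int:
    """Speed of something moving in the same direction as the car, as measured
    by a passenger in the car, under the classical subtraction rule."""
    return speed_vs_embankment - car_speed


car = 20    # hypothetical railway-car speed, m/s
raven = 25  # hypothetical raven speed relative to the embankment, m/s

raven_from_car = speed_seen_from_car(raven, car)      # a different speed, but still steady
light_from_car = speed_seen_from_car(C_M_PER_S, car)  # light appears to "slow down"

print(f"raven: {raven} m/s from the embankment, {raven_from_car} m/s from the car")
print(f"light: {C_M_PER_S:,} m/s from the embankment, {light_from_car:,} m/s from the car")
```

Under this rule the raven's speed simply differs from one observer to the other, which is harmless. The same subtraction, however, makes light appear slower to the passenger than to the person on the embankment. That contradicts the principle that light in a vacuum always travels at the same speed. Einstein kept both principles by giving up the assumption that both observers measure time in the same way.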

Most physicists dealt with this problem by abandoning the principle of relativity. But Einstein argued that neither law needed to be given up—as long as we are willing to adjust our ideas about time and space.38

Both observers measure the speed of light in kilometers per second; perhaps, Einstein suggested, what was changing was not the number of kilometers, but the second itself. Time itself was slowing down as the observer moved faster. For the observer who was moving, a second was actually . . . longer. Time was not, as had always been thought, a constant.

Instead, Einstein concluded, time was a fourth dimension that we move through—a dimension that changes as we travel in it. The “special theory of relativity” had redefined the nature of time.

In 1916, Einstein redefined space as well.

Building on the work of the nineteenth-century mathematician Bernhard Riemann, Einstein proposed that space is just as relative to the observer as time (the “general theory of relativity”). The presence of massive objects, Einstein argued, actually bends space. Since we (the observers) are within space, we cannot see the curves—but objects traveling through space are affected by the bends.

This theory could be checked against effects caused by the sun, the most massive object nearby. If Einstein was correct, light from stars, traveling through space, would move along curved space as it neared the sun. The starlight would then appear to be “pulled” toward the mass of the sun; starlight would be, observably, bent by the sun’s mass.

This could only be observed during a total solar eclipse, and it was another three years before the British astronomer Arthur Eddington was able to take the necessary measurements. His calculations, made during a solar eclipse in 1919, showed that the starlight passing by the sun had shifted, to the exact degree that Einstein had foreseen.

In Relativity: The Special and General Theory, Einstein laid out his conclusions about time and space for general readers. Neither, it turned out, was what it seemed. Baconian observation had its limits; common sense could lead the observer astray.

Meanwhile, a small handful of Einstein’s colleagues were doing equally revolutionary work on a much smaller scale: on the atom itself. By the end of the nineteenth century, physicists had come to believe that atoms—Lucretius’s “indivisible” particles—were, in fact, made up of smaller particles carrying a negative electrical charge; these were labeled electrons by the Irish physicists George Stoney and George Fitzgerald. Early in the twentieth century, the young German physicist Hans Geiger and his elder colleague Ernest Rutherford theorized that these electrons were orbiting a central mass, a “nucleus.” It was an elegant, intuitive model; electrons spun around the nucleus like planets around the sun, the smallest particles in the universe mirroring the heavens.39

But the orbits of those electrons posed a problem.

The “Rutherford model” imagined electrons to be something like satellites circling the earth. If a satellite orbiting the earth lost some of its energy, it would spiral down and crash. But when an atom emitted energy (as, for example, hydrogen atoms did, giving off light particles that some physicists had labeled photons), it remained stable. The orbits of the electrons did not seem to decay.

In 1913, the Danish physicist Niels Bohr proposed a solution. Electrons, he suggested, don’t orbit in continuous smooth circles, like planets or satellites. Instead, they jump from discrete spot to discrete spot. When a hydrogen atom emits a photon, the electron loses energy, but it doesn’t spiral down; it “leaps” to a lower orbital path, one which is stable but takes less energy to maintain.

These jumps were known as quantum jumps. A few years earlier, the physicist Max Planck had discovered that he could only predict the behavior of certain kinds of radiation if he treated energy, not as a wave (radiating out smoothly and evenly, as was the accepted model) but as a series of chunks: separate particles, pulsing out at intervals. Planck called these hypothetical energy particles “quanta,” and he wasn’t happy with them. They were, he told a friend, a “formal assumption,” a mathematical hat trick, a way of “saving the phenomenon.” “What I did can be described as simply an act of desperation,” he explained. “It was clear to me that classical physics could offer no solution to this problem . . . [so] I was ready to sacrifice every one of my previous convictions about physical laws.”40

But then Einstein himself found that treating light as if it were made up of quanta, rather than waves, helped explain some previously perplexing properties. And now, Bohr had solved an atomic-level problem by proposing that an electron’s path was quantized. Quantum theory, announced Max Planck in his Nobel Prize Address of 1920—a clear and interesting summary of the field’s development—had the potential “to transform completely our physical concepts” of the universe.41

Yet its implications grew steadily odder. For example: in the new “Bohr-Rutherford model” of the atom, an electron performed a “quantum leap” between orbits, rather than gliding smoothly through continuous space. This implied that, while making the leap, the electron was . . . nowhere.

It was also impossible to predict, with certainty, where the electron would reappear at the end of its jump. The best physicists could do was predict its probable place of reappearance. The theoretical physicist Werner Heisenberg, who worked extensively on this problem, pointed out (reasonably enough) that the uncertainty is infinitesimal once physics moves into the realm of objects larger than a molecule; an electron orbiting the nucleus of a hydrogen atom might make an unexpected leap, but a goat grazing on a hillside isn’t going anywhere unpredictable at all.

But other scientists found it maddening to be pushed into the realm of probabilities, rather than measurable certainties. “If we are going to have to put up with these damn quantum jumps,” the Austrian physicist Erwin Schrödinger complained to Niels Bohr, “I am sorry that I ever had anything to do with quantum theory.” Even Einstein, who had a high capacity for startling new ideas, objected that quantum theory was “spookish.” (“I cannot seriously believe in it,” he wrote to his friend Max Born, not long before Born won the Nobel Prize for his work in quantum mechanics.)42

Yet quantum theory continued to solve problems, despite the massive disturbance it had caused in the world of physics.

The Synthesists

Meanwhile, Darwinian evolution had begun to lose its grip on the scientific imagination.

Since Darwin had created the grand narrative of evolution, individual researchers in widely separated fields had been slotting new details into place: the existence of chromosomes, the laws of heredity, the presence of deoxyribonucleic acid (DNA) within the nucleus of cells. Better instruments, more data, and improved research techniques were yielding discoveries thick and fast, many of them (in new fields of study: cytology, biometry, embryology, genetics) filling in the empty fretwork of Darwin’s overarching structure.

But these studies were clogged with technical language, published in narrowly focused professional journals with tiny specialist audiences. There was, in Ernst Mayr’s words, “an extraordinary communication gap” between the sciences. Genetics had nothing to do with anthropology, or paleontology with biochemistry. Each researcher, viewing his (rarely her) own brick in the wall, had lost sight of the whole building. “The theory of evolution,” concluded the director of the National Museum of Natural History in Paris, in 1937, “will very soon be abandoned.”43

Yet individual discoveries in the life sciences were confirming, again and again, that natural selection did explain the present form of organic life. A defense of Darwin was needed: a defense which would connect the highly meaningful dots, explaining the ways in which the grand theory and specific discoveries acted together.

In 1937, the Russian entomologist Theodosius Dobzhansky published the first attempt to do just that: Genetics and the Origin of Species. The book was a synthesis of his laboratory experiments in genetics, his field observations on fruit fly inheritance, and his work in the mathematical field of population genetics. In the next decade, a handful of well-regarded biologists followed his lead. George Gaylord Simpson’s Tempo and Mode in Evolution, Bernhard Rensch’s Evolution above the Species Level, and Ernst Mayr’s Systematics and the Origin of Species, from the Viewpoint of a Zoologist all made the same argument: Darwinian natural selection did, indeed, account for the existence of species.

In 1942, yet another work on the topic appeared: Evolution: The Modern Synthesis, by the English biologist Julian Huxley (grandson, as it happened, of one of Darwin’s most ardent contemporary supporters, Thomas Huxley). Julian Huxley was not only a well-regarded biologist, but a skilled popular writer; a decade before, he had collaborated with the novelist H. G. Wells on a best-selling popular history of biology.

Evolution: The Modern Synthesis was a sprawling, multifaceted book. It covered, in turn, paleontology, genetics, geographical differentiation, ecology, taxonomy, and adaptation—but clearly, readably, without jargon. It was an instant success: “The outstanding evolutionary treatise of the decade, perhaps of the century,” exclaimed one of the most important journals of the field. From 1942 on, the ongoing attempt to connect specialized laboratory discoveries with the larger world of natural history, all in support of the Darwinian scheme, would take its name from Huxley’s book: the modern synthesis.44

Two years later, Erwin Schrödinger—still struggling with those damn quantum jumps—published another kind of synthesis. What Is Life? dealt with the overlap between quantum physics and biology, the common ground between the study of ourselves and the study of the cosmos. Using quantum theory to account for the behavior of orbiting electrons, Schrödinger showed how this behavior affected the formation of chemical bonds, and how those chemical bonds then affected cell behavior, genetics, evolutionary biology itself.

The success of What Is Life? as a synthesis can be measured by the number of physicists who were inspired, after reading it, to migrate over into biological research. “No doubt molecular biology would have developed without What Is Life?” writes Schrödinger’s biographer, Walter Moore, “but it would have been at a slower pace, and without some of its brightest stars. There is no other instance in the history of science in which a short semipopular book catalyzed the future development of a great field of research.”45

Semipopular: the word is a signpost, pointing out a shift in scientific writing.

What Is Life? was, first and foremost, written for other scientists. A biologist had once been able to glance over an entire kingdom. Now, it was a full-time job to keep up with discoveries in a single subspecies: epigenetics, population genetics, genomics, phytochemistry, phylogenetics, and many more. The study of physics—the behavior of the universe—had increasingly focused itself on smaller and smaller segments of the cosmos, each requiring more and more specialized instrumentation: optics, photonics, particle physics, radio astronomy, quantum chemistry. New theories were written up for academic journals with very narrow audiences. The articles made use of technical vocabulary and arcane mathematical notation, inaccessible to nonspecialists—and even more so to the general public.

As discoveries multiplied, audiences shrank. Yet translating those discoveries for the wider reading public—the interested, intelligent layperson—turned out to be a fraught activity.

The Popularizers

A faint line had already been traced between professional and popular science writing.

In 1894, Julian Huxley’s grandfather had complained about the unwillingness of scientists to write plainly for the lay reader, for fear of lowering their prestige in their own fields: “[They] keep their fame as scientific hierophants,” T. H. Huxley grumbled, “unsullied by attempts—at least of the successful sort—to be understood of the people.” As the twentieth century wore on, the line between popularizers and academic scientists darkened. Best-selling books on science were widely scorned by professional researchers, and to be labeled a “mere popularizer” was death to an academic career.46

Simultaneously, the public thirst for science was growing greater and greater. The first daily science feature to run in a newspaper (“What’s What with Science,” by journalist Watson Davis) appeared in the Washington Herald in the 1920s; in the 1930s, the National Association of Science Writers (journalists, not professors) took shape. The end of World War II whetted interest in atomic science, and the startling launch of Sputnik by the Soviet Union in 1957 sparked a general demand for information about space.

Yet scientists were slow to feed this public appetite. “For better or worse, whether scientists like it or not,” mourned the Bulletin of the Atomic Scientists in 1963, “the public today gets its image of science, its information about science, and its understanding of scientific concepts largely from these nonscientists, the science writers.” Why not join the ranks of science writers themselves? Because most scientists believed themselves to be objective, unbiased, clear-sighted hunters of truth. The “science writer,” on the other hand, “works in the world of journalism and is subject to its pressures, its traditions and conventions, and its biases.”47

Given this deepening hostility toward “popular” science, it is hardly surprising that the next influential science book to hit the shelves was written by a (female) outsider: Rachel Carson, a talented biologist who ran out of money after completing her M.A. in 1932, and was never able to complete her Ph.D. or gain an academic position. Instead, she wrote about science: first, for the Baltimore Sun, and then for the U.S. Fish and Wildlife Service. Her second book, 1951’s The Sea Around Us, was a best seller and National Book Award winner. But sales of Silent Spring, her fourth book, left it in the dust.

“There are very few books that can be said to have changed the course of history,” writes Carson’s biographer, Linda Lear, “but this was one of them.” Silent Spring began with a dreadful warning: “For the first time in the history of the world, every human being is now subjected to contact with dangerous chemicals, from the moment of conception until death.” The book went on to attack Western governments, the chemical industry, and the farming industry for the indiscriminate use of pesticides.

Silent Spring was not only a massive work of synthesis (between chemistry and biology, laboratory science and public policy, academic research and citizen activism, the study of man and the study of man’s entire world) but popular science at its best: well-informed and dramatic, a gripping blend of statistic and story, affecting every human being. Carson had demonstrated just how powerful popular science could be; and in the next two decades, an unprecedented raft of academic scientists defected to the popular fold.48

Life scientists led the pack. In 1967, the zoologist Desmond Morris teased out the full implications of Darwinian evolution for human behavior in The Naked Ape, an interpretation of man’s cultural behavior through the lens of biology: one of the first works of sociobiology. The following year, James Watson published an account of his work with Francis Crick on DNA. That odd little substance in the nucleus of the cell had been identified as the carrier of genetic information from one generation to the next, and in 1953, Crick and Watson together had proposed a double helix structure for DNA that made sense of the mechanism. Their model, which would not actually be observed for some decades, was chemically sound, tested worldwide, and soon accepted by biologists everywhere. Watson’s 1968 bestseller, The Double Helix: A Personal Account of the Discovery of the Structure of DNA, mixed science with memoir, and made DNA a household word.

In 1976, Oxford biologist Richard Dawkins took the story of DNA further in The Selfish Gene, which offered a comprehensive explanation for all organic life, including ours. “Intelligent life on a planet comes of age when it first works out the reason for its own existence,” Dawkins begins, and the reason he has worked out is a simple one: we eat, sleep, have sex, think, write, build space vehicles and war machines, sacrifice ourselves or others, all in order to preserve our DNA. Natural selection happens at the most basic level, the molecular; our bodies have evolved to do nothing more than protect and propagate our genes, which are ruthlessly selfish molecules working to ensure their own survival.49

This was not a comforting view of human nature, but popular science was proving a perfect vehicle for scientists to draw the sort of sweeping conclusions (about human existence, all of culture, the cosmos itself) that scientific papers and journal articles rarely contained.

In 1977, Steven Weinberg’s smash hit, The First Three Minutes, leapt directly from physics to metaphysics. Weinberg explained the so-called “Big Bang,” the expansion of the entire universe from an original super-dense point known as a singularity, and then went further:

It is almost irresistible for humans to believe that we have some special relation to the universe . . . that human life is not just a more-or-less farcical outcome of a chain of accidents reaching back to the first three minutes. . . . The more the universe seems comprehensible, the more it also seems pointless.

That conclusion (one that certainly reaches beyond the Baconian project) leads him to an even broader statement about the purpose of human existence. “If there is no solace in the fruits of our research,” Weinberg concludes, at the very end of the book, “there is at least some consolation in the research itself. . . . The effort to understand the universe is one of the very few things that lifts human life a little above the level of farce, and gives it some of the grace of tragedy.”50

Popular science was itself evolving. It was more than information, more than entertainment, more than a call to activism. It offered scientists a chance to draw broader conclusions about human life: to explain not just what, but who and why we are.

In some ways, popular science did succumb to the “traditions and conventions” of the marketplace, as the Bulletin of the Atomic Scientists had gloomily foretold. Scientists were forced to write in ways that would grab, and keep, their readers; witness the fairy-tale opening of Silent Spring (“Some evil spell had settled on the community . . . Everywhere was a shadow of death”), the vivid analogies of The First Three Minutes (“If some ill-advised giant were to wiggle the sun back and forth, we on earth would not feel the effect for eight minutes, the time required for a wave to travel at the speed of light from the sun to the earth”), and the epic first chapter of Walter Alvarez’s T. rex and the Crater of Doom, which is titled “Armageddon” and begins with an epigraph from The Lord of the Rings.

The hostility between popular and academic science grew more nuanced and complex, but didn’t go away. “Popularization,” concluded a 1985 study of the relationship, “is traditionally seen as a low-status activity . . . something external to research which can be left to non-scientists, failed scientists, or ex-scientists.” Among scientists, the Oprah Effect became known as the Sagan Effect, “whereby one’s popularity and celebrity with the general public were thought to be inversely proportional to the quantity and quality of real science being done.”51

Science writing, increasingly, traveled down two different paths: one broad and well-trodden, the other narrow and high-walled. New discoveries and groundbreaking theories were first floated to other scientists in journals, articles, and conference talks, and slowly disseminated through the scientific community. Only then did they take book form and enter the general consciousness. James Gleick’s best-selling Chaos: Making a New Science came out in 1987, twelve years after the mathematicians Tien-Yien Li and James A. Yorke used the term chaos theory in their technical paper about nonlinear equations, and twenty-four years after Edward Lorenz had first described the phenomenon. And Stephen Hawking’s cosmology overview A Brief History of Time, published in 1988, sold over 10 million copies—but contained nothing revolutionary at all.

T. rex and the Crater of Doom, Walter Alvarez’s widely read account of his detective work in finding traces of the asteroid that (theoretically) wiped out the dinosaurs, came out in 1997, seventeen years after Alvarez and his colleagues first published their theory as an academic paper (“Extraterrestrial Cause for the Cretaceous-Tertiary Extinction”). Alvarez’s dramatic scenarios (“Doom was coming out of the sky . . . Entire forests were ignited, and continent-sized wildfires swept across the lands . . . [A] wall of water . . . towered above the shorelines”) were immediately incorporated into the movies Deep Impact and Armageddon, sparking an entire subgenre of films about the end of the earth—and also gave rise to academic conferences (such as 2009’s “Near-Earth Objects: Risks, Responses and Opportunities,” hosted by the University of Nebraska–Lincoln) and at least one multinational committee tasked with “establishing global frameworks to respond to NEO threats.” Popular science writing had not only grasped the public imagination; it had altered public policy—and even turned back to shape the academy.52

HOW TO READ SCIENCE