Advanced Calculus of Several Variables (1973)

Part I. Euclidean Space and Linear Mappings

Chapter 3. INNER PRODUCTS AND ORTHOGONALITY

In order to obtain the full geometric structure of ![]() n (including the concepts of distance, angles, and orthogonality), we must supply

n (including the concepts of distance, angles, and orthogonality), we must supply ![]() n with an inner product. An inner (scalar) product on the vector space V is a function V × V →

n with an inner product. An inner (scalar) product on the vector space V is a function V × V → ![]() , which associates with each pair (x, y) of vectors in V a real number

, which associates with each pair (x, y) of vectors in V a real number ![]() x, y

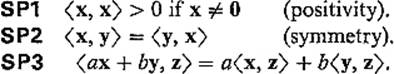

x, y![]() , and satisfies the following three conditions:

, and satisfies the following three conditions:

The third of these conditions is linearity in the first variable; symmetry then gives linearity in the second variable also. Thus an inner product on V is simply a positive, symmetric, bilinear function on V × V. Note that SP3 implies that $\langle 0, 0 \rangle = 0$ (see Exercise 3.1).

The usual inner product on $\mathbb{R}^n$ is denoted by x · y and is defined by

$$x \cdot y = x_1 y_1 + \cdots + x_n y_n,$$

where $x = (x_1, \ldots, x_n)$, $y = (y_1, \ldots, y_n)$. It should be clear that this definition satisfies conditions SP1, SP2, SP3 above. There are many inner products on $\mathbb{R}^n$ (see Example 2 below), but we shall use only the usual one.

Example 1 Denote by $\mathcal{C}[a, b]$ the vector space of all continuous functions on the interval [a, b], and define

$$\langle f, g \rangle = \int_a^b f(t)\,g(t)\,dt$$

for any pair of functions $f, g \in \mathcal{C}[a, b]$. It is obvious that this definition satisfies conditions SP2 and SP3. It also satisfies SP1, because if $f(t_0) \neq 0$, then by continuity $(f(t))^2 > 0$ for all t in some neighborhood of $t_0$, so

$$\langle f, f \rangle = \int_a^b (f(t))^2\,dt > 0.$$

Therefore we have an inner product on $\mathcal{C}[a, b]$.

Example 2 Let a, b, c be real numbers with $a > 0$, $ac - b^2 > 0$, so that the quadratic form $q(x) = ax_1^2 + 2bx_1x_2 + cx_2^2$ is positive-definite (see Section II.4). Then $\langle x, y \rangle = ax_1y_1 + bx_1y_2 + bx_2y_1 + cx_2y_2$ defines an inner product on $\mathbb{R}^2$ (why?). With a = c = 1, b = 0 we obtain the usual inner product on $\mathbb{R}^2$.
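The conditions SP1 to SP3 can be spot-checked numerically for the form of Example 2. The following is only a sketch on a few sample vectors, not a proof; the helper name `make_inner` and the sample values a = 2, b = 1, c = 3 are assumptions chosen for illustration.

```python
def make_inner(a, b, c):
    """Return the function <x, y> = a*x1*y1 + b*x1*y2 + b*x2*y1 + c*x2*y2 on R^2."""
    if not (a > 0 and a * c - b * b > 0):
        raise ValueError("need a > 0 and ac - b^2 > 0")

    def inner(x, y):
        return a * x[0] * y[0] + b * x[0] * y[1] + b * x[1] * y[0] + c * x[1] * y[1]

    return inner


inner = make_inner(2, 1, 3)          # illustrative coefficients (hypothetical)
x, y, z = (1, -2), (3, 5), (-4, 1)   # illustrative sample vectors

# SP1: positivity on a nonzero vector
positive = inner(x, x) > 0
# SP2: symmetry
symmetric = inner(x, y) == inner(y, x)
# SP3: linearity in the first variable, tested on the combination 2x + 3y
combo = (2 * x[0] + 3 * y[0], 2 * x[1] + 3 * y[1])
linear = inner(combo, z) == 2 * inner(x, z) + 3 * inner(y, z)

# With a = c = 1, b = 0 the form reduces to the usual inner product.
usual = make_inner(1, 0, 1)
```

Integer inputs keep the arithmetic exact, so the equality tests are not affected by rounding.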

An inner product on the vector space V yields a notion of the length or “size” of a vector ![]() , called its norm

, called its norm ![]() x

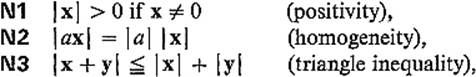

x![]() . In general, a norm on the vector space V is a real-valued function x →

. In general, a norm on the vector space V is a real-valued function x → ![]() x

x![]() on V satisfying the following conditions:

on V satisfying the following conditions:

for all ![]() and

and ![]() . Note that N2 implies that

. Note that N2 implies that ![]() 0

0![]() = 0.

= 0.

The norm associated with the inner product $\langle\ ,\ \rangle$ on V is defined by

$$|x| = \sqrt{\langle x, x \rangle}. \tag{2}$$

It is clear that SP1–SP3 and this definition imply conditions N1 and N2, but the triangle inequality is not so obvious; it will be verified below.

The most commonly used norm on $\mathbb{R}^n$ is the Euclidean norm

$$|x| = \left(x_1^2 + \cdots + x_n^2\right)^{1/2},$$

which comes in the above way from the usual inner product on $\mathbb{R}^n$. Other norms on $\mathbb{R}^n$, not necessarily associated with inner products, are occasionally employed, but henceforth $|x|$ will denote the Euclidean norm unless otherwise specified.

Example 3 $|x| = \max\{|x_1|, \ldots, |x_n|\}$, the maximum of the absolute values of the coordinates of x, defines a norm on $\mathbb{R}^n$ (see Exercise 3.2).

Example 4 $|x|_1 = |x_1| + |x_2| + \cdots + |x_n|$ defines still another norm on $\mathbb{R}^n$ (again see Exercise 3.2).
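The three norms met so far (the Euclidean norm and those of Examples 3 and 4) are easy to compare on a concrete vector. The sketch below uses hypothetical function names and checks the triangle inequality N3 on one sample pair only; it illustrates the definitions and proves nothing.

```python
import math


def euclidean_norm(x):
    """Square root of the sum of squared coordinates."""
    return math.sqrt(sum(t * t for t in x))


def max_norm(x):
    """Example 3: largest absolute value of a coordinate."""
    return max(abs(t) for t in x)


def one_norm(x):
    """Example 4: sum of the absolute values of the coordinates."""
    return sum(abs(t) for t in x)


x = (3.0, -4.0)
y = (1.0, 2.0)
s = tuple(a + b for a, b in zip(x, y))  # the vector x + y

# N3 checked on this one pair for each norm.
triangle_ok = all(
    norm(s) <= norm(x) + norm(y)
    for norm in (euclidean_norm, max_norm, one_norm)
)
```

For x = (3, −4) the three functions return 5, 4, and 7 respectively, which shows that the norms genuinely differ.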

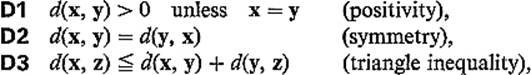

A norm on V provides a definition of the distance d(x, y) between any two points x and y of V:

$$d(x, y) = |x - y|.$$

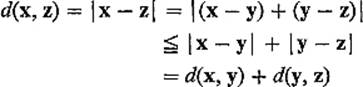

Note that a distance function d defined in this way satisfies the following three conditions:

for any three points x, y, z. Conditions D1 and D2 follow immediately from N1 and N2, respectively, while

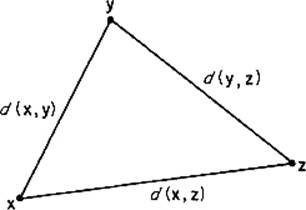

by N3. Figure 1.1 indicates why N3 (or D3) is referred to as the triangle inequality.

Figure 1.1

The distance function that comes in this way from the Euclidean norm is the familiar Euclidean distance function

$$d(x, y) = \left[(x_1 - y_1)^2 + \cdots + (x_n - y_n)^2\right]^{1/2}.$$

Thus far we have seen that an inner product on the vector space V yields a norm on V, which in turn yields a distance function on V, except that we have not yet verified that the norm associated with a given inner product does indeed satisfy the triangle inequality. The triangle inequality will follow from the Cauchy–Schwarz inequality of the following theorem.

Theorem 3.1 If $\langle\ ,\ \rangle$ is an inner product on a vector space V, then

$$|\langle x, y \rangle| \leq |x|\,|y|$$

for all $x, y \in V$ [where the norm is the one defined by (2)].

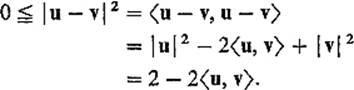

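PROOF The inequality is trivial if either x or y is zero, so assume neither is. If $u = x/|x|$ and $v = y/|y|$, then $|u| = |v| = 1$. Hence

$$0 \leq |u - v|^2 = \langle u - v, u - v \rangle = \langle u, u \rangle - 2\langle u, v \rangle + \langle v, v \rangle = 2 - 2\langle u, v \rangle.$$

So $\langle u, v \rangle \leq 1$, that is, $\langle x, y \rangle / (|x|\,|y|) \leq 1$, or

$$\langle x, y \rangle \leq |x|\,|y|.$$

Replacing x by −x, we obtain

$$-\langle x, y \rangle \leq |x|\,|y|$$

also, so the inequality follows. ∎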

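The Cauchy–Schwarz inequality is of fundamental importance. With the usual inner product in $\mathbb{R}^n$, it takes the form

$$\left(\sum_{i=1}^n x_i y_i\right)^2 \leq \left(\sum_{i=1}^n x_i^2\right)\left(\sum_{i=1}^n y_i^2\right),$$

while in $\mathcal{C}[a, b]$, with the inner product of Example 1, it becomes

$$\left(\int_a^b f(t)\,g(t)\,dt\right)^2 \leq \left(\int_a^b (f(t))^2\,dt\right)\left(\int_a^b (g(t))^2\,dt\right).$$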
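As a quick sanity check of the Cauchy–Schwarz inequality for the usual inner product, one can compute the difference between the two sides of its squared form. The sketch below uses a hypothetical helper `cauchy_schwarz_gap` and integer vectors so that the arithmetic is exact; the sample vectors are illustrative.

```python
def dot(x, y):
    """Usual inner product on R^n."""
    return sum(a * b for a, b in zip(x, y))


def cauchy_schwarz_gap(x, y):
    """Return (x.x)(y.y) - (x.y)^2, which the inequality says is never negative."""
    return dot(x, x) * dot(y, y) - dot(x, y) ** 2


gap_generic = cauchy_schwarz_gap((1, 2, 3), (4, -5, 6))   # 14*77 - 12^2 = 934
gap_parallel = cauchy_schwarz_gap((1, 2, 3), (2, 4, 6))   # 14*56 - 28^2 = 0
```

In these two samples the gap is strictly positive for the non-parallel pair and zero when one vector is a multiple of the other.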

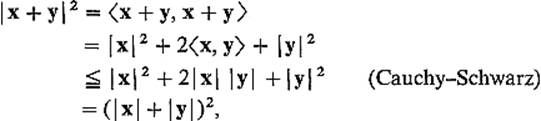

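PROOF OF THE TRIANGLE INEQUALITY Given $x, y \in V$, note that

$$|x + y|^2 = \langle x + y, x + y \rangle = \langle x, x \rangle + 2\langle x, y \rangle + \langle y, y \rangle \leq |x|^2 + 2|x|\,|y| + |y|^2 = (|x| + |y|)^2,$$

which implies that $|x + y| \leq |x| + |y|$. ∎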

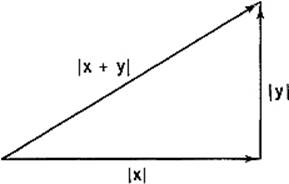

Notice that, if $\langle x, y \rangle = 0$, in which case x and y are perpendicular (see the definition below), then the second equality in the above proof gives

$$|x + y|^2 = |x|^2 + |y|^2.$$

This is the famous theorem associated with the name of Pythagoras (Fig. 1.2).

Figure 1.2

Recalling the formula $x \cdot y = |x|\,|y| \cos\theta$ for the usual inner product in $\mathbb{R}^2$, we are motivated to define the angle $\angle(x, y)$ between the nonzero vectors $x, y \in V$ by

$$\angle(x, y) = \arccos \frac{\langle x, y \rangle}{|x|\,|y|} \in [0, \pi].$$

Notice that this makes sense because $\langle x, y \rangle / (|x|\,|y|) \in [-1, 1]$ by the Cauchy–Schwarz inequality. In particular we say that x and y are orthogonal (or perpendicular) if and only if $\langle x, y \rangle = 0$, because then $\angle(x, y) = \arccos 0 = \pi/2$.
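The definition of the angle translates directly into a few lines of code for the usual inner product on $\mathbb{R}^n$. In this sketch the function names are illustrative, and the clamping of the cosine to [−1, 1] is an added safeguard against floating-point rounding, not part of the mathematical definition.

```python
import math


def dot(x, y):
    """Usual inner product on R^n."""
    return sum(a * b for a, b in zip(x, y))


def norm(x):
    """Euclidean norm obtained from the usual inner product."""
    return math.sqrt(dot(x, x))


def angle(x, y):
    """Angle in [0, pi] between two nonzero vectors."""
    nx, ny = norm(x), norm(y)
    if nx == 0 or ny == 0:
        raise ValueError("the angle is undefined for the zero vector")
    cosine = dot(x, y) / (nx * ny)
    # Cauchy-Schwarz guarantees |cosine| <= 1 exactly; rounding may overshoot.
    cosine = max(-1.0, min(1.0, cosine))
    return math.acos(cosine)


right_angle = angle((1, 0), (0, 1))   # orthogonal vectors
diagonal = angle((1, 0), (1, 1))      # expected to be close to pi/4
```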

A set of nonzero vectors $v_1, v_2, \ldots$ in V is said to be an orthogonal set if

$$\langle v_i, v_j \rangle = 0$$

whenever i ≠ j. If in addition each $v_i$ is a unit vector, $\langle v_i, v_i \rangle = 1$, then the set is said to be orthonormal.

Example 5 The standard basis vectors $e_1, \ldots, e_n$ form an orthonormal set in $\mathbb{R}^n$.

Example 6 The (infinite) set of functions

$$1,\ \cos x,\ \sin x,\ \ldots,\ \cos nx,\ \sin nx,\ \ldots$$

is orthogonal in $\mathcal{C}[-\pi, \pi]$ (see Example 1 and Exercise 3.11). This fact is the basis for the theory of Fourier series.

The most important property of orthogonal sets is given by the following result.

Theorem 3.2 Every finite orthogonal set of nonzero vectors is linearly independent.

PROOF Suppose that

$$a_1 v_1 + \cdots + a_k v_k = 0. \tag{3}$$

Taking the inner product with $v_i$, we obtain

$$a_i \langle v_i, v_i \rangle = 0,$$

because $\langle v_i, v_j \rangle = 0$ for i ≠ j if the vectors $v_1, \ldots, v_k$ are orthogonal. But $\langle v_i, v_i \rangle \neq 0$, so $a_i = 0$. Thus (3) implies $a_1 = \cdots = a_k = 0$, so the orthogonal vectors $v_1, \ldots, v_k$ are linearly independent. ∎

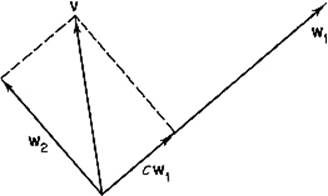

We now describe the important Gram–Schmidt orthogonalization process for constructing orthogonal bases. It is motivated by the following elementary construction. Given two linearly independent vectors v and $w_1$, we want to find a nonzero vector $w_2$ that lies in the subspace spanned by v and $w_1$, and is orthogonal to $w_1$. Figure 1.3 suggests that such a vector $w_2$ can be obtained by subtracting from v an appropriate multiple $cw_1$ of $w_1$. To determine c, we simply solve the equation $\langle w_1, v - cw_1 \rangle = 0$ for $c = \langle v, w_1 \rangle / \langle w_1, w_1 \rangle$. The desired vector is therefore

$$w_2 = v - \frac{\langle v, w_1 \rangle}{\langle w_1, w_1 \rangle}\, w_1,$$

obtained by subtracting from v the “component of v parallel to $w_1$.” We immediately verify that $\langle w_2, w_1 \rangle = 0$, while $w_2 \neq 0$ because v and $w_1$ are linearly independent.

Figure 1.3

Theorem 3.3 If V is a finite-dimensional vector space with an inner product, then V has an orthogonal basis.

In particular, every subspace of $\mathbb{R}^n$ has an orthogonal basis.

PROOF We start with an arbitrary basis $v_1, \ldots, v_n$ for V. Let $w_1 = v_1$. Then, by the preceding construction, the nonzero vector

$$w_2 = v_2 - \frac{\langle v_2, w_1 \rangle}{\langle w_1, w_1 \rangle}\, w_1$$

is orthogonal to $w_1$ and lies in the subspace generated by $v_1$ and $v_2$.

Suppose inductively that we have found an orthogonal basis $w_1, \ldots, w_k$ for the subspace of V that is generated by $v_1, \ldots, v_k$. The idea is then to obtain $w_{k+1}$ by subtracting from $v_{k+1}$ its components parallel to each of the vectors $w_1, \ldots, w_k$. That is, define

$$w_{k+1} = v_{k+1} - c_1 w_1 - \cdots - c_k w_k,$$

where $c_i = \langle v_{k+1}, w_i \rangle / \langle w_i, w_i \rangle$. Then $\langle w_{k+1}, w_i \rangle = \langle v_{k+1}, w_i \rangle - c_i \langle w_i, w_i \rangle = 0$ for $i \leq k$, and $w_{k+1} \neq 0$, because otherwise $v_{k+1}$ would be a linear combination of the vectors $w_1, \ldots, w_k$, and therefore of the vectors $v_1, \ldots, v_k$. It follows that the vectors $w_1, \ldots, w_{k+1}$ form an orthogonal basis for the subspace of V that is generated by $v_1, \ldots, v_{k+1}$.

After a finite number of such steps we obtain the desired orthogonal basis $w_1, \ldots, w_n$ for V. ∎

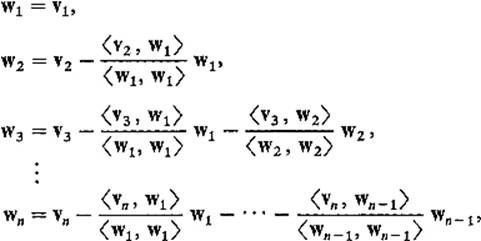

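It is the method of proof of Theorem 3.3 that is known as the Gram–Schmidt orthogonalization process, summarized by the equations

$$\begin{aligned}
w_1 &= v_1, \\
w_2 &= v_2 - \frac{\langle v_2, w_1 \rangle}{\langle w_1, w_1 \rangle}\, w_1, \\
w_3 &= v_3 - \frac{\langle v_3, w_1 \rangle}{\langle w_1, w_1 \rangle}\, w_1 - \frac{\langle v_3, w_2 \rangle}{\langle w_2, w_2 \rangle}\, w_2, \\
&\ \ \vdots \\
w_n &= v_n - \frac{\langle v_n, w_1 \rangle}{\langle w_1, w_1 \rangle}\, w_1 - \cdots - \frac{\langle v_n, w_{n-1} \rangle}{\langle w_{n-1}, w_{n-1} \rangle}\, w_{n-1},
\end{aligned}$$

defining the orthogonal basis $w_1, \ldots, w_n$ in terms of the original basis $v_1, \ldots, v_n$.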

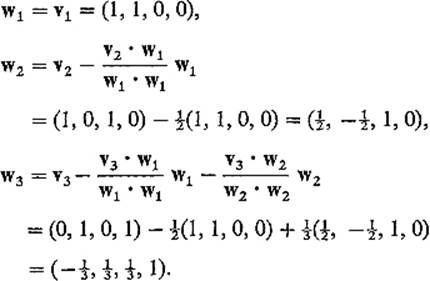

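Example 7 To find an orthogonal basis for the subspace V of $\mathbb{R}^4$ spanned by the vectors $v_1 = (1, 1, 0, 0)$, $v_2 = (1, 0, 1, 0)$, $v_3 = (0, 1, 0, 1)$, we write

$$\begin{aligned}
w_1 &= v_1 = (1, 1, 0, 0), \\
w_2 &= v_2 - \frac{v_2 \cdot w_1}{w_1 \cdot w_1}\, w_1 = (1, 0, 1, 0) - \tfrac{1}{2}(1, 1, 0, 0) = \left(\tfrac{1}{2}, -\tfrac{1}{2}, 1, 0\right), \\
w_3 &= v_3 - \frac{v_3 \cdot w_1}{w_1 \cdot w_1}\, w_1 - \frac{v_3 \cdot w_2}{w_2 \cdot w_2}\, w_2 = (0, 1, 0, 1) - \tfrac{1}{2}(1, 1, 0, 0) + \tfrac{1}{3}\left(\tfrac{1}{2}, -\tfrac{1}{2}, 1, 0\right) = \left(-\tfrac{1}{3}, \tfrac{1}{3}, \tfrac{1}{3}, 1\right).
\end{aligned}$$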
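The Gram–Schmidt process is mechanical enough to be carried out by a short program. The following is a minimal sketch for the usual inner product on $\mathbb{R}^n$, applied to the three vectors of Example 7. The function names are illustrative, and exact rational arithmetic (`fractions.Fraction`) is an implementation choice made here to avoid rounding.

```python
from fractions import Fraction


def dot(x, y):
    """Usual inner product on R^n."""
    return sum(a * b for a, b in zip(x, y))


def gram_schmidt(vectors):
    """Orthogonalize a list of linearly independent vectors, in order."""
    ws = []
    for v in vectors:
        v = [Fraction(t) for t in v]
        w = list(v)
        for u in ws:
            # Subtract the component of v parallel to the earlier vector u.
            c = dot(v, u) / dot(u, u)
            w = [wi - c * ui for wi, ui in zip(w, u)]
        if all(t == 0 for t in w):
            raise ValueError("input vectors are linearly dependent")
        ws.append(w)
    return ws


w1, w2, w3 = gram_schmidt([(1, 1, 0, 0), (1, 0, 1, 0), (0, 1, 0, 1)])
# w1 = (1, 1, 0, 0), w2 = (1/2, -1/2, 1, 0), w3 = (-1/3, 1/3, 1/3, 1)
```

The resulting vectors are orthogonal but not normalized; dividing each by its length would give an orthonormal basis.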

Example 8 Let $\mathcal{P}$ denote the vector space of polynomials in x, with inner product defined by

$$\langle p, q \rangle = \int_{-1}^{1} p(x)\,q(x)\,dx.$$

By applying the Gram–Schmidt orthogonalization process to the linearly independent elements $1, x, x^2, \ldots, x^n, \ldots$, one obtains an infinite sequence $\{p_n(x)\}_{n=0}^{\infty}$, the first five elements of which are

$$p_0(x) = 1,\quad p_1(x) = x,\quad p_2(x) = x^2 - \tfrac{1}{3},\quad p_3(x) = x^3 - \tfrac{3}{5}x,\quad p_4(x) = x^4 - \tfrac{6}{7}x^2 + \tfrac{3}{35}$$

(see Exercise 3.12). Upon multiplying the polynomials $\{p_n(x)\}$ by appropriate constants, one obtains the famous Legendre polynomials

$$P_0(x) = 1,\quad P_1(x) = x,\quad P_2(x) = \tfrac{1}{2}(3x^2 - 1),\quad P_3(x) = \tfrac{1}{2}(5x^3 - 3x),\quad P_4(x) = \tfrac{1}{8}(35x^4 - 30x^2 + 3),$$

etc.
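The orthogonalization of the monomials in Example 8 can be reproduced exactly by representing a polynomial as its list of coefficients (constant term first) and using the fact that the integral of $x^m$ over [−1, 1] is $2/(m+1)$ for even m and 0 for odd m. The sketch below assumes that representation; the function names are hypothetical.

```python
from fractions import Fraction


def poly_inner(p, q):
    """Integral over [-1, 1] of p(x) q(x), with p and q given as coefficient lists."""
    total = Fraction(0)
    for i, a in enumerate(p):
        for j, b in enumerate(q):
            if (i + j) % 2 == 0:  # odd powers integrate to zero on [-1, 1]
                total += a * b * Fraction(2, i + j + 1)
    return total


def orthogonalize_monomials(count):
    """Apply Gram-Schmidt to 1, x, ..., x^(count-1) in that order."""
    result = []
    for n in range(count):
        v = [Fraction(0)] * n + [Fraction(1)]  # coefficients of x^n
        p = list(v)
        for w in result:
            c = poly_inner(v, w) / poly_inner(w, w)
            padded = w + [Fraction(0)] * (len(p) - len(w))
            p = [pi - c * wi for pi, wi in zip(p, padded)]
        result.append(p)
    return result


ps = orthogonalize_monomials(5)
# ps[2] represents x^2 - 1/3 and ps[4] represents x^4 - (6/7) x^2 + 3/35
```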

One reason for the importance of orthogonal bases is the ease with which a vector $v \in V$ can be expressed as a linear combination of orthogonal basis vectors $w_1, \ldots, w_n$ for V. Writing

$$v = a_1 w_1 + \cdots + a_n w_n$$

and taking the inner product with $w_i$, we immediately obtain

$$v \cdot w_i = a_i\,(w_i \cdot w_i),$$

so

$$v = \sum_{i=1}^{n} \frac{v \cdot w_i}{w_i \cdot w_i}\, w_i.$$

This is especially simple if $w_1, \ldots, w_n$ is an orthonormal basis for V:

$$v = \sum_{i=1}^{n} (v \cdot w_i)\, w_i. \tag{5}$$
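To illustrate how easily the coefficients are found, here is a small sketch that expands a vector of $\mathbb{R}^3$ in an orthogonal (not necessarily orthonormal) basis using the quotient $(v \cdot w_i)/(w_i \cdot w_i)$. The basis, the vector, and the function name are illustrative assumptions.

```python
from fractions import Fraction


def dot(x, y):
    """Usual inner product on R^n."""
    return sum(a * b for a, b in zip(x, y))


def coefficients(v, orthogonal_basis):
    """Coefficient of each basis vector w in the expansion of v."""
    return [Fraction(dot(v, w), dot(w, w)) for w in orthogonal_basis]


basis = [(1, 1, 0), (1, -1, 0), (0, 0, 2)]  # pairwise orthogonal, integer entries
v = (3, 1, 4)

coeffs = coefficients(v, basis)  # 2, 1, 2
# Rebuild v from its coefficients as a check.
rebuilt = [sum(c * w[k] for c, w in zip(coeffs, basis)) for k in range(len(v))]
```

No linear system has to be solved: each coefficient comes from two inner products, which is exactly the convenience the text describes.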

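Of course orthonormal basis vectors are easily obtained from orthogonal ones, simply by dividing by their lengths. In this case the coefficient $v \cdot w_i$ of $w_i$ in (5) is sometimes called the Fourier coefficient of v with respect to $w_i$. This terminology is motivated by an analogy with Fourier series. The orthonormal functions in $\mathcal{C}[-\pi, \pi]$ corresponding to the orthogonal functions of Example 6 are

$$\frac{1}{\sqrt{2\pi}},\ \frac{\cos x}{\sqrt{\pi}},\ \frac{\sin x}{\sqrt{\pi}},\ \ldots,\ \frac{\cos nx}{\sqrt{\pi}},\ \frac{\sin nx}{\sqrt{\pi}},\ \ldots$$

Writing

$$\varphi_0(x) = \frac{1}{\sqrt{2\pi}},\qquad \varphi_n(x) = \frac{\cos nx}{\sqrt{\pi}},\qquad \psi_n(x) = \frac{\sin nx}{\sqrt{\pi}} \qquad (n \geq 1),$$

one defines the Fourier coefficients of $f \in \mathcal{C}[-\pi, \pi]$ by

$$a_n = \langle f, \varphi_n \rangle = \int_{-\pi}^{\pi} f(x)\,\varphi_n(x)\,dx \qquad (n \geq 0)$$

and

$$b_n = \langle f, \psi_n \rangle = \int_{-\pi}^{\pi} f(x)\,\psi_n(x)\,dx \qquad (n \geq 1).$$

It can then be established, under appropriate conditions on f, that the infinite series

$$\sum_{n=0}^{\infty} a_n \varphi_n(x) + \sum_{n=1}^{\infty} b_n \psi_n(x)$$

converges to f(x). This infinite series may be regarded as an infinite-dimensional analog of (5).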

Given a subspace V of $\mathbb{R}^n$, denote by $V^{\perp}$ the set of all those vectors in $\mathbb{R}^n$, each of which is orthogonal to every vector in V. Then it is easy to show that $V^{\perp}$ is a subspace of $\mathbb{R}^n$, called the orthogonal complement of V (Exercise 3.3). The significant fact about this situation is that the dimensions add up as they should.

Theorem 3.4 If V is a subspace of $\mathbb{R}^n$, then

$$\dim V + \dim V^{\perp} = n.$$

PROOF By Theorem 3.3, there exists an orthonormal basis $v_1, \ldots, v_r$ for V, and an orthonormal basis $w_1, \ldots, w_s$ for $V^{\perp}$. Then the vectors $v_1, \ldots, v_r, w_1, \ldots, w_s$ are orthonormal, and therefore linearly independent. So in order to conclude from Theorem 2.5 that r + s = n as desired, it suffices to show that these vectors generate $\mathbb{R}^n$. Given $x \in \mathbb{R}^n$, define

$$y = x - \sum_{i=1}^{r} (x \cdot v_i)\, v_i. \tag{7}$$

Then $y \cdot v_i = x \cdot v_i - (x \cdot v_i)(v_i \cdot v_i) = 0$ for each i = 1, . . . , r. Since y is orthogonal to each element of a basis for V, it follows easily that $y \in V^{\perp}$ (Exercise 3.4). Therefore Eq. (5) above gives

$$y = \sum_{j=1}^{s} (y \cdot w_j)\, w_j.$$

This and (7) then yield

$$x = \sum_{i=1}^{r} (x \cdot v_i)\, v_i + \sum_{j=1}^{s} (y \cdot w_j)\, w_j,$$

so the vectors $v_1, \ldots, v_r, w_1, \ldots, w_s$ constitute a basis for $\mathbb{R}^n$. ∎

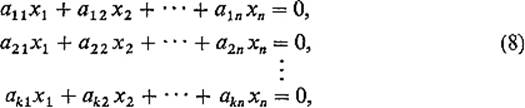

Example 9 Consider the system

$$\begin{aligned}
a_{11}x_1 + a_{12}x_2 + \cdots + a_{1n}x_n &= 0, \\
&\ \ \vdots \\
a_{k1}x_1 + a_{k2}x_2 + \cdots + a_{kn}x_n &= 0
\end{aligned} \tag{8}$$

of $k \leq n$ homogeneous linear equations in $x_1, \ldots, x_n$. If $a_i = (a_{i1}, \ldots, a_{in})$, i = 1, . . . , k, then these equations can be rewritten as

$$a_1 \cdot x = 0,\quad \ldots,\quad a_k \cdot x = 0.$$

Therefore the set S of all solutions of (8) is simply the set of all those vectors $x \in \mathbb{R}^n$ that are orthogonal to the vectors $a_1, \ldots, a_k$. If V is the subspace of $\mathbb{R}^n$ generated by $a_1, \ldots, a_k$, it follows that $S = V^{\perp}$ (Exercise 3.4). If the vectors $a_1, \ldots, a_k$ are linearly independent, we can then conclude from Theorem 3.4 that dim S = n − k.
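The dimension count of Example 9 can be checked on small systems. When the coefficient vectors are not assumed independent, Theorem 3.4 with $S = V^{\perp}$ gives dim S = n − dim V, and dim V is the rank of the coefficient matrix. The sketch below computes that rank by Gaussian elimination in exact arithmetic; elimination is a technique brought in here for illustration, not one used in this section, and the function names are hypothetical.

```python
from fractions import Fraction


def rank(rows):
    """Rank of the matrix whose rows are the given vectors (row reduction)."""
    m = [[Fraction(t) for t in row] for row in rows]
    r = 0
    ncols = len(m[0]) if m else 0
    for col in range(ncols):
        # Look for a nonzero pivot in this column at or below row r.
        pivot = next((i for i in range(r, len(m)) if m[i][col] != 0), None)
        if pivot is None:
            continue
        m[r], m[pivot] = m[pivot], m[r]
        for i in range(r + 1, len(m)):
            factor = m[i][col] / m[r][col]
            m[i] = [a - factor * b for a, b in zip(m[i], m[r])]
        r += 1
    return r


def solution_space_dim(rows):
    """dim S = n - dim V for the homogeneous system with these coefficient rows."""
    n = len(rows[0])
    return n - rank(rows)


# The single equation x1 + x2 + x3 - x4 = 0 in R^4 (the equation of Exercise 3.9).
dim_one_equation = solution_space_dim([(1, 1, 1, -1)])
# Three independent coefficient vectors in R^4.
dim_three_equations = solution_space_dim([(1, 1, 0, 0), (1, 0, 1, 0), (0, 1, 0, 1)])
# Two dependent equations in R^3 count only once.
dim_dependent = solution_space_dim([(1, 2, 3), (2, 4, 6)])
```

The first call returns 3, in agreement with the 3-dimensional solution space mentioned in Exercise 3.9.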

Exercises

3.1 Conclude from condition SP3 that $\langle 0, 0 \rangle = 0$.

3.2 Verify that the functions defined in Examples 3 and 4 are norms on $\mathbb{R}^n$.

3.3 If V is a subspace of $\mathbb{R}^n$, prove that $V^{\perp}$ is also a subspace.

3.4 If the vectors $a_1, \ldots, a_k$ generate the subspace V of $\mathbb{R}^n$, and $x \in \mathbb{R}^n$ is orthogonal to each of these vectors, show that $x \in V^{\perp}$.

3.5 Verify the “polarization identity” $x \cdot y = \tfrac{1}{4}\left(|x + y|^2 - |x - y|^2\right)$.

3.6 Let $a_1, a_2, \ldots, a_n$ be an orthonormal basis for $\mathbb{R}^n$. If $x = s_1a_1 + \cdots + s_na_n$ and $y = t_1a_1 + \cdots + t_na_n$, show that $x \cdot y = s_1t_1 + \cdots + s_nt_n$. That is, in computing x · y, one may replace the coordinates of x and y by their components relative to any orthonormal basis for $\mathbb{R}^n$.

3.7 Orthogonalize the basis (1, 0, 0, 1), (−1, 0, 2, 1), (0, 1, 2, 0), (0, 0, −1, 1) in $\mathbb{R}^4$.

3.8 Orthogonalize the basis

![]()

in $\mathbb{R}^n$.

3.9 Find an orthogonal basis for the 3-dimensional subspace V of $\mathbb{R}^4$ that consists of all solutions of the equation $x_1 + x_2 + x_3 - x_4 = 0$. Hint: Orthogonalize the vectors $v_1 = (1, 0, 0, 1)$, $v_2 = (0, 1, 0, 1)$, $v_3 = (0, 0, 1, 1)$.

3.10 Consider the two equations

![]()

Let V be the set of all solutions of (*) and W the set of all solutions of both equations. Then W is a 2-dimensional subspace of the 3-dimensional subspace V of $\mathbb{R}^4$ (why?).

(a) Solve (*) and (**) to find a basis $v_1, v_2$ for W.

(b) Find by inspection a vector $v_3$ which is in V but not in W. Why is $v_1, v_2, v_3$ then a basis for V?

(c) Orthogonalize $v_1, v_2, v_3$ to obtain an orthogonal basis $w_1, w_2, w_3$ for V, with $w_1$ and $w_2$ in W.

(d) Normalize $w_1, w_2, w_3$ to obtain an orthonormal basis $u_1, u_2, u_3$ for V. Express v = (11, 3, 6, −11) as a linear combination of $u_1, u_2, u_3$.

(e) Find vectors ![]() and ![]() such that v = x + y.

3.11 Show that the functions

$$1,\ \cos x,\ \sin x,\ \ldots,\ \cos nx,\ \sin nx,\ \ldots$$

are orthogonal in the inner product space $\mathcal{C}[-\pi, \pi]$ of Example 1.

3.12 Orthogonalize in $\mathcal{P}$ the functions $1, x, x^2, x^3, x^4$ to obtain the polynomials $p_0(x), \ldots, p_4(x)$ listed in Example 8.